没有合适的资源?快使用搜索试试~ 我知道了~

A multilinear singular value decomposition.pdf

温馨提示

试读

27页

We discuss a multilinear generalization of the singular value decomposition. There is a strong analogy between several properties of the matrix and the higher-order tensor decomposition; uniqueness, link with the matrix eigenvalue decomposition, first-order perturbation effects, etc., are analyzed. We investigate how tensor symmetries affect the decomposition and propose a multilinear generalization of the symmetric eigenvalue decomposition for pair-wise symmetric tensors

资源推荐

资源详情

资源评论

A MULTILINEAR SINGULAR VALUE DECOMPOSITION

∗

LIEVEN DE LATHAUWER

†

, BART DE MOOR

†

, AND JOOS VANDEWALLE

†

SIAM J. M

ATRIX ANAL. APPL.

c

2000 Society for Industrial and Applied Mathematics

Vol. 21, No. 4, pp. 1253–1278

Abstract. We discuss a multilinear generalization of the singular value decomposition. There is

a strong analogy between several properties of the matrix and the higher-order tensor decomposition;

uniqueness, link with the matrix eigenvalue decomposition, first-order perturbation effects, etc., are

analyzed. We investigate how tensor symmetries affect the decomposition and propose a multilinear

generalization of the symmetric eigenvalue decomposition for pair-wise symmetric tensors.

Key words. multilinear algebra, singular value decomposition, higher-order tensor

AMS subject classifications. 15A18, 15A69

PII. S0895479896305696

1. Introduction. An increasing number of signal processing problems involve

the manipulation of quantities of which the elements are addressed by more than

two indices. In the literature these higher-order equivalents of vectors (first order)

and matrices (second order) are called higher-order tensors, multidimensional matri-

ces, or multiway arrays. For a lot of applications involving higher-order tensors, the

existing framework of vector and matrix algebra appears to be insufficient and/or

inappropriate. In this paper we present a proper generalization of the singular value

decomposition (SVD).

To a large extent, higher-order tensors are currently gaining importance due to the

developments in the field of higher-order statistics (HOS): for multivariate stochastic

variables the basic HOS (higher-order moments, cumulants, spectra and cepstra) are

highly symmetric higher-order tensors, in the same way as, e.g., the covariance of a

stochastic vector is a symmetric (Hermitean) matrix. A brief enumeration of some

opportunities offered by HOS gives an idea of the promising role of higher-order

tensors on the signal processing scene. It is clear that statistical descriptions of random

processes are more accurate when, in addition to first- and second-order statistics, they

take HOS into account; from a mathematical point of view this is reflected by the fact

that moments and cumulants correspond, within multiplication with a fixed scalar,

to the subsequent coefficients of a Taylor series expansion of the first, resp., second,

characteristic function of the stochastic variable. In statistical nonlinear system theory

HOS are even unavoidable (e.g., the autocorrelation of x

2

is a fourth-order moment).

∗

Received by the editors June 24, 1996; accepted for publication (in revised form) by B. Kagstrom

January 4, 1999; published electronically April 18, 2000. This research was partially supported by

the Flemish Government (the Concerted Research Action GOA-MIPS, the FWO (Fund for Scientific

Research - Flanders) projects G.0292.95, G.0262.97, and G.0240.99, the FWO Research Communi-

ties ICCoS (Identification and Control of Complex Systems) and Advanced Numerical Methods for

Mathematical Modelling, the IWT Action Programme on Information Technology (ITA/GBO/T23)-

IT-Generic Basic Research), the Belgian State, Prime Minister’s Office - Federal Office for Scientific,

Technical and Cultural Affairs (the Interuniversity Poles of Attraction Programmes IUAP P4-02 and

IUAP P4-24) and the European Commission (Human Capital and Mobility Network SIMONET,

SC1-CT92-0779). The first author is a post-doctoral Research Assistant with the F.W.O. The sec-

ond author is a senior Research Associate with the F.W.O. and an Associate Professor at the K.U.

Leuven. The third author is a Full Professor at the K.U. Leuven. The scientific responsibility is

assumed by the authors.

http://www.siam.org/journals/simax/21-4/30569.html

†

K.U. Leuven, E.E. Dept., ESAT–SISTA/COSIC, Kard. Mercierlaan 94, B-3001 Leuven (Hever-

lee), Belgium (Lieven.DeLathauwer@esat.kuleuven.ac.be, Bart.DeMoor@esat.kuleuven.ac.be, Joos.

Vandewalle@esat.kuleuven.ac.be).

1253

1254 L. DE LATHAUWER, B. DE MOOR, AND J. VANDEWALLE

Moreover, higher-order cumulants and spectra of a random variable are insensitive

to an additive Gaussian perturbation of that variable, which is exploited in higher-

order-only techniques, conceptually blind for Gaussian noise. HOS make it possible

to solve the source separation (SS) problem by mere exploitation of the statistical

independence of the sources, without knowledge of the array manifold; they also

contain sufficient information to allow a blind identification of linear filters, without

making assumptions on the minimum/nonminimum phase character, etc. (for general

aspects of HOS, the interested reader is referred to the tutorial papers [34, 37, 38, 30]

and the bibliography [41]; for the use of tensor decompositions as a basic tool in

HOS-based signal processing, we refer to [11, 12, 9, 19]).

Higher-order tensors do not merely play an important role in HOS. As a matter

of fact they seem to be used in the most various disciplines, like chemometrics, psy-

chometrics, econometrics, image processing, biomedical signal processing, etc. Most

often they appear in factor analysis-type problems of multiway datasets [13]. Another,

more trivial, use is as a formalism to describe linear vector-matrix, matrix-vector,

matrix-matrix, . . . transformations, in the same way as matrices describe linear trans-

formations between vector spaces. Interesting also is the fact that higher-order terms

in the Taylor series expansion of a multivariate function and higher-order Volterra

filter kernels have a tensor form.

On the other hand, one of the most fruitful developments in the world of linear

algebra and linear algebra-based signal processing is the concept of the SVD of ma-

trices [21, 35, 44]. In this paper we will discuss a multilinear generalization that has

also been investigated in psychometrics as the Tucker model, originally developed to

obtain a “method for searching for relations in a body of data,” for the case “each

person in a group of individuals is measured on each trait in a group of traits by each

of a number of methods,” or “when individuals are measured by a battery of measures

on a number of occasions” [42, 43]. For three-way data, the Tucker model consists of

decomposing a real (I

1

× I

2

× I

3

)-tensor A according to

a

i

1

i

2

i

3

=

I

1

j

1

I

2

j

2

I

3

j

3

s

j

1

j

2

j

3

u

(1)

i

1

j

1

u

(2)

i

2

j

2

u

(3)

i

3

j

3

,(1)

in which u

(1)

i

1

j

1

,u

(2)

i

2

j

2

,u

(3)

i

3

j

3

are entries of orthogonal matrices, and S is a real (I

1

×

I

2

× I

3

)-tensor with the property of “all-orthogonality,” i.e.,

i

1

i

2

s

i

1

i

2

α

s

i

1

i

2

β

=

i

1

i

3

s

i

1

αi

3

s

i

1

βi

3

=

i

2

i

3

s

αi

2

i

3

s

βi

2

i

3

= 0 whenever α = β. This decomposition is

virtually unknown in the communities of numerical algebra and signal processing; on

the other hand, the viewpoint and language in psychometrics is somewhat different

from what is common in our field. It is the aim of this paper to derive the tensor

decomposition in an SVD terminology, using a notation that is a natural extension of

the notation used for matrices. As already mentioned, we will show that the Tucker

model is actually a convincing multilinear generalization of the SVD concept itself.

From our algebraic point of view, we will ask a number of inevitable questions such as

what the geometric link is between the generalized singular vectors/values and the col-

umn, row, ...vectors (oriented energy), how the concept of rank lies to the structure

of the decomposition, whether the best reduced-rank approximation property carries

over, and so on. Our derivations are valid for tensors of arbitrary order and hold

for the complex-valued case too. In view of the many striking analogies between the

matrix SVD and its multilinear generalization, we use the term higher-order singular

value decomposition (HOSVD) in this paper; note at this point that the existence of

A MULTILINEAR SINGULAR VALUE DECOMPOSITION 1255

different multilinear SVD extensions may not be excluded—as a matter of fact, focus-

ing on different properties of the matrix SVD does lead to the definition of different

(formally less striking) multilinear generalizations, as we will explain later on.

In our own research the HOSVD has already proved its value. In [14] we showed

that the decomposition is fundamentally related to the problem of blind source sep-

aration, also known as independent component analysis (ICA). In [18] we used the

decomposition to compute an initial value for a tensorial equivalent of the power

method, aiming at the computation of the best rank-1 approximation of a given

tensor; a high-performant ICA-algorithm was based on this technique. In [19] the

HOSVD was used in a dimensionality reduction for higher-order factor analysis-type

problems, reducing the computational complexity. The current paper however is the

first systematic, elaborated presentation of the HOSVD concept.

The paper is organized as follows. In section 2 we introduce some preliminary

material on multilinear algebra, needed for the further developments. The HOSVD

model is presented in section 3 and compared to its matrix counterpart. In section 4

we discuss some well-known SVD properties and demonstrate that they have striking

higher-order counterparts. In section 5 we investigate how the HOSVD is influenced

by symmetry properties of the higher-order tensor; in analogy with the eigenvalue

decomposition (EVD) of symmetric (Hermitean) matrices we define a higher-order

eigenvalue decomposition (HOEVD) for “pair-wise symmetric” higher-order tensors.

Section 6 contains a first-order perturbation analysis and section 7 quotes some alter-

native ways to generalize the SVD.

Before starting with the next section, we would like to add a comment on the

notation that is used. To facilitate the distinction between scalars, vectors, matrices,

and higher-order tensors, the type of a given quantity will be reflected by its rep-

resentation: scalars are denoted by lower-case letters (a, b, ...; α, β, ...), vectors

are written as capitals (A, B, ...) (italic shaped), matrices correspond to bold-face

capitals (A, B, ...), and tensors are written as calligraphic letters (A, B, ...). This

notation is consistently used for lower-order parts of a given structure. For example,

the entry with row index i and column index j in a matrix A, i.e., (A)

ij

, is symbolized

by a

ij

(also (A)

i

= a

i

and (A)

i

1

i

2

...i

N

= a

i

1

i

2

...i

N

); furthermore, the ith column vector

of a matrix A is denoted as A

i

, i.e., A =(A

1

A

2

...). To enhance the overall read-

ability, we have made one exception to this rule: as we frequently use the characters

i, j, r, and n in the meaning of indices (counters), I, J, R, and N will be reserved to

denote the index upper bounds, unless stated otherwise.

2. Basic definitions.

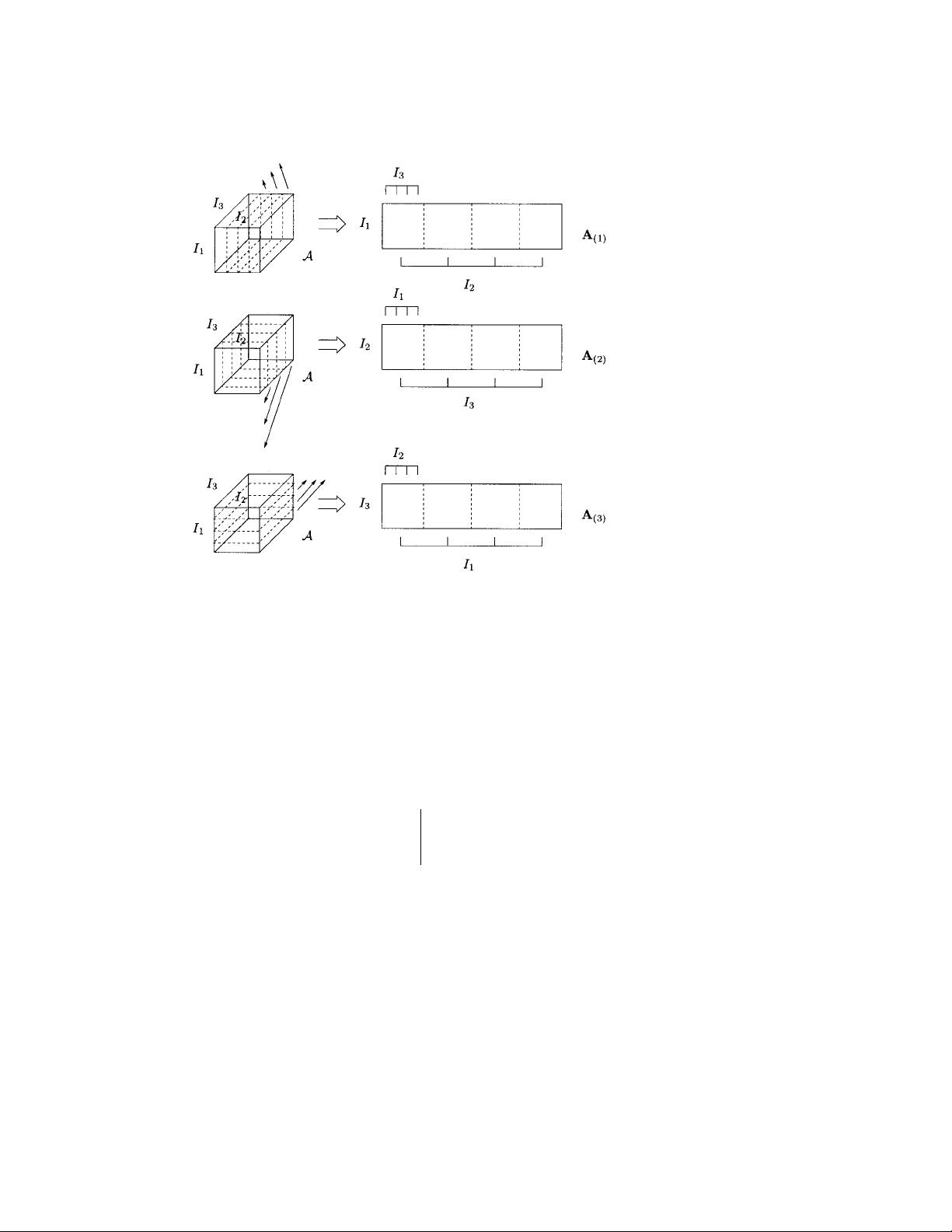

2.1. Matrix representation of a higher-order tensor. The starting point of

our derivation of a multilinear SVD will be to consider an appropriate generalization

of the link between the column (row) vectors and the left (right) singular vectors

of a matrix. To be able to formalize this idea, we define “matrix unfoldings” of

a given tensor, i.e., matrix representations of that tensor in which all the column

(row, ...) vectors are stacked one after the other. To avoid confusion, we will stick

to one particular ordering of the column (row, ...) vectors; for order three, these

unfolding procedures are visualized in Figure 1. Notice that the definitions of the

matrix unfoldings involve the tensor dimensions I

1

, I

2

, I

3

in a cyclic way and that,

when dealing with an unfolding of dimensionality I

c

× I

a

I

b

, we formally assume that

the index i

a

varies more slowly than i

b

. In general, we have the following definition.

Definition 1. Assume an Nth-order tensor A∈C

I

1

×I

2

×...×I

N

. The matrix

unfolding A

(n)

∈ C

I

n

×(I

n+1

I

n+2

...I

N

I

1

I

2

...I

n−1

)

contains the element a

i

1

i

2

...i

N

at the

1256 L. DE LATHAUWER, B. DE MOOR, AND J. VANDEWALLE

Fig. 1. Unfolding of the (I

1

× I

2

× I

3

)-tensor A to the (I

1

× I

2

I

3

)-matrix A

(1)

, the (I

2

× I

3

I

1

)-

matrix A

(2)

and the (I

3

× I

1

I

2

)-matrix A

(3)

(I

1

= I

2

= I

3

=4).

position with row number i

n

and column number equal to

(i

n+1

− 1)I

n+2

I

n+3

...I

N

I

1

I

2

...I

n−1

+(i

n+2

− 1)I

n+3

I

n+4

...I

N

I

1

I

2

...I

n−1

+ ···

+(i

N

− 1)I

1

I

2

...I

n−1

+(i

1

− 1)I

2

I

3

...I

n−1

+(i

2

− 1)I

3

I

4

...I

n−1

+ ···+ i

n−1

.

Example 1. Define a tensor A∈R

3×2×3

by a

111

= a

112

= a

211

= −a

212

=1,

a

213

= a

311

= a

313

= a

121

= a

122

= a

221

= −a

222

=2, a

223

= a

321

= a

323

=4,

a

113

= a

312

= a

123

= a

322

=0. The matrix unfolding A

(1)

is given by

A

(1)

=

110

220

1 −12

2 −24

202

404

.

2.2. Rank properties of a higher-order tensor. There are major differences

between matrices and higher-order tensors when rank properties are concerned. As we

will explain in section 3, these differences directly affect the way an SVD generalization

could look. As a matter of fact, there is not a unique way to generalize the rank

concept.

First, it is easy to generalize the notion of column and row rank. If we refer

in general to the column, row, ...vectors of an Nth-order tensor A∈C

I

1

×I

2

×...×I

N

as its “n-mode vectors,” defined as the I

n

-dimensional vectors obtained from A by

varying the index i

n

and keeping the other indices fixed, then we have the following

definition.

A MULTILINEAR SINGULAR VALUE DECOMPOSITION 1257

Definition 2. The n-rank of A, denoted by R

n

= rank

n

(A), is the dimension of

the vector space spanned by the n-mode vectors.

The n-rank of a given tensor can be analyzed by means of matrix techniques.

Property 1. The n-mode vectors of A are the column vectors of the matrix

unfolding A

(n)

and

rank

n

(A) = rank(A

(n)

).

A major difference with the matrix case, however, is the fact that the different

n-ranks of a higher-order tensor are not necessarily the same, as can easily be verified

by checking some examples (see further).

The rank of a higher-order tensor is usually defined in analogy with the fact that

a rank-R matrix can be decomposed as a sum of R rank-1 terms [12, 29].

Definition 3. An Nth-order tensor A has rank 1 when it equals the outer

product of N vectors U

(1)

, U

(2)

, ..., U

(N)

, i.e.,

a

i

1

i

2

...i

N

= u

(1)

i

1

u

(2)

i

2

...u

(N)

i

N

,

for all values of the indices.

Definition 4. The rank of an arbitrary Nth-order tensor A, denoted by R =

rank(A), is the minimal number of rank-1 tensors that yield A in a linear combina-

tion.

(With respect to the definition of a rank-1 tensor, a remark on the notation has to

be made. For matrices, the vector-vector outer product of U

(1)

and U

(2)

is denoted as

U

(1)

· U

(2)

T

; to avoid an ad hoc notation based on “generalized transposes” (in which

the fact that column vectors are transpose-free would not have an inherent meaning),

we will further denote the outer product of U

(1)

, U

(2)

, ..., U

(N)

by U

(1)

◦ U

(2)

◦···◦

U

(N)

.)

A second difference between matrices and higher-order tensors is the fact that the

rank is not necessarily equal to an n-rank, even when all the n-ranks are the same.

From the definitions it is clear that always R

n

R.

Example 2. Consider the (2 × 2 × 2)-tensor A defined by

a

111

= a

221

= a

112

=1

a

211

= a

121

= a

212

= a

122

= a

222

=0.

It follows that R

1

= R

2

=2but R

3

=1.

Example 3. Consider the (2 × 2 × 2)-tensor A defined by

a

211

= a

121

= a

112

=1

a

111

= a

222

= a

122

= a

212

= a

221

=0.

The 1-rank, 2-rank, and 3-rank are equal to 2. The rank, however, equals 3, since

A = X

2

◦ Y

1

◦ Z

1

+ X

1

◦ Y

2

◦ Z

1

+ X

1

◦ Y

1

◦ Z

2

,

in which

X

1

= Y

1

= Z

1

=

1

0

,X

2

= Y

2

= Z

2

=

0

1

is a decomposition in a minimal linear combination of rank-1 tensors (a proof is given

in [19]).

剩余26页未读,继续阅读

资源评论

liuguohua8072014-05-09非常好,很好的一篇文献,正是我需要的

liuguohua8072014-05-09非常好,很好的一篇文献,正是我需要的

xueying9827

- 粉丝: 0

- 资源: 8

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功