
Generative Adversarial Networks (GANs): Lecture Slides by the Original Author


86 pages

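Since Ian Goodfellow published the paper "Generative Adversarial Nets" in 2014, generative adversarial networks (GANs) have attracted wide attention. Yann LeCun also remarked in a Quora answer that GANs were the deep learning development he found most exciting. Together these have made GANs one of the hottest topics in machine learning in recent years; it is fair to say that anyone working in machine learning is now expected to understand them.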


Generative Adversarial Networks (GANs)

Ian Goodfellow, OpenAI Research Scientist

NIPS 2016 tutorial

Barcelona, 2016-12-4

(Goodfellow 2016)

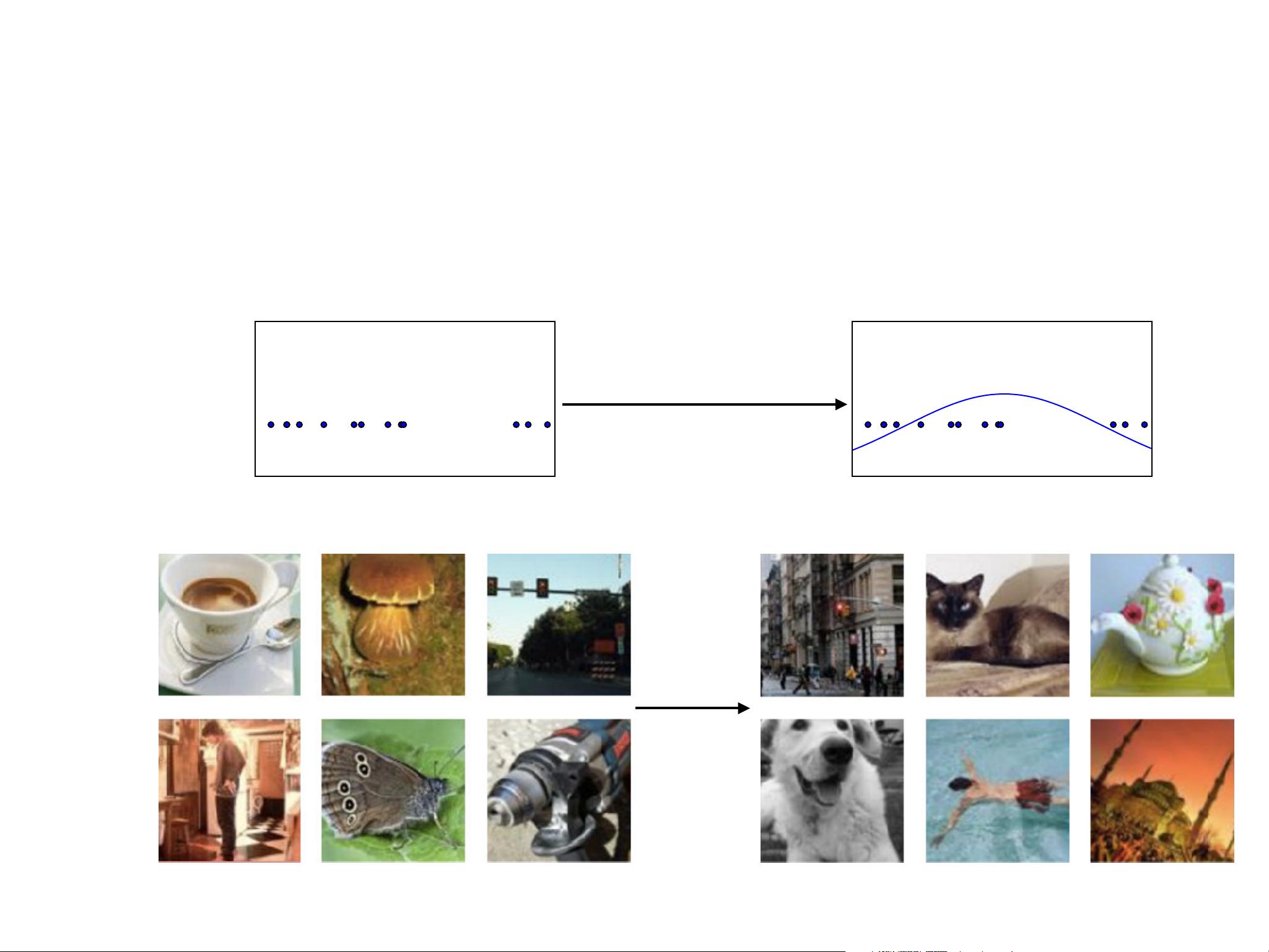

Generative Modeling

• Density estimation
• Sample generation

[Figure: Training examples | Model samples]
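The slide names two tasks, and a toy sketch can show how they differ. It assumes a hypothetical 1-D dataset drawn from a Gaussian and fits a Gaussian by maximum likelihood. No GAN is involved, and the names `fit_gaussian`, `log_density` and `sample` are illustrative only. Density estimation corresponds to evaluating the fitted log-density at a point. Sample generation corresponds to drawing new points from the fitted model.

```python
import numpy as np

def fit_gaussian(data):
    """Density estimation: maximum-likelihood mean and std of a 1-D Gaussian."""
    mu = float(np.mean(data))
    sigma = float(np.std(data))  # ddof=0 gives the maximum-likelihood estimate
    return mu, sigma

def log_density(x, mu, sigma):
    """Evaluate the log of the fitted Gaussian density at x."""
    return -0.5 * np.log(2 * np.pi * sigma**2) - (x - mu) ** 2 / (2 * sigma**2)

def sample(mu, sigma, n, rng):
    """Sample generation: draw n new points from the fitted model."""
    return rng.normal(mu, sigma, size=n)

# Hypothetical training set: 10,000 points from N(3.0, 0.5^2).
rng = np.random.default_rng(0)
training_examples = rng.normal(loc=3.0, scale=0.5, size=10_000)

mu, sigma = fit_gaussian(training_examples)
print("fitted mu, sigma:", mu, sigma)
print("log p(3.0):", log_density(3.0, mu, sigma))
print("model samples:", sample(mu, sigma, 5, rng))
```

With 10,000 training points, the fitted mean and standard deviation should land close to the generating values of 3.0 and 0.5.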


Roadmap

• Why study generative modeling?
• How do generative models work? How do GANs compare to others?
• How do GANs work?
• Tips and tricks
• Research frontiers
• Combining GANs with other methods


Why study generative models?

• Excellent test of our ability to use high-dimensional, complicated probability distributions
• Simulate possible futures for planning or simulated RL
• Missing data
• Semi-supervised learning
• Multi-modal outputs
• Realistic generation tasks


Next Video Frame Prediction

CHAPTER 15. REPRESENTATION LEARNING

[Figure panels: Ground Truth | MSE | Adversarial]

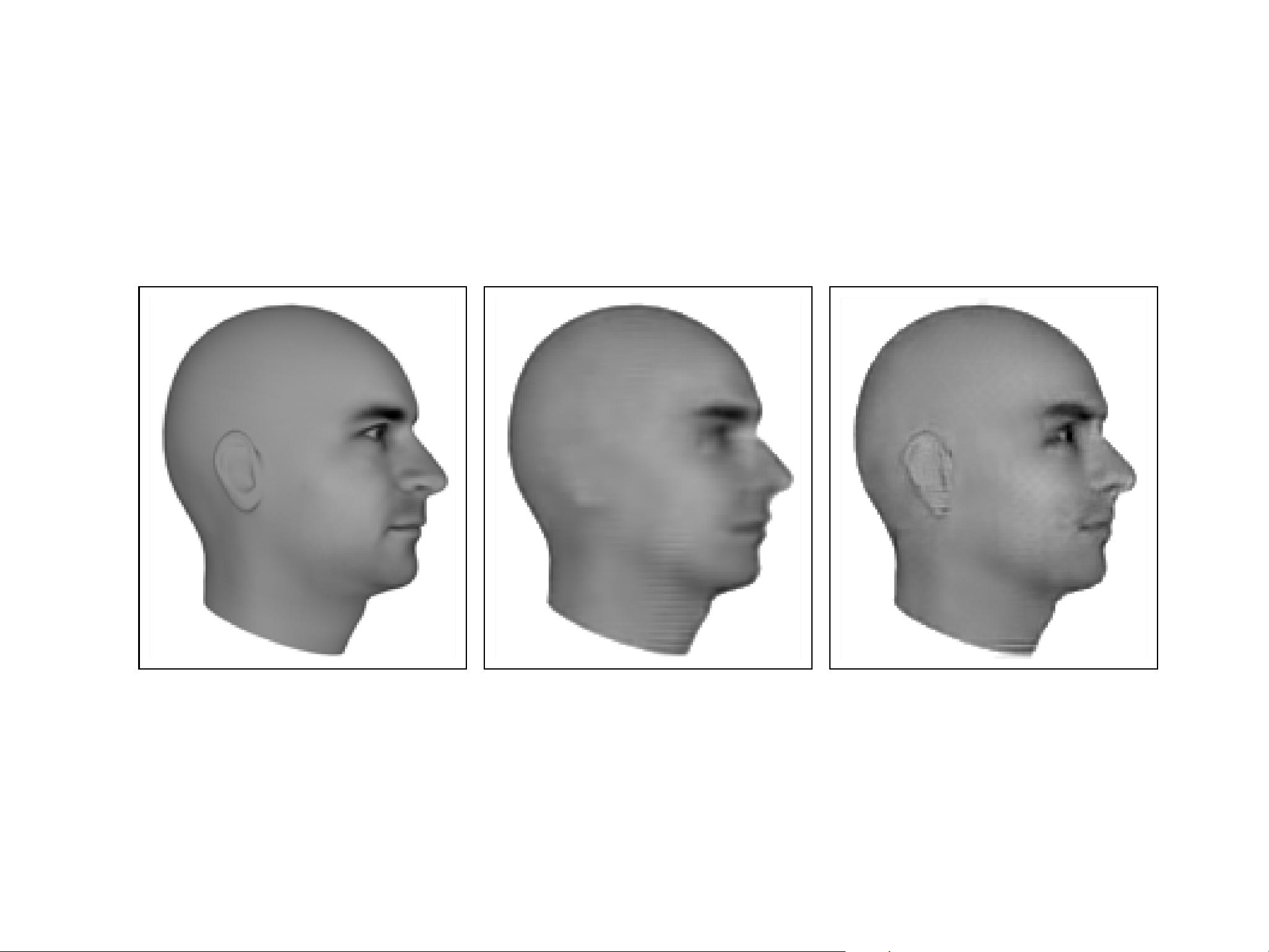

Figure 15.6: Predictive generative networks provide an example of the importance of learning which features are salient. In this example, the predictive generative network has been trained to predict the appearance of a 3-D model of a human head at a specific viewing angle. (Left) Ground truth. This is the correct image that the network should emit. (Center) Image produced by a predictive generative network trained with mean squared error alone. Because the ears do not cause an extreme difference in brightness compared to the neighboring skin, they were not sufficiently salient for the model to learn to represent them. (Right) Image produced by a model trained with a combination of mean squared error and adversarial loss. Using this learned cost function, the ears are salient because they follow a predictable pattern. Learning which underlying causes are important and relevant enough to model is an important active area of research. Figures graciously provided by Lotter et al. (2015).

…recognizable shape and consistent position means that a feedforward network can easily learn to detect them, making them highly salient under the generative adversarial framework. See figure 15.6 for example images. Generative adversarial networks are only one step toward determining which factors should be represented. We expect that future research will discover better ways of determining which factors to represent, and develop mechanisms for representing different factors depending on the task.
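The caption describes training with "a combination of mean squared error and adversarial loss," which can be sketched as a weighted sum. This is a minimal illustration under stated assumptions, not the loss used by Lotter et al. The adversarial term is assumed to be the common non-saturating generator loss, -log D(G(z)). The discriminator outputs `d_fake` are supplied directly as probabilities, and the weight `lam` is a hypothetical hyperparameter.

```python
import numpy as np

def mse_loss(prediction, target):
    """Pixel-wise mean squared error between a predicted and a ground-truth image."""
    return float(np.mean((prediction - target) ** 2))

def adversarial_loss(d_fake, eps=1e-12):
    """Assumed non-saturating generator loss: -mean(log D(G(z))).

    d_fake holds the discriminator's probabilities (in (0, 1)) that the
    generated images are real; eps guards against log(0).
    """
    return float(-np.mean(np.log(d_fake + eps)))

def combined_loss(prediction, target, d_fake, lam=0.01):
    """MSE plus a weighted adversarial term; lam is a hypothetical weight."""
    return mse_loss(prediction, target) + lam * adversarial_loss(d_fake)

# Toy usage with made-up values.
target = np.zeros((4, 4))
prediction = np.full((4, 4), 0.5)
d_fake = np.array([0.5, 0.5])
print(combined_loss(prediction, target, d_fake))
```

Raising `d_fake` toward 1 lowers the adversarial term, so the generator is rewarded for outputs that the discriminator judges to be real. Training the discriminator itself is outside the scope of this sketch.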

A benefit of learning the underlying causal factors, as pointed out by Schölkopf et al. (2012), is that if the true generative process has $\mathbf{x}$ as an effect and $\mathbf{y}$ as a cause, then modeling $p(\mathbf{x} \mid \mathbf{y})$ is robust to changes in $p(\mathbf{y})$. If the cause-effect relationship were reversed, this would not be true, since by Bayes' rule, $p(\mathbf{x} \mid \mathbf{y})$ would be sensitive to changes in $p(\mathbf{y})$. Very often, when we consider changes in distribution due to different domains, temporal non-stationarity, or changes in the nature of the task, the causal mechanisms remain invariant (the laws of the universe are constant) while the marginal distribution over the underlying causes can change. Hence, better generalization and robustness to all kinds of changes can …


(Lotter et al. 2016)
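The Bayes'-rule argument about causal factors can be checked numerically on a toy discrete example. The mechanism table `P_X_GIVEN_Y` and the two cause marginals are hypothetical numbers chosen for illustration, with $\mathbf{y}$ as the cause and $\mathbf{x}$ as the effect. Only $p(\mathbf{y})$ differs between the two domains. The conditional in the causal direction, recovered from the joint, stays fixed, while the conditional in the anticausal direction, obtained by Bayes' rule, shifts.

```python
import numpy as np

# Hypothetical causal mechanism p(x | y): rows index the cause y, columns the effect x.
P_X_GIVEN_Y = np.array([[0.9, 0.1],
                        [0.2, 0.8]])

def joint(p_y):
    """Joint p(x, y), laid out as [y, x], built from the cause marginal and the mechanism."""
    return p_y[:, None] * P_X_GIVEN_Y

def x_given_y(p_xy):
    """Causal-direction conditional p(x | y) recovered from the joint."""
    return p_xy / p_xy.sum(axis=1, keepdims=True)

def y_given_x(p_xy):
    """Anticausal conditional p(y | x) via Bayes' rule: p(x, y) / p(x)."""
    return p_xy / p_xy.sum(axis=0, keepdims=True)

p_y_before = np.array([0.5, 0.5])  # domain A
p_y_after = np.array([0.9, 0.1])   # domain B: only the cause marginal shifts

for name, p_y in [("A", p_y_before), ("B", p_y_after)]:
    p_xy = joint(p_y)
    print(name, "p(x|y) =", x_given_y(p_xy).round(3).tolist())
    print(name, "p(y|x) =", y_given_x(p_xy).round(3).tolist())
```

In domain A, $p(y{=}0 \mid x{=}0) = 0.45/0.55 \approx 0.818$. In domain B it becomes $0.81/0.83 \approx 0.976$, even though the mechanism $p(\mathbf{x} \mid \mathbf{y})$ never changed.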
