New Frontiers in Imitation Learning

Yisong Yue

Behavioral Modeling

12.4. TAXI DRIVER ROUTE PREFERENCE DATA

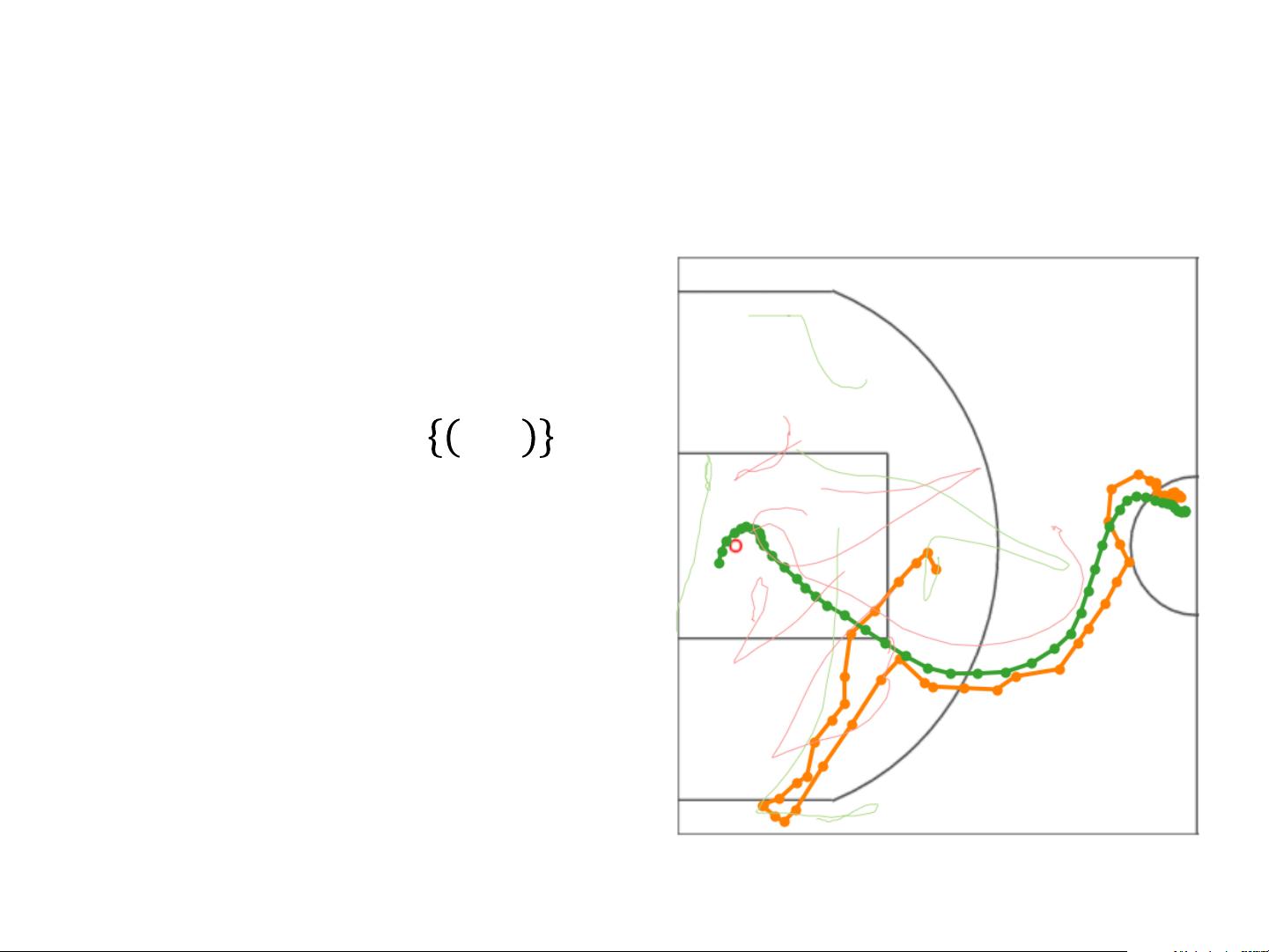

Figure 12.2: The collected GPS datapoints

12.4.3 Fitting to the Road Network and Segmenting

To address noise in the GPS data, we fit it to the road network using a particle filter (Thrun et al., 2005). A particle filter simulates a large number of vehicles traversing the road network, focusing its attention on particles that best match the GPS readings. A motion model is employed to simulate the movement of the vehicle and an observation model is employed to express the relationship between the true location of the vehicle and the GPS reading of the vehicle. We use a motion model based on the empirical distribution of changes in speed and a Laplace distribution for our observation model.
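The filtering loop described above can be sketched as follows. This is a minimal illustration, not the implementation used for the taxi data: it assumes the road network is collapsed to a single one-dimensional road, and the function name `particle_filter`, the particle count, the time step `dt`, and the Laplace scale `scale` are all hypothetical choices made for the example.

```python
import numpy as np

def particle_filter(gps, speed_deltas, n_particles=500, dt=1.0, scale=5.0, seed=0):
    """Track position along a single 1-D road from noisy GPS readings.

    gps          : observed positions along the road, one per time step (metres)
    speed_deltas : empirical sample of speed changes between steps (m/s)
    scale        : assumed Laplace scale b of the observation noise (metres)
    """
    rng = np.random.default_rng(seed)
    gps = np.asarray(gps, dtype=float)
    # Initialise particles around the first reading, all at zero speed.
    pos = gps[0] + rng.laplace(0.0, scale, n_particles)
    speed = np.zeros(n_particles)
    estimates = []
    for z in gps:
        # Observation model: Laplace likelihood, p(z | pos) proportional to exp(-|z - pos| / b).
        log_w = -np.abs(z - pos) / scale
        w = np.exp(log_w - log_w.max())
        w /= w.sum()
        estimates.append(float(np.sum(w * pos)))
        # Resampling focuses the particle set on hypotheses that match the reading.
        idx = rng.choice(n_particles, size=n_particles, p=w)
        pos, speed = pos[idx], speed[idx]
        # Motion model: draw each speed change from the empirical distribution.
        speed = np.maximum(speed + rng.choice(speed_deltas, n_particles), 0.0)
        pos = pos + speed * dt
    return np.array(estimates)

# Usage on simulated data (all values below are made up for illustration).
rng = np.random.default_rng(1)
true_pos = 10.0 * np.arange(60)                   # vehicle moving at 10 m/s
readings = true_pos + rng.laplace(0.0, 5.0, 60)   # simulated GPS noise
deltas = rng.normal(0.0, 2.0, 1000)               # stand-in for empirical speed changes
fitted = particle_filter(readings, deltas)
```

On a real road network, each particle would also carry its current road segment, and the motion step would choose among outgoing segments at intersections.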

Once fitted to the road network, we segmented our GPS traces into distinct trips. Our segmentation is based on time thresholds. Position readings with a small velocity for a period of time are considered to be at the end of one trip and the beginning of a new trip. We note that this problem is particularly difficult for taxi driver data, because these drivers may often stop only long enough to let out a passenger and this can be difficult to distinguish from stopping at a long stoplight. To address some of the potential noise, we discard trips that are too short, too noisy, or too cyclic.
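A minimal sketch of threshold-based segmentation, under assumed values: the speed threshold, the minimum stop duration, and the minimum trip length below are hypothetical, and the function name `segment_trips` is introduced only for this example. It implements only the "too short" filter; the noise and cyclicity filters are not shown.

```python
def segment_trips(times, speeds, speed_thresh=1.0, min_stop=120.0, min_trip_len=5):
    """Split a trace into trips at stops lasting at least `min_stop` seconds.

    times  : timestamps in seconds, increasing
    speeds : speed at each timestamp (m/s)
    Returns a list of (start_index, end_index) pairs, end exclusive.
    """
    trips = []
    trip_start = None   # index where the current trip began
    stop_start = None   # index where the current low-speed run began
    for i, (t, v) in enumerate(zip(times, speeds)):
        if v >= speed_thresh:
            if trip_start is None:
                trip_start = i
            stop_start = None
        else:
            if stop_start is None:
                stop_start = i
            # A low-speed run that lasts long enough ends the current trip.
            if trip_start is not None and t - times[stop_start] >= min_stop:
                trips.append((trip_start, stop_start))
                trip_start = None
    if trip_start is not None:
        trips.append((trip_start, len(times)))
    # Discard trips with too few readings.
    return [(a, b) for a, b in trips if b - a >= min_trip_len]

# Readings every 10 s: drive, long stop (150 s), drive, brief stop (30 s), drive.
speeds = [10] * 10 + [0] * 15 + [10] * 10 + [0] * 3 + [10] * 7
times = [10 * i for i in range(len(speeds))]
trips = segment_trips(times, speeds)
```

In this toy trace the brief 30-second stop does not split the second trip, which is exactly the passenger drop-off versus stoplight ambiguity noted above: any single threshold will misclassify some stops.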

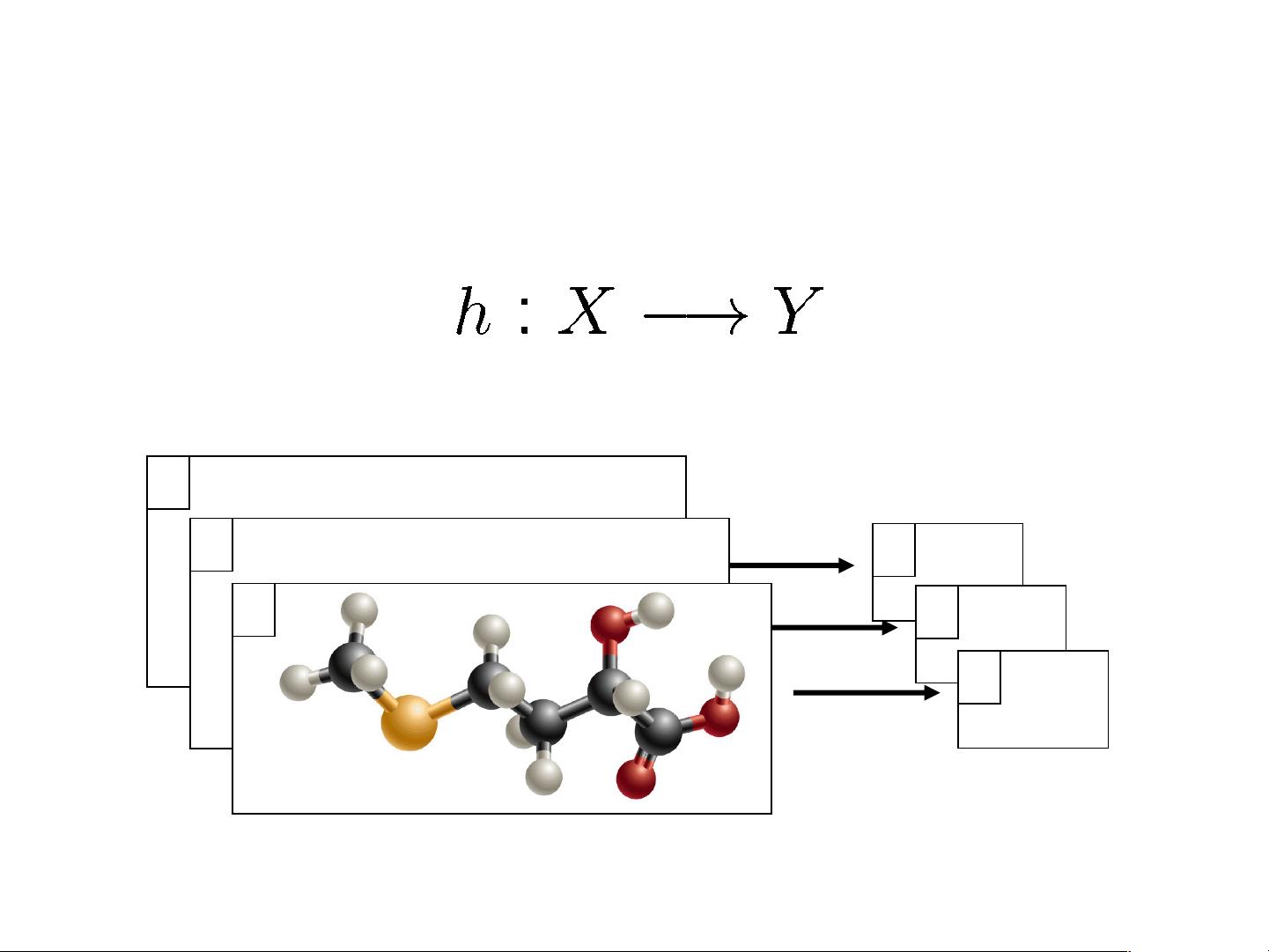

Warm Up: Supervised Learning

• Find function from input space X to output space Y such that the prediction error is low.

• Example: x = "Microsoft announced today that they acquired Apple for the amount equal to the gross national product of Switzerland. Microsoft officials stated that they first wanted to buy Switzerland, but eventually were turned off by the mountains and the snowy winters…", y = 1

• Example: x = GATACAACCTATCCCCGTATATATATTCTATGGGTATAGTATTAAATCAATACAACCTATCCCCGTATATATATTCTATGGGTATAGTATTAAATCAATACAACCTATCCCCGTATATATATTCTATGGGTATAGTATTAAATCAGATACAACCTATCCCCGTATATATATTCTATGGGTATAGTATTAAATCACATTTA, y = -1

• Example: x = (input shown in the figure), y = 7.3
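As a concrete instance of the supervised learning setup, the sketch below fits a linear function to real-valued targets by least squares, so "prediction error" here means mean squared error. The data is synthetic, and the function names `fit_linear` and `predict` and the weights `true_w` are assumptions made for the example.

```python
import numpy as np

def fit_linear(X, y):
    """Return weights w minimising the squared error of h(x) = [x, 1] @ w."""
    X1 = np.column_stack([X, np.ones(len(X))])   # append a bias feature
    w, *_ = np.linalg.lstsq(X1, y, rcond=None)
    return w

def predict(w, X):
    """Apply the learned function h to each row of X."""
    return np.column_stack([X, np.ones(len(X))]) @ w

# Synthetic training set: inputs in R^3, real-valued outputs with small noise.
rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(200, 3))
true_w = np.array([2.0, -1.0, 0.5, 7.3])         # hypothetical ground truth
y = np.column_stack([X, np.ones(200)]) @ true_w + rng.normal(0, 0.1, 200)

w = fit_linear(X, y)
mse = float(np.mean((predict(w, X) - y) ** 2))   # training prediction error
```

For classification targets such as y = 1 or y = -1, the same template applies with a different loss and function class.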

Imitation Learning

• Input:

– Sequence of contexts/states: s

• Predict:

– Sequence of actions: a

• Learn Using:

– Sequences of demonstrated actions

• Notation: h (policy), s (state), a (action)

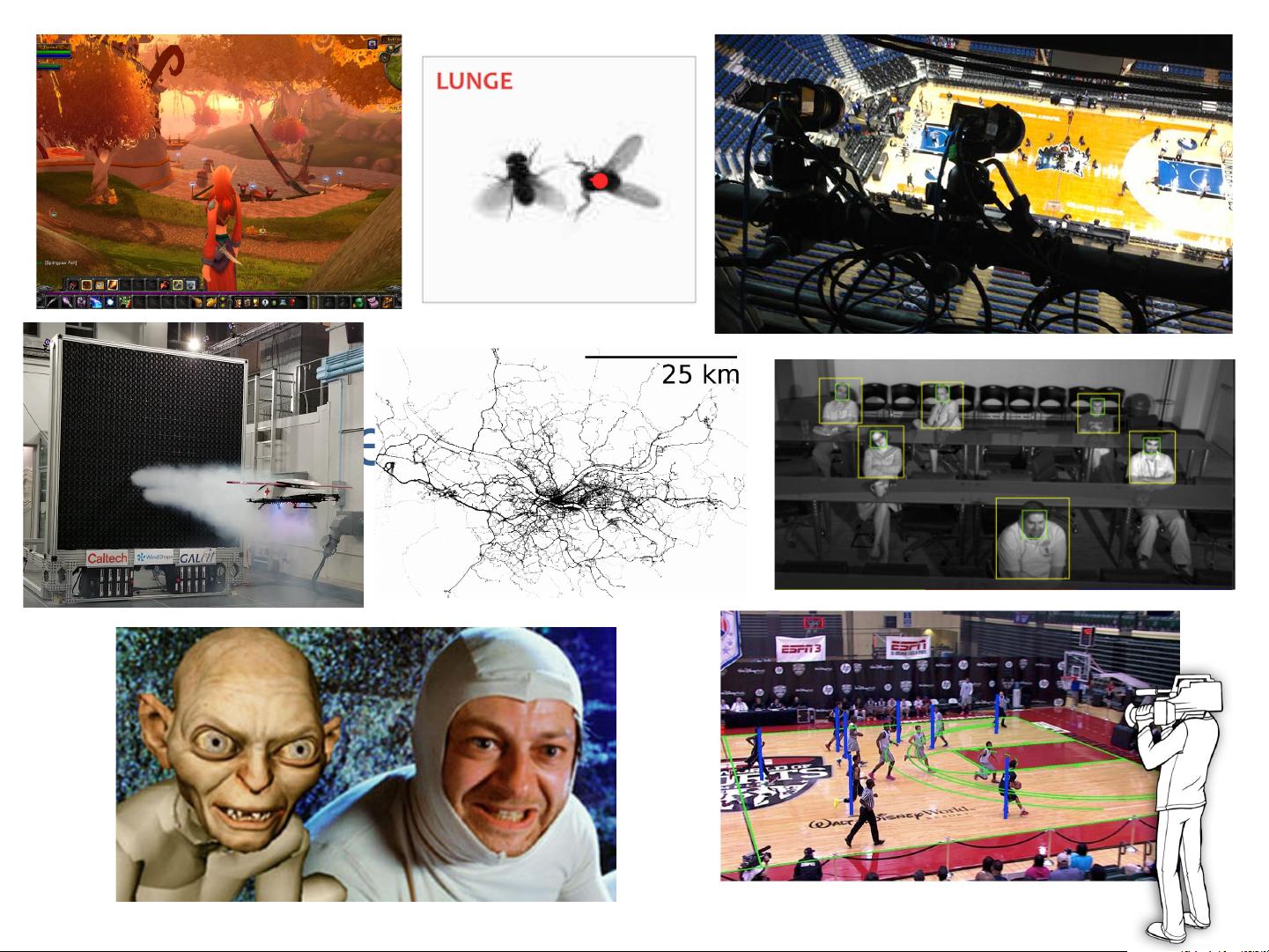

Example: Basketball Player Trajectories

• $s$ = location of players & ball

• $a$ = next location of player

• Training set: $D = \{(\vec{s}, \vec{a})\}$

– $\vec{s}$ = sequence of $s$

– $\vec{a}$ = sequence of $a$

• Goal: learn $h(s) \to a$
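One simple way to learn h from such a training set is behavioral cloning: pool every (state, action) pair from the demonstrated sequences and fit h by regression. The sketch below does this with a linear policy on made-up two-dimensional trajectories. The demonstrations, the `goal` location, and the function names `fit_policy` and `rollout` are hypothetical, and this single-scale policy is an illustrative baseline rather than any specific published model.

```python
import numpy as np

def fit_policy(demos):
    """Behavioral cloning: regress actions on states over all time steps.

    demos : list of (states, actions) pairs, each an array of shape (T, d)
    Returns W of shape (d + 1, d) so that h(s) = [s, 1] @ W.
    """
    S = np.vstack([s for s, _ in demos])
    A = np.vstack([a for _, a in demos])
    S1 = np.column_stack([S, np.ones(len(S))])   # append a bias feature
    W, *_ = np.linalg.lstsq(S1, A, rcond=None)
    return W

def rollout(W, s0, steps):
    """Generate a trajectory by feeding each predicted location back in as the next state."""
    traj = [np.asarray(s0, dtype=float)]
    for _ in range(steps):
        traj.append(np.append(traj[-1], 1.0) @ W)
    return np.array(traj)

# Hypothetical demonstrations: a player moves 20% of the way to a fixed spot each step.
goal = np.array([5.0, 25.0])
rng = np.random.default_rng(0)
demos = []
for _ in range(20):
    s = rng.uniform(0, 50, size=2)
    states, actions = [], []
    for _ in range(30):
        a = s + 0.2 * (goal - s)   # demonstrated action = next location
        states.append(s)
        actions.append(a)
        s = a
    demos.append((np.array(states), np.array(actions)))

W = fit_policy(demos)
traj = rollout(W, s0=[40.0, 10.0], steps=40)
```

Because the rollout feeds the policy's own predictions back in as states, small prediction errors can compound over long horizons. That effect does not show up in this noise-free toy data.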

Generating Long-term Trajectories Using Deep Hierarchical Networks

Stephan Zheng

Caltech

stzheng@caltech.edu

Yisong Yue

Caltech

yyue@caltech.edu

Patrick Lucey

STATS

plucey@stats.com

Abstract

We study the problem of modeling spatiotemporal trajectories over long time horizons using expert demonstrations. For instance, in sports, agents often choose action sequences with long-term goals in mind, such as achieving a certain strategic position. Conventional policy learning approaches, such as those based on Markov decision processes, generally fail at learning cohesive long-term behavior in such high-dimensional state spaces, and are only effective when fairly myopic decision-making yields the desired behavior. The key difficulty is that conventional models are “single-scale” and only learn a single state-action policy. We instead propose a hierarchical policy class that automatically reasons about both long-term and short-term goals, which we instantiate as a hierarchical neural network. We showcase our approach in a case study on learning to imitate demonstrated basketball trajectories, and show that it generates significantly more realistic trajectories compared to non-hierarchical baselines as judged by professional sports analysts.

1 Introduction

Figure 1: The player (green) has two macro-goals: 1) pass the ball (orange) and 2) move to the basket.

Modeling long-term behavior is a key challenge in many learning problems that require complex decision-making. Consider a sports player determining a movement trajectory to achieve a certain strategic position. The space of such trajectories is prohibitively large, and precludes conventional approaches, such as those based on simple Markovian dynamics.

Many decision problems can be naturally modeled as requiring high-level, long-term macro-goals, which span time horizons much longer than the timescale of low-level micro-actions (cf. He et al. [8], Hausknecht and Stone [7]). A natural example for such macro-micro behavior occurs in spatiotemporal games, such as basketball where players execute complex trajectories. The micro-actions of each agent are to move around the court and, if they have the ball, dribble, pass or shoot the ball. These micro-actions operate at the centisecond scale, whereas their macro-goals, such as "maneuver behind these 2 defenders towards the basket", span multiple seconds. Figure 1 depicts an example from a professional basketball game, where the player must make a sequence of movements (micro-actions) in order to reach a specific location on the basketball court (macro-goal).

Intuitively, agents need to trade off between short-term and long-term behavior: often sequences of individually reasonable micro-actions do not form a cohesive trajectory towards a macro-goal. For instance, in Figure 1 the player (green) takes a highly non-linear trajectory towards his macro-goal of positioning near the basket. As such, conventional approaches are not well suited for these settings, as they generally use a single (low-level) state-action policy, which is only successful when myopic or short-term decision-making leads to the desired behavior.

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.