Introduction to Boosted Trees

TexPoint fonts used in EMF.

Read the TexPoint manual before you delete this box.: AAA

Tianqi Chen

Oct. 22 2014

Outline

• Review of key concepts of supervised learning

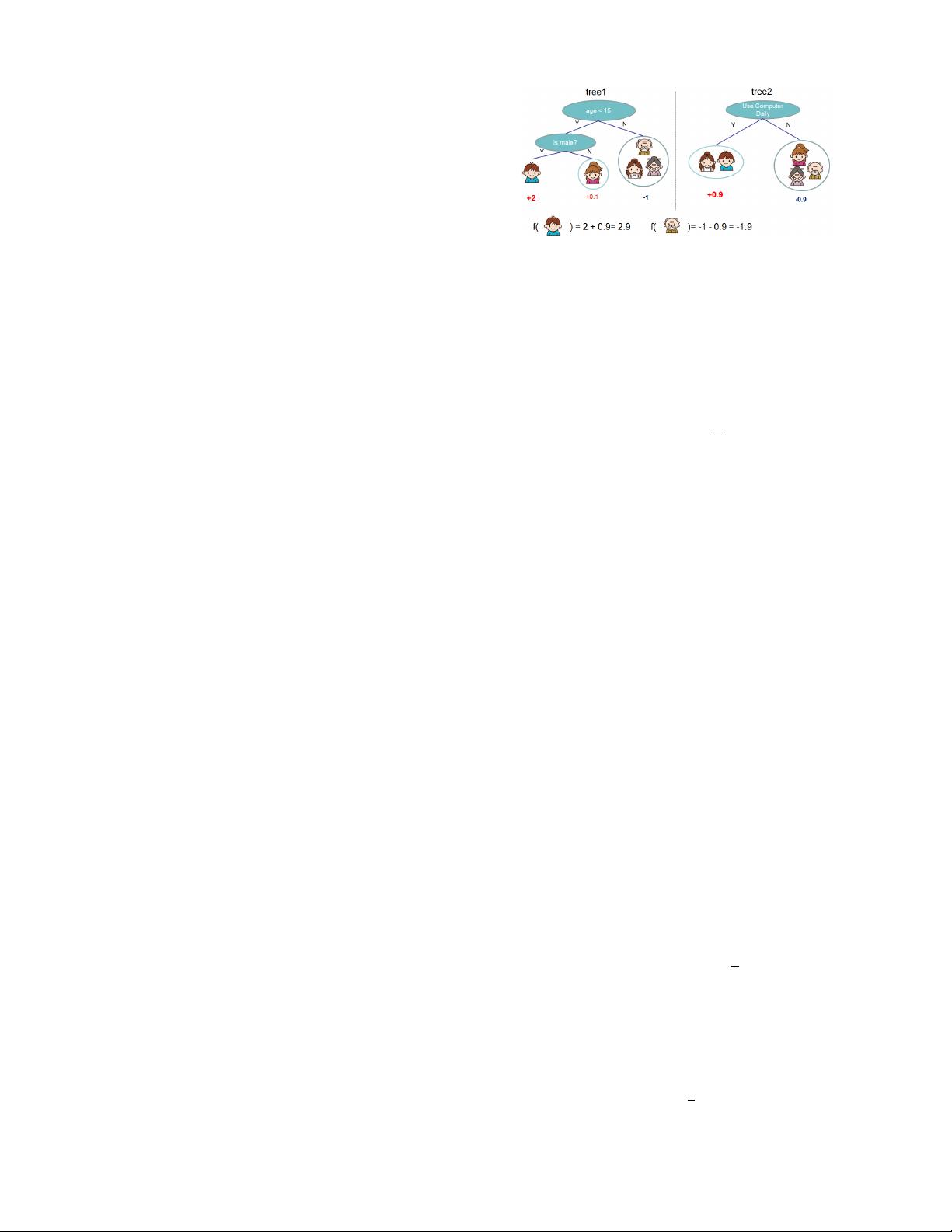

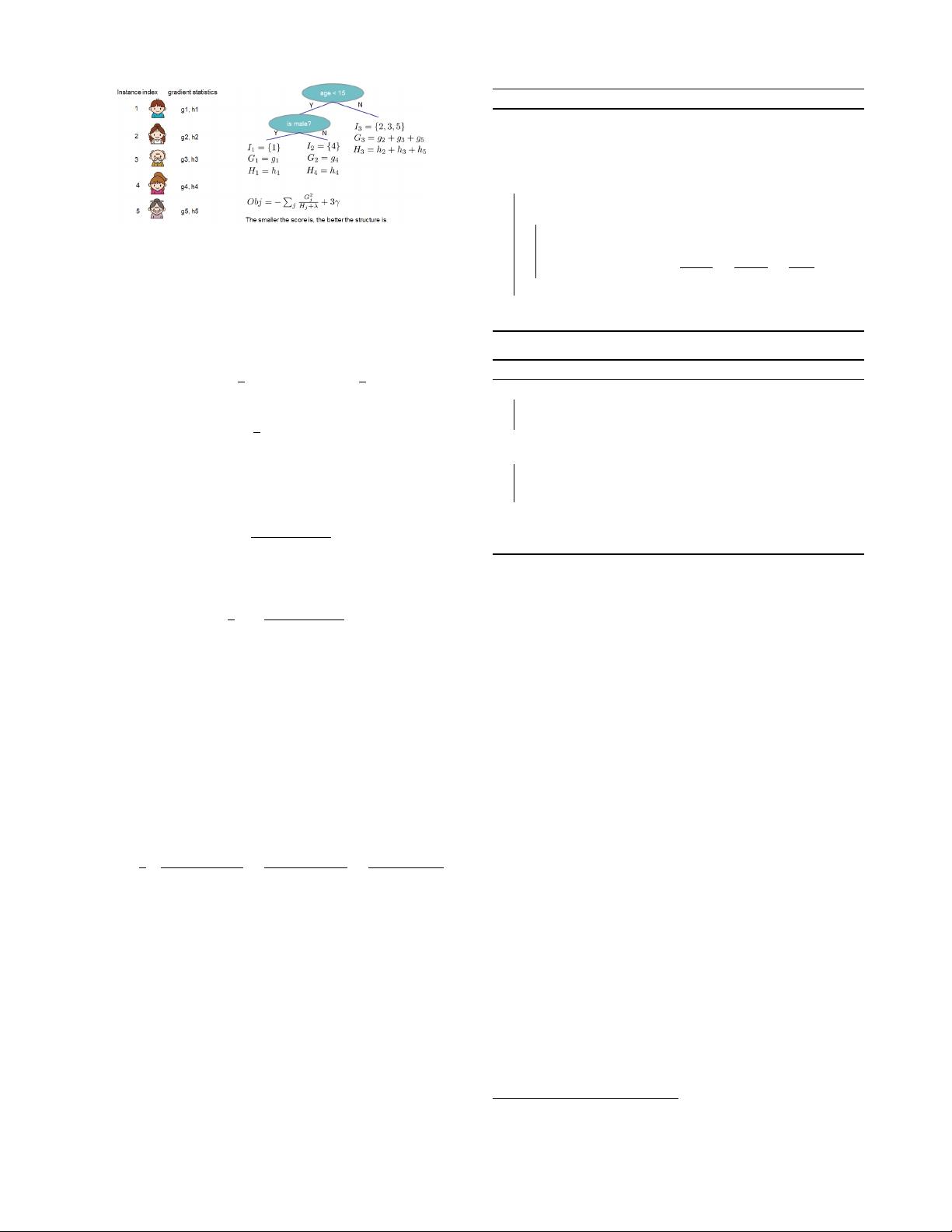

• Regression Tree and Ensemble (What are we Learning)

• Gradient Boosting (How do we Learn)

• Summary

Elements in Supervised Learning

• Notations: i-th training example

• Model: how to make prediction given

Linear model: (include linear/logistic regression)

The prediction score can have different interpretations

depending on the task

Linear regression: is the predicted score

Logistic regression: is predicted the probability

of the instance being positive

Others… for example in ranking can be the rank score

• Parameters: the things we need to learn from data

Linear model:

Elements continued: Objective Function

• Objective function that is everywhere

• Loss on training data:

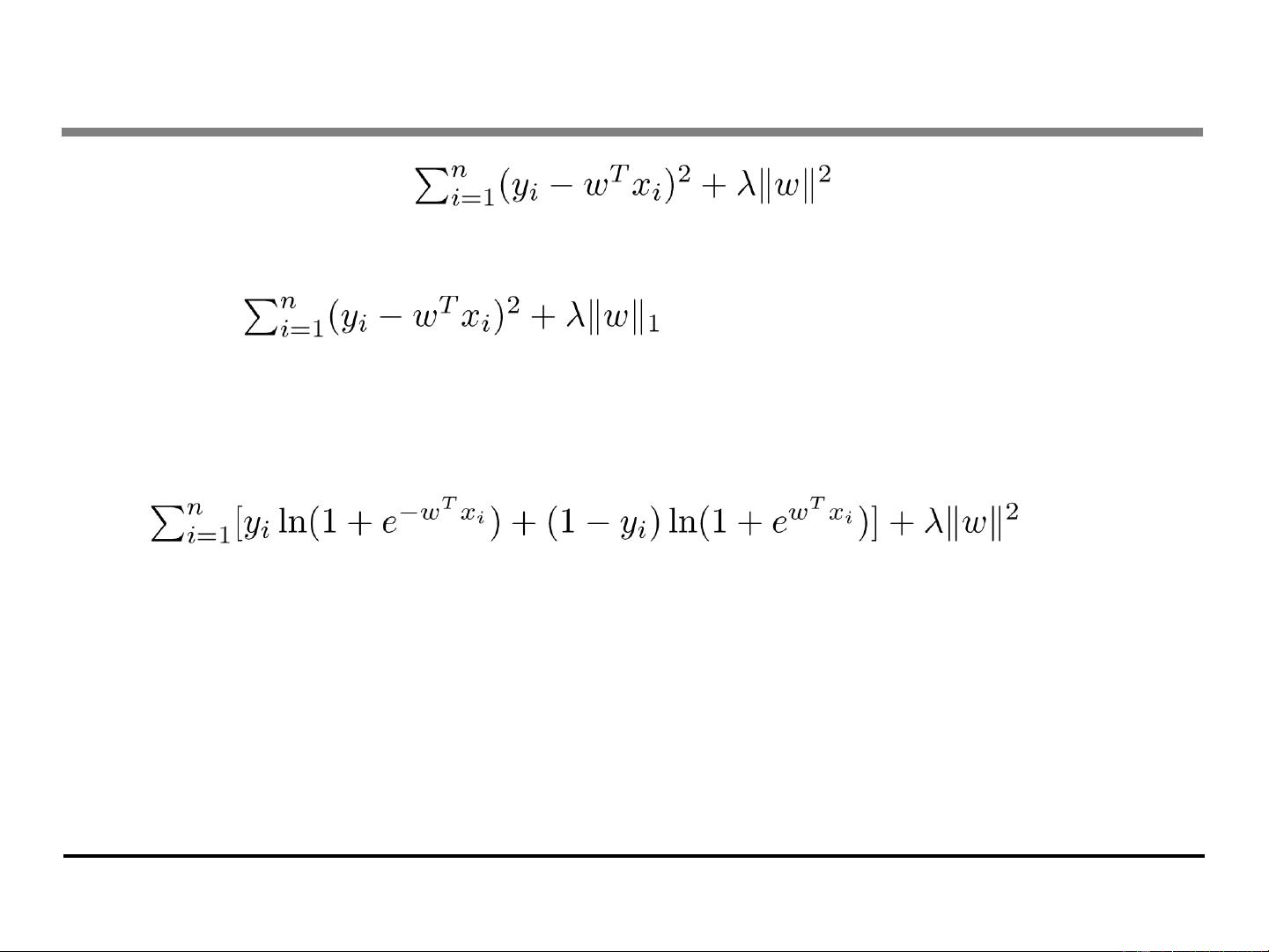

Square loss:

Logistic loss:

• Regularization: how complicated the model is?

L2 norm:

L1 norm (lasso):

Training Loss measures how

well model fit on training data

Regularization, measures

complexity of model

Putting known knowledge into context

• Ridge regression:

Linear model, square loss, L2 regularization

• Lasso:

Linear model, square loss, L1 regularization

• Logistic regression:

Linear model, logistic loss, L2 regularization

• The conceptual separation between model, parameter,

objective also gives you engineering benefits.

Think of how you can implement SGD for both ridge regression

and logistic regression

- 1

- 2

前往页