Structure Inference Net: Object Detection Using Scene-Level Context and

Instance-Level Relationships

Yong Liu

1,2

, Ruiping Wang

1,2,3

, Shiguang Shan

1,2,3

, Xilin Chen

1,2,3

1

Key Laboratory of Intelligent Information Processing of Chinese Academy of Sciences (CAS),

Institute of Computing Technology, CAS, Beijing, 100190, China

2

University of Chinese Academy of Sciences, Beijing, 100049, China

3

Cooperative Medianet Innovation Center, China

yong.liu@vipl.ict.ac.cn, {wangruiping, sgshan, xlchen}@ict.ac.cn

Abstract

Context is important for accurate visual recognition. In

this work we propose an object detection algorithm that not

only considers object visual appearance, but also makes use

of two kinds of context including scene contextual informa-

tion and object relationships within a single image. There-

fore, object detection is regarded as both a cognition prob-

lem and a reasoning problem when leveraging these struc-

tured information. Specifically, this paper formulates object

detection as a problem of graph structure inference, where

given an image the objects are treated as nodes in a graph

and relationships between the objects are modeled as edges

in such graph. To this end, we present a so-called Struc-

ture Inference Network (SIN), a detector that incorporates

into a typical detection framework (e.g. Faster R-CNN) with

a graphical model which aims to infer object state. Com-

prehensive experiments on PASCAL VOC and MS COCO

datasets indicate that scene context and object relationships

truly improve the performance of object detection with more

desirable and reasonable outputs.

1. Introduction

Object detection is one of the fundamental computer vi-

sion problems. Recently, this topic has enjoyed a series of

breakthroughs thanks to the advances of deep learning, and

it is observed that prevalent object detectors predominantly

regard detection as a problem of classifying candidate boxes

[

16, 15, 33, 24, 7]. While most of them have achieved im-

pressive performance in a number of detection benchmarks,

they only focus on local information near an object’s region

of interest within the image. Usually an image contains rich

contextual information including scene context and object

relationships [

10]. Ignoring these information inevitably

places constraints on the accuracy of objects detected [

3].

To illustrate such constraints, considering the practical

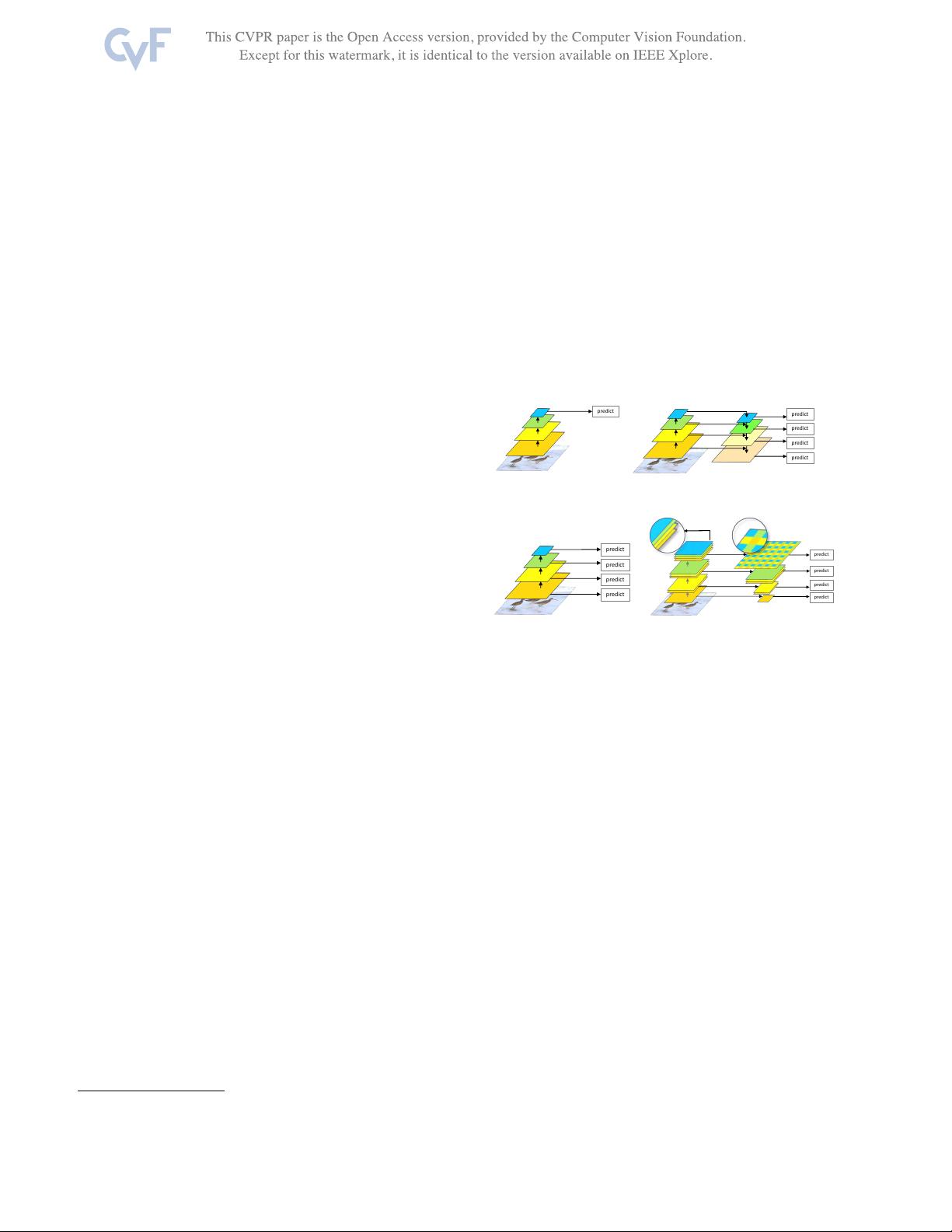

(a) (b)

Figure 1. Some Typical Detection Errors of Faster R-CNN. (a)

Some boats are mislabeled as cars on PASCAL VOC [

12]. (b) The

mouse is undetected on MS COCO [26].

examples in Fig.

1, detected by Faster R-CNN [33]. In

the first case where is a river field, some of the boats are

mislabeled as cars, since the detector only concentrates on

object’s visual appearance. If the scene information in this

image was taken into account, such banana skin could have

been easily avoided. In the second case, though a laptop and

person have been detected as expected, no further object is

found any more. It is quite common that mouse and laptop

usually co-occur within a single image. If using object rela-

tive position and co-occurrence pattern, more objects within

the given image could be detected.

Many empirical studies [

10, 14, 19, 41, 30, 29, 36] have

suggested that recognition algorithms can be improved by

proper modeling of context. To handle the problem above,

two types of contextual information model have been ex-

plored for detection [

4]. The first type incorporates con-

text around object or scene-level context [

3, 43, 37], and the

second models object-object relationships at instance-level

[

18, 4, 30]. While these two types of models capture com-

plementary contextual information, they can be combined

together to jointly help detection.

We are thus motivated to intuitively conjecture that vi-

sual concepts in most of natural images form an organism

with the key components of scene, objects and relation-

ships, and different objects in the scene are organized in a

6985