Reinforcement Learning Algorithms in Markov

Decision Processes

AAAI-10 Tutorial

Part III: Learning to control

Csaba Szepesv

´

ari Richard S. Sutton

University of Alberta

E-mails: {szepesva,rsutton}@.ualberta.ca

Atlanta, July 11, 2010

Szepesv

´

ari & Sutton (UofA) RL Algorithms July 11, 2010 1 / 39

Contributions

! !"# !"$ %

&'()*+

!

%,

-.*/0/)1)(2

34+5)(2

67+'()*+5

80.94(

:*1)'2;<)(=;

.4'*9+)>4.

?4=0@)*.;:*1)'2

80.94(;

:*1)'2;<A*

;.4'*9+)>4.

Off-policy learning with options and recognizers

Doina Precup, Richard S. Sutton, Cosmin Paduraru, Anna J. Koop, Satinder Singh

McGill University, University of Alberta, University of Michigan

Options

Distinguished

region

Ideas and Motivation Background Recognizers

Off-policy algorithm for options

Learning w/o the Behavior Policy

Wall

Options

• A way of behaving for a period of time

Models of options

• A predictive model of the outcome of following the option

• What state will you be in?

• Will you still control the ball?

• What will be the value of some feature?

• Will your teammate receive the pass?

• What will be the expected total reward along the way?

• How long can you keep control of the ball?

Dribble Keepaway Pass

Options for soccer players could be

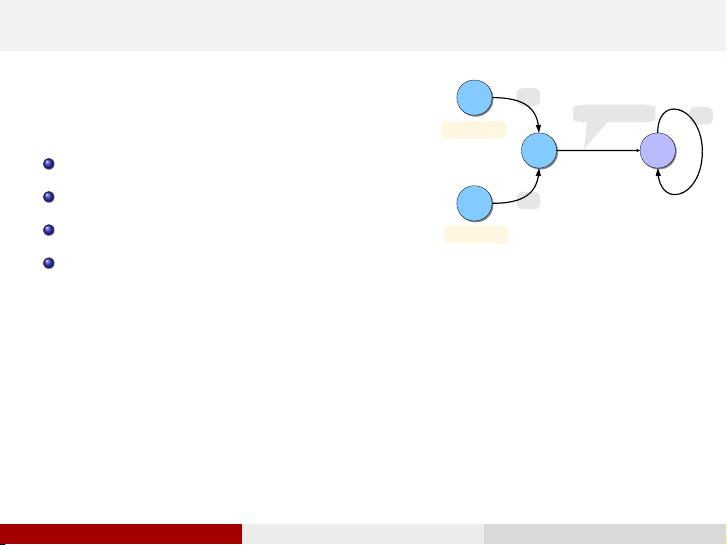

Options in a 2D world

The red and blue options

are mostly executed.

Surely we should be able

to learn about them from

this experience!

Experienced

trajectory

Off-policy learning

• Learning about one policy while behaving according to another

• Needed for RL w/exploration (as in Q-learning)

• Needed for learning abstract models of dynamical systems

(representing world knowledge)

• Enables efficient exploration

• Enables learning about many ways of behaving at the same time

(learning models of options)

! a policy

! a stopping condition

Non-sequential example

Problem formulation w/o recognizers

Problem formulation with recognizers

• One state

• Continuous action a ∈ [0, 1]

• Outcomes z

i

= a

i

• Given samples from policy b : [0, 1] → #

+

• Would like to estimate the mean outcome for a sub-region of the

action space, here a ∈ [0.7, 0.9]

Target policy π : [0, 1] → "

+

is uniform within the region of interest

(see dashed line in figure below). The estimator is:

ˆm

π

=

1

n

n

X

i=1

π(a

i

)

b(a

i

)

z

i

.

Theorem 1 Let A = {a

1

, . . . a

k

} ⊆ A be a subset of all the

possible actions. Consider a fixed behavior policy b and let π

A

be

the class of policies that only choose actions from A, i.e., if

π(a) > 0 then a ∈ A. Then the policy induced by b and the binary

recognizer c

A

is the policy with minimum-variance one-step

importance sampling corrections, among those in π

A

:

π as given by (1) = arg min

p∈π

A

E

b

"

„

π(a

i

)

b(a

i

)

«

2

#

(2)

Proof: Using Lagrange multipliers

Theorem 2 Consider two binary recognizers c

1

and c

2

, such that

µ

1

> µ

2

. Then the importance sampling corrections for c

1

have

lower variance than the impor tance sampling corrections for c

2

.

Off-policy learning

Let the importance sampling ratio at time step t be:

ρ

t

=

π(s

t

, a

t

)

b(s

t

, a

t

)

The truncated n-step return, R

(n)

t

, satisfies:

R

(n)

t

= ρ

t

[r

t+1

+ (1 − β

t+1

)R

(n−1)

t+1

].

The update to the parameter vector is propor tional to:

∆θ

t

=

h

R

λ

t

− y

t

i

∇

θ

y

t

ρ

0

(1 − β

1

) · · · ρ

t−1

(1 − β

t

).

Theorem 3 For every time step t ≥ 0 and any initial state s,

E

b

[∆θ

t

|s] = E

π

[∆

¯

θ

t

|s].

Proof: By induction on n we show that

E

b

{R

(n)

t

|s} = E

π

{

¯

R

(n)

t

|s}

which implies that E

b

{R

λ

t

|s} = E

π

(

¯

R

λ

t

|s}. The rest of the proof is

algebraic manipulations (see paper).

Implementation of off-policy learning for options

In order to avoid ∆θ → 0, we use a restart function g : S → [0, 1]

(like in the PSD algorithm). The forward algorithm becomes:

∆θ

t

= (R

λ

t

− y

t

)∇

θ

y

t

t

X

i=0

g

i

ρ

i

...ρ

t−1

(1 − β

i+1

)...(1 − β

t

),

where g

t

is the extent of restar ting in state s

t

.

The incremental learning algorithm is the following:

• Initialize κ

0

= g

0

, e

0

= κ

0

∇

θ

y

0

• At every time step t:

δ

t

= ρ

t

(r

t+1

+ (1 − β

t+1

)y

t+1

) − y

t

θ

t+1

= θ

t

+ αδ

t

e

t

κ

t+1

= ρ

t

κ

t

(1 − β

t+1

) + g

t+1

e

t+1

= λρ

t

(1 − β

t+1

)e

t

+ κ

t+1

∇

θ

y

t+1

References

Off-policy learning is tricky

• The Bermuda triangle

! Temporal-difference learning

! Function approximation (e.g., linear)

! Off-policy

• Leads to divergence of iterative algorithms

! Q-learning diverges with linear FA

! Dynamic programming diverges with linear FA

Baird's Counterexample

V

k

(s) =

!(7)+2!(1)

terminal

state

99%

1%

100%

V

k

(s) =

!(7)+2!(2)

V

k

(s) =

!(7)+2!(3)

V

k

(s) =

!(7)+2!(4)

V

k

(s) =

!(7)+2!(5)

V

k

(s) =

2!(7)+!(6)

0

5

10

0 1000 2000 3000 4000 5000

10

10

/ -10

Iterations (k)

5

10

10

10

0

10

-

-

Parameter

values, !

k

(i)

(log scale,

broken at !1)

!

k

(7)

!

k

(1) –

!

k

(5)

!

k

(6)

Precup, Sutton & Dasgupta (PSD) algorithm

• Uses importance sampling to convert off-policy case to on-policy case

• Convergence assured by theorem of Tsitsiklis & Van Roy (1997)

• Survives the Bermuda triangle!

BUT!

• Variance can be high, even infinite (slow learning)

• Difficult to use with continuous or large action spaces

• Requires explicit representation of behavior policy (probability distribution)

Option formalism

An option is defined as a triple o = !I , π, β"

• I ⊆ S is the set of states in which the option can be initiated

• π is the internal policy of the option

• β : S → [0, 1] is a stochastic ter mination condition

We want to compute the

reward model of option o:

E

o

{R(s)} = E {r

1

+ r

2

+ . . . + r

T

|s

0

= s, π, β}

We assume that

linear function approximation is used to represent

the model:

E

o

{R(s)} ≈ θ

T

φ

s

= y

Baird, L. C. (1995). Residual algorithms: Reinforcement learning with function

approximation. In Proceedings of ICML.

Precup, D., Sutton, R. S. and Dasgupta, S. (2001). Off-policy temporal-difference

learning with function approximation. In Proceedings of ICML.

Sutton, R.S., Precup D. and Singh, S (1999). Between MDPs and semi-MDPs: A

framework for temporal abstraction in reinforcement learning. Artificial

Intelligence, vol . 112, pp. 181–211.

Sutton,, R.S. and Tanner, B. (2005). Temporal-difference networks. In Proceedings

of NIPS-17.

Sutton R.S., Rafols E. and Koop, A. (2006). Temporal abstraction in

temporal-difference networks”. In Proceedings of NIPS-18.

Tadic,V. (2001). On the convergence of temporal-difference learning with linear

function approximation. In Machine learning vol. 42.

Tsitsiklis, J. N., and Van Roy, B. (1997). An analysis of temporal-difference learning

with function approximation. IEEE Transactionson Automatic Control 42.

Acknowledgements

Theorem 4 If the following assumptions hold:

• The function approximator used to represent the model is a

state aggregrator

• The recognizer behaves consistently with the function

approximator, i.e., c(s, a) = c(p, a), ∀s ∈ p

• The recognition probability for each par tition, ˆµ(p) is estimated

using maximum likelihood:

ˆµ(p) =

N(p, c = 1)

N(p)

Then there exists a polic y ˆπ such that the off-policy learning

algorithm converges to the same model as the on-policy algorithm

using ˆπ.

Proof: In the limit, w.p.1, ˆµ converges to

P

s

d

b

(s|p)

P

a

c(p, a)b(s, a) where d

b

(s|p) is the probability of

visiting state s from partition p under the stationary distribution of b.

Let ˆπ be defined to be the same for all states in a partition p:

ˆπ (p, a) = ˆρ(p, a)

X

s

d

b

(s|p)b(s, a)

ˆπ is well-defined, in the sense that

P

a

ˆπ (s, a) = 1. Using Theorem

3, off-policy updates using importance sampling corrections ˆρ will

have the same expected value as on-policy updates using ˆπ.

The authors gratefully acknowledge the ideas and encouragement

they have received in this work from Eddie Rafols, Mark Ring,

Lihong Li and other members of the rlai.net group. We thank Csaba

Szepesvari and the reviewers of the paper for constructive

comments. This research was supported in par t by iCore, NSERC,

Alberta Ingenuity, and CFI.

The target policy π is induced by a recognizer function

c : [0, 1] !→ #

+

:

π(a) =

c(a)b(a)

P

x

c(x)b(x)

=

c(a)b(a)

µ

(1)

(see blue line below). The estimator is:

ˆm

π

=

1

n

n

X

i=1

z

i

π(a

i

)

b(a

i

)

=

1

n

n

X

i=1

z

i

c(a

i

)b(a

i

)

µ

1

b(a

i

)

=

1

n

n

X

i=1

z

i

c(a

i

)

µ

!" !"" #"" $"" %"" &""

"

'&

!

!'&

()*+,+-./01.,+.2-34

5.13,.630780#""04.)*/301.,+.2-349

:+;<7=;0,3-762+>3,

:+;<0,3-762+>3,

?=)@3,07804.)*/30.-;+724

McGill

The importance sampling corrections are:

ρ(s, a) =

π(s, a)

b(s, a)

=

c(s, a)

µ(s)

where µ(s) depends on the behavior policy b. If b is unknown,

instead of µ we will use a

maximum likelihood estimate

ˆµ : S → [0, 1], and importance sampling corrections will be defined

as:

ˆρ(s, a) =

c(s, a)

ˆµ(s)

On-policy learning

If π is used to generate behavior, then the reward model of an

option can be learned using TD-learning.

The n-step truncated return is:

¯

R

(n)

t

= r

t+1

+ (1 − β

t+1

)

¯

R

(n−1)

t+1

.

The λ-return is defined as usual:

¯

R

λ

t

= (1 − λ)

∞

X

n=1

λ

n−1

¯

R

(n)

t

.

The parameters of the function approximator are updated on every

step proportionally to:

∆

¯

θ

t

=

h

¯

R

λ

t

− y

t

i

∇

θ

y

t

(1 − β

1

) · · · (1 − β

t

).

• Recognizers reduce variance

• First off-policy learning algorithm for option models

• Off-policy learning without knowledge of the behavior

distribution

• Observations

– Options are a natural way to reduce the variance of

importance sampling algorithms (because of the termination

condition)

– Recognizers are a natural way to define options, especially

for large or continuous action spaces.

Contributions

! !"# !"$ %

&'()*+

!

%,

-.*/0/)1)(2

34+5)(2

67+'()*+5

80.94(

:*1)'2;<)(=;

.4'*9+)>4.

?4=0@)*.;:*1)'2

80.94(;

:*1)'2;<A*

;.4'*9+)>4.

Off-policy learning with options and recognizers

Doina Precup, Richard S. Sutton, Cosmin Paduraru, Anna J. Koop, Satinder Singh

McGill University, University of Alberta, University of Michigan

Options

Distinguished

region

Ideas and Motivation Background Recognizers

Off-policy algorithm for options

Learning w/o the Behavior Policy

Wall

Options

• A way of behaving for a period of time

Models of options

• A predictive model of the outcome of following the option

• What state will you be in?

• Will you still control the ball?

• What will be the value of some feature?

• Will your teammate receive the pass?

• What will be the expected total reward along the way?

• How long can you keep control of the ball?

Dribble Keepaway Pass

Options for soccer players could be

Options in a 2D world

The red and blue options

are mostly executed.

Surely we should be able

to learn about them from

this experience!

Experienced

trajectory

Off-policy learning

• Learning about one policy while behaving according to another

• Needed for RL w/exploration (as in Q-learning)

• Needed for learning abstract models of dynamical systems

(representing world knowledge)

• Enables efficient exploration

• Enables learning about many ways of behaving at the same time

(learning models of options)

! a policy

! a stopping condition

Non-sequential example

Problem formulation w/o recognizers

Problem formulation with recognizers

• One state

• Continuous action a ∈ [0,1]

• Outcomes z

i

= a

i

• Given samples from policy b : [0, 1] → #

+

• Would like to estimate the mean outcome for a sub-region of the

action space, here a ∈ [0.7,0.9]

Targetpolicy π : [0, 1] → "

+

is uniform within the region of interest

(see dashed line in figure below). The estimator is:

ˆm

π

=

1

n

n

X

i=1

π(a

i

)

b(a

i

)

z

i

.

Theorem 1 Let A = {a

1

, .. . a

k

} ⊆ A be a subset of all the

possible actions. Consider a fixed behavior policy b and let π

A

be

the class of policies that only choose actions from A, i.e., if

π(a) > 0 then a ∈ A. Then the policy induced by b and the binary

recognizer c

A

is the policy with minimum-variance one-step

importance sampling corrections, among those in π

A

:

π as given by (1) = arg min

p∈π

A

E

b

"

„

π(a

i

)

b(a

i

)

«

2

#

(2)

Proof: Using Lagrange multipliers

Theorem 2 Consider two binary recognizers c

1

and c

2

, such that

µ

1

> µ

2

. Then the impor tance sampling corrections for c

1

have

lower variance than the importance sampling corrections for c

2

.

Off-policy learning

Let the importance sampling ratio at time step t be:

ρ

t

=

π(s

t

, a

t

)

b(s

t

, a

t

)

The truncated n-step return, R

(n)

t

, satisfies:

R

(n)

t

= ρ

t

[r

t+1

+ (1 − β

t+1

)R

(n−1)

t+1

].

The update to the parameter vector is proportional to:

∆θ

t

=

h

R

λ

t

− y

t

i

∇

θ

y

t

ρ

0

(1 − β

1

) ·· · ρ

t−1

(1 − β

t

).

Theorem 3 For every time step t ≥ 0 and any initial state s,

E

b

[∆θ

t

|s] = E

π

[∆

¯

θ

t

|s].

Proof: By induction on n we show that

E

b

{R

(n)

t

|s} = E

π

{

¯

R

(n)

t

|s}

which implies that E

b

{R

λ

t

|s} = E

π

(

¯

R

λ

t

|s}. The rest of the proof is

algebraic manipulations (see paper).

Implementation of off-policy learning for options

In order to avoid ∆θ → 0, we use a restart function g : S → [0, 1]

(like in the PSD algorithm). The forward algorithm becomes:

∆θ

t

= (R

λ

t

− y

t

)∇

θ

y

t

t

X

i=0

g

i

ρ

i

...ρ

t−1

(1 − β

i+1

)...(1 − β

t

),

where g

t

is the extent of restarting in state s

t

.

The incremental learning algorithm is the following:

• Initialize κ

0

= g

0

, e

0

= κ

0

∇

θ

y

0

• At every time step t:

δ

t

= ρ

t

(r

t+1

+ (1 − β

t+1

)y

t+1

) − y

t

θ

t+1

= θ

t

+ αδ

t

e

t

κ

t+1

= ρ

t

κ

t

(1 − β

t+1

) + g

t+1

e

t+1

= λρ

t

(1 − β

t+1

)e

t

+ κ

t+1

∇

θ

y

t+1

References

Off-policy learning is tricky

• The Bermuda triangle

! Temporal-difference learning

! Function approximation (e.g., linear)

! Off-policy

• Leads to divergence of iterative algorithms

! Q-learning diverges with linear FA

! Dynamic programming diverges with linear FA

Baird's Counterexample

V

k

(s) =

!(7)+2!(1)

terminal

state

99%

1%

100%

V

k

(s) =

!(7)+2!(2)

V

k

(s) =

!(7)+2!(3)

V

k

(s) =

!(7)+2!(4)

V

k

(s) =

!(7)+2!(5)

V

k

(s) =

2!(7)+!(6)

0

5

10

0 1000 2000 3000 4000 5000

10

10

/ -10

Iterations (k)

5

10

10

10

0

10

-

-

Parameter

values, !

k

(i)

(log scale,

broken at !1)

!

k

(7)

!

k

(1) –

!

k

(5)

!

k

(6)

Precup, Sutton & Dasgupta (PSD) algorithm

• Uses importance sampling to convert off-policy case to on-policy case

• Convergence assured by theorem of Tsitsiklis & Van Roy (1997)

• Survives the Bermuda triangle!

BUT!

• Variance can be high, even infinite (slow learning)

• Difficult to use with continuous or large action spaces

• Requires explicit representation of behavior policy (probability distribution)

Option formalism

An option is defined as a triple o = !I ,π, β"

• I ⊆ S is the set of states in which the option can be i nitiated

• π is the internal policy of the option

• β : S → [0,1] is a stochastic termination condition

We want to compute the

reward model of option o:

E

o

{R(s)} = E {r

1

+ r

2

+ . .. + r

T

|s

0

= s, π, β}

We assume that

linear function approximation is used to represent

the model:

E

o

{R(s)} ≈ θ

T

φ

s

= y

Baird,L. C. (1995). Residualalgorithms: Reinforcement learning withfunction

approximation. InProceedings of ICML.

Precup,D., Sutton, R.S. and Dasgupta,S. (2001). Off-policytemporal-difference

learning withfunction approximation. InProceedings of ICML.

Sutton,R.S., Precup D.and Singh, S(1999). Between MDPs andsemi-MDPs: A

frameworkfor temporal abstractionin reinforcement learning. Artificial

Intelligence,vol . 112, pp.181–211.

Sutton,,R.S. and Tanner,B. (2005). Temporal-differencenetworks. InProceedings

ofNIPS-17.

SuttonR.S., Rafols E.and Koop, A.(2006). Temporalabstraction in

temporal-differencenetworks”. In Proceedings ofNIPS-18.

Tadic,V. (2001).On the convergenceof temporal-difference learningwith linear

functionapproximation. In Machine learningvol. 42.

Tsitsiklis,J. N., andVan Roy,B. (1997). An analysisof temporal-differencelear ning

withfunction approximation. IEEE Transactionson AutomaticControl 42.

Acknowledgements

Theorem 4 If the following assumptions hold:

• The function approximator used to represent the model is a

state aggregrator

• The recognizer behaves consistently with the function

approximator, i.e., c(s,a) = c(p, a),∀s ∈ p

• The recognition probability for each partition, ˆµ(p) is estimated

using maximum likelihood:

ˆµ(p) =

N(p, c = 1)

N(p)

Then there exists a policy ˆπ such that the off-policy learning

algorithm converges to the same model as the on-policy algorithm

using ˆπ.

Proof: In the limit, w.p.1, ˆµ converges to

P

s

d

b

(s|p)

P

a

c(p, a)b(s,a) where d

b

(s|p) is the probability of

visiting state s from partition p under the stationary distribution of b.

Let ˆπ be defined to be the same for all states in a par tition p:

ˆπ (p,a) = ˆρ(p, a)

X

s

d

b

(s|p)b(s, a)

ˆπ is well-defined, in the sense that

P

a

ˆπ (s,a) = 1. Using Theorem

3, off-policy updates using importance sampling corrections ˆρ will

have the same expected value as on-policy updates using ˆπ.

The authors gratefully acknowledge the ideas and encouragement

they have received in this work from Eddie Rafols, Mark Ring,

Lihong Li and other members of the rlai.net group. We thank Csaba

Szepesvari and the reviewers of the paper for constructive

comments. This research was supported in part by iCore, NSERC,

Alberta Ingenuity, and CFI.

The target policy π is induced by a recognizer function

c : [0, 1] !→ #

+

:

π(a) =

c(a)b(a)

P

x

c(x)b(x)

=

c(a)b(a)

µ

(1)

(see blue line below). The estimator is:

ˆm

π

=

1

n

n

X

i=1

z

i

π(a

i

)

b(a

i

)

=

1

n

n

X

i=1

z

i

c(a

i

)b(a

i

)

µ

1

b(a

i

)

=

1

n

n

X

i=1

z

i

c(a

i

)

µ

!" !"" #"" $"" %"" &""

"

'&

!

!'&

()*+,+-./01.,+.2-34

5.13,.630780#""04.)*/301.,+.2-349

:+;<7=;0,3-762+>3,

:+;<0,3-762+>3,

?=)@3,07804.)*/30.-;+724

McGill

The importance sampling corrections are:

ρ(s, a) =

π(s, a)

b(s, a)

=

c(s, a)

µ(s)

where µ(s) depends on the behavior policy b. If b is unknown,

instead of µ we will use a

maximum likelihood estimate

ˆµ : S → [0, 1], and importance sampling corrections will be defined

as:

ˆρ(s, a) =

c(s, a)

ˆµ(s)

On-policy learning

If π is used to generate behavior,then the reward model of an

option can be learned using TD-learning.

The n-step truncated return is:

¯

R

(n)

t

= r

t+1

+ (1 − β

t+1

)

¯

R

(n−1)

t+1

.

The λ-return is defined as usual:

¯

R

λ

t

= (1 − λ)

∞

X

n=1

λ

n−1

¯

R

(n)

t

.

The parameters of the function approximator are updated on every

step proportionally to:

∆

¯

θ

t

=

h

¯

R

λ

t

− y

t

i

∇

θ

y

t

(1 − β

1

) ·· · (1 − β

t

).

• Recognizers reduce variance

• First off-policy learning algorithm for option models

• Off-policy learning without knowledge of the behavior

distribution

• Observations

– Options are a natural way to reduce the variance of

importance sampling algorithms (because of the termination

condition)

– Recognizers are a natural way to define options, especially

for large or continuous action spaces.