没有合适的资源?快使用搜索试试~ 我知道了~

Latent Dirichlet Allocation.pdf

需积分: 0 12 下载量 101 浏览量

2009-11-09

11:10:34

上传

评论

收藏 414KB PDF 举报

温馨提示

试读

30页

Blei的关于LDA模型的经典文章 学习topic model必看

资源推荐

资源详情

资源评论

Journal of Machine Learning Research 3 (2003) 993-1022 Submitted 2/02; Published 1/03

Latent Dirichlet Allocation

David M. Blei BLEI@CS.BERKELEY.EDU

Computer Science Division

University of California

Berkeley, CA 94720, USA

Andrew Y. Ng ANG@CS.STANFORD.EDU

Computer Science Department

Stanford University

Stanford, CA 94305, USA

Michael I. Jordan JORDAN@CS.BERKELEY.EDU

Computer Science Division and Department of Statistics

University of California

Berkeley, CA 94720, USA

Editor: John Lafferty

Abstract

We describe latent Dirichlet allocation (LDA), a generative probabilistic model for collections of

discrete data such as text corpora. LDA is a three-level hierarchical Bayesian model, in which each

item of a collection is modeled as a finite mixture over an underlying set of topics. Each topic is, in

turn, modeled as an infinite mixture over an underlying set of topic probabilities. In the context of

text modeling, the topic probabilities provide an explicit representation of a document. We present

efficient approximate inference techniques based on variational methods and an EM algorithm for

empirical Bayes parameter estimation. We report results in document modeling, text classification,

and collaborative filtering, comparing to a mixture of unigrams model and the probabilistic LSI

model.

1. Introduction

In this paper we consider the problem of modeling text corpora and other collections of discrete

data. The goal is to find short descriptions of the members of a collection that enable efficient

processing of large collections while preserving the essential statistical relationships that are useful

for basic tasks such as classification, novelty detection, summarization, and similarity and relevance

judgments.

Significant progress has been made on this problem by researchers in the field of informa-

tion retrieval (IR) (Baeza-Yates and Ribeiro-Neto, 1999). The basic methodology proposed by

IR researchers for text corpora—a methodology successfully deployed in modern Internet search

engines—reduces each document in the corpus to a vector of real numbers, each of which repre-

sents ratios of counts. In the popular tf-idf scheme (Salton and McGill, 1983), a basic vocabulary

of “words” or “terms” is chosen, and, for each document in the corpus, a count is formed of the

number of occurrences of each word. After suitable normalization, this term frequency count is

compared to an inverse document frequency count, which measures the number of occurrences of a

c

2003 David M. Blei, Andrew Y. Ng and Michael I. Jordan.

BLEI,NG, AND JORDAN

word in the entire corpus (generally on a log scale, and again suitably normalized). The end result

is a term-by-document matrix X whose columns contain the tf-idf values for each of the documents

in the corpus. Thus the tf-idf scheme reduces documents of arbitrary length to fixed-length lists of

numbers.

While the tf-idf reduction has some appealing features—notably in its basic identification of sets

of words that are discriminative for documents in the collection—the approach also provides a rela-

tively small amount of reduction in description length and reveals little in the way of inter- or intra-

document statistical structure. To address these shortcomings, IR researchers have proposed several

other dimensionality reduction techniques, most notably latent semantic indexing (LSI) (Deerwester

et al., 1990). LSI uses a singular value decomposition of the X matrix to identify a linear subspace

in the space of tf-idf features that captures most of the variance in the collection. This approach can

achieve significant compression in large collections. Furthermore, Deerwester et al. argue that the

derived features of LSI, which are linear combinations of the original tf-idf features, can capture

some aspects of basic linguistic notions such as synonymy and polysemy.

To substantiate the claims regarding LSI, and to study its relative strengths and weaknesses, it is

useful to develop a generative probabilistic model of text corpora and to study the ability of LSI to

recover aspects of the generative model from data (Papadimitriou et al., 1998). Given a generative

model of text, however, it is not clear why one should adopt the LSI methodology—one can attempt

to proceed more directly, fitting the model to data using maximum likelihood or Bayesian methods.

A significant step forward in this regard was made by Hofmann (1999), who presented the

probabilistic LSI (pLSI) model, also known as the aspect model, as an alternative to LSI. The pLSI

approach, which we describe in detail in Section 4.3, models each word in a document as a sample

from a mixture model, where the mixture components are multinomial random variables that can be

viewed as representations of “topics.” Thus each word is generated from a single topic, and different

words in a document may be generated from different topics. Each document is represented as

a list of mixing proportions for these mixture components and thereby reduced to a probability

distribution on a fixed set of topics. This distribution is the “reduced description” associated with

the document.

While Hofmann’s work is a useful step toward probabilistic modeling of text, it is incomplete

in that it provides no probabilistic model at the level of documents. In pLSI, each document is

represented as a list of numbers (the mixing proportions for topics), and there is no generative

probabilistic model for these numbers. This leads to several problems: (1) the number of parame-

ters in the model grows linearly with the size of the corpus, which leads to serious problems with

overfitting, and (2) it is not clear how to assign probability to a document outside of the training set.

To see how to proceed beyond pLSI, let us consider the fundamental probabilistic assumptions

underlying the class of dimensionality reduction methods that includes LSI and pLSI. All of these

methods are based on the “bag-of-words” assumption—that the order of words in a document can

be neglected. In the language of probability theory, this is an assumption of exchangeability for the

words in a document (Aldous, 1985). Moreover, although less often stated formally, these methods

also assume that documents are exchangeable; the specific ordering of the documents in a corpus

can also be neglected.

A classic representation theorem due to de Finetti (1990) establishes that any collection of ex-

changeable random variables has a representation as a mixture distribution—in general an infinite

mixture. Thus, if we wish to consider exchangeable representations for documents and words, we

need to consider mixture models that capture the exchangeability of both words and documents.

994

LATENT DIRICHLET ALLOCATION

This line of thinking leads to the latent Dirichlet allocation (LDA) model that we present in the

current paper.

It is important to emphasize that an assumption of exchangeability is not equivalent to an as-

sumption that the random variables are independent and identically distributed. Rather, exchange-

ability essentially can be interpreted as meaning “conditionally independent and identically dis-

tributed,” where the conditioning is with respect to an underlying latent parameter of a probability

distribution. Conditionally, the joint distribution of the random variables is simple and factored

while marginally over the latent parameter, the joint distribution can be quite complex. Thus, while

an assumption of exchangeability is clearly a major simplifying assumption in the domain of text

modeling, and its principal justification is that it leads to methods that are computationally efficient,

the exchangeability assumptions do not necessarily lead to methods that are restricted to simple

frequency counts or linear operations. We aim to demonstrate in the current paper that, by taking

the de Finetti theorem seriously, we can capture significant intra-document statistical structure via

the mixing distribution.

It is also worth noting that there are a large number of generalizations of the basic notion of

exchangeability, including various forms of partial exchangeability, and that representation theo-

rems are available for these cases as well (Diaconis, 1988). Thus, while the work that we discuss in

the current paper focuses on simple “bag-of-words” models, which lead to mixture distributions for

single words (unigrams), our methods are also applicable to richer models that involve mixtures for

larger structural units such as n-grams or paragraphs.

The paper is organized as follows. In Section 2 we introduce basic notation and terminology.

The LDA model is presented in Section 3 and is compared to related latent variable models in

Section 4. We discuss inference and parameter estimation for LDA in Section 5. An illustrative

example of fitting LDA to data is provided in Section 6. Empirical results in text modeling, text

classification and collaborative filtering are presented in Section 7. Finally, Section 8 presents our

conclusions.

2. Notation and terminology

We use the language of text collections throughout the paper, referring to entities such as “words,”

“documents,” and “corpora.” This is useful in that it helps to guide intuition, particularly when

we introduce latent variables which aim to capture abstract notions such as topics. It is important

to note, however, that the LDA model is not necessarily tied to text, and has applications to other

problems involving collections of data, including data from domains such as collaborative filtering,

content-based image retrieval and bioinformatics. Indeed, in Section 7.3, we present experimental

results in the collaborative filtering domain.

Formally, we define the following terms:

• A word is the basic unit of discrete data, defined to be an item from a vocabulary indexed by

{1,... ,V}. We represent words using unit-basis vectors that have a single component equal to

one and all other components equal to zero. Thus, using superscripts to denote components,

the vth word in the vocabulary is represented by a V-vector w such that w

v

= 1 and w

u

= 0 for

u 6= v.

• A document is a sequence of N words denoted by w =(w

1

,w

2

,... ,w

N

), where w

n

is the nth

word in the sequence.

• A corpus is a collection of M documents denoted by

D = {w

1

,w

2

,... ,w

M

}.

995

BLEI,NG, AND JORDAN

We wish to find a probabilistic model of a corpus that not only assigns high probability to

members of the corpus, but also assigns high probability to other “similar” documents.

3. Latent Dirichlet allocation

Latent Dirichlet allocation (LDA) is a generative probabilistic model of a corpus. The basic idea is

that documents are represented as random mixtures over latent topics, where each topic is charac-

terized by a distribution over words.

1

LDA assumes the following generative process for each document w in a corpus D:

1. Choose N ∼ Poisson(ξ).

2. Choose θ ∼ Dir(α).

3. For each of the N words w

n

:

(a) Choose a topic z

n

∼ Multinomial(θ).

(b) Choose a word w

n

from p(w

n

|z

n

,β), a multinomial probability conditioned on the topic

z

n

.

Several simplifying assumptions are made in this basic model, some of which we remove in subse-

quent sections. First, the dimensionality k of the Dirichlet distribution (and thus the dimensionality

of the topic variable z) is assumed known and fixed. Second, the word probabilities are parameter-

ized by a k ×V matrix β where β

ij

= p(w

j

= 1|z

i

= 1), which for now we treat as a fixed quantity

that is to be estimated. Finally, the Poisson assumption is not critical to anything that follows and

more realistic document length distributions can be used as needed. Furthermore, note that N is

independent of all the other data generating variables (θ and z). It is thus an ancillary variable and

we will generally ignore its randomness in the subsequent development.

A k-dimensional Dirichlet random variable θ can take values in the (k − 1)-simplex (a k-vector

θ lies in the (k− 1)-simplex if θ

i

≥ 0,

∑

k

i=1

θ

i

= 1), and has the following probability density on this

simplex:

p(θ|α)=

Γ

∑

k

i=1

α

i

∏

k

i=1

Γ(α

i

)

θ

α

1

−1

1

···θ

α

k

−1

k

, (1)

where the parameter αis a k-vector with components α

i

> 0, and where Γ(x) is the Gamma function.

The Dirichlet is a convenient distribution on the simplex — it is in the exponential family, has finite

dimensional sufficient statistics, and is conjugate to the multinomial distribution. In Section 5, these

properties will facilitate the development of inference and parameter estimation algorithms for LDA.

Given the parameters α and β, the joint distribution of a topic mixture θ, a set of N topics z, and

a set of N words w is given by:

p(θ,z,w|α, β)=p(θ|α)

N

∏

n=1

p(z

n

|θ)p(w

n

|z

n

,β), (2)

1. We refer to the latent multinomial variables in the LDA model as topics, so as to exploit text-oriented intuitions, but

we make no epistemological claims regarding these latent variables beyond their utility in representing probability

distributions on sets of words.

996

LATENT DIRICHLET ALLOCATION

α

zw

θ

β

M

N

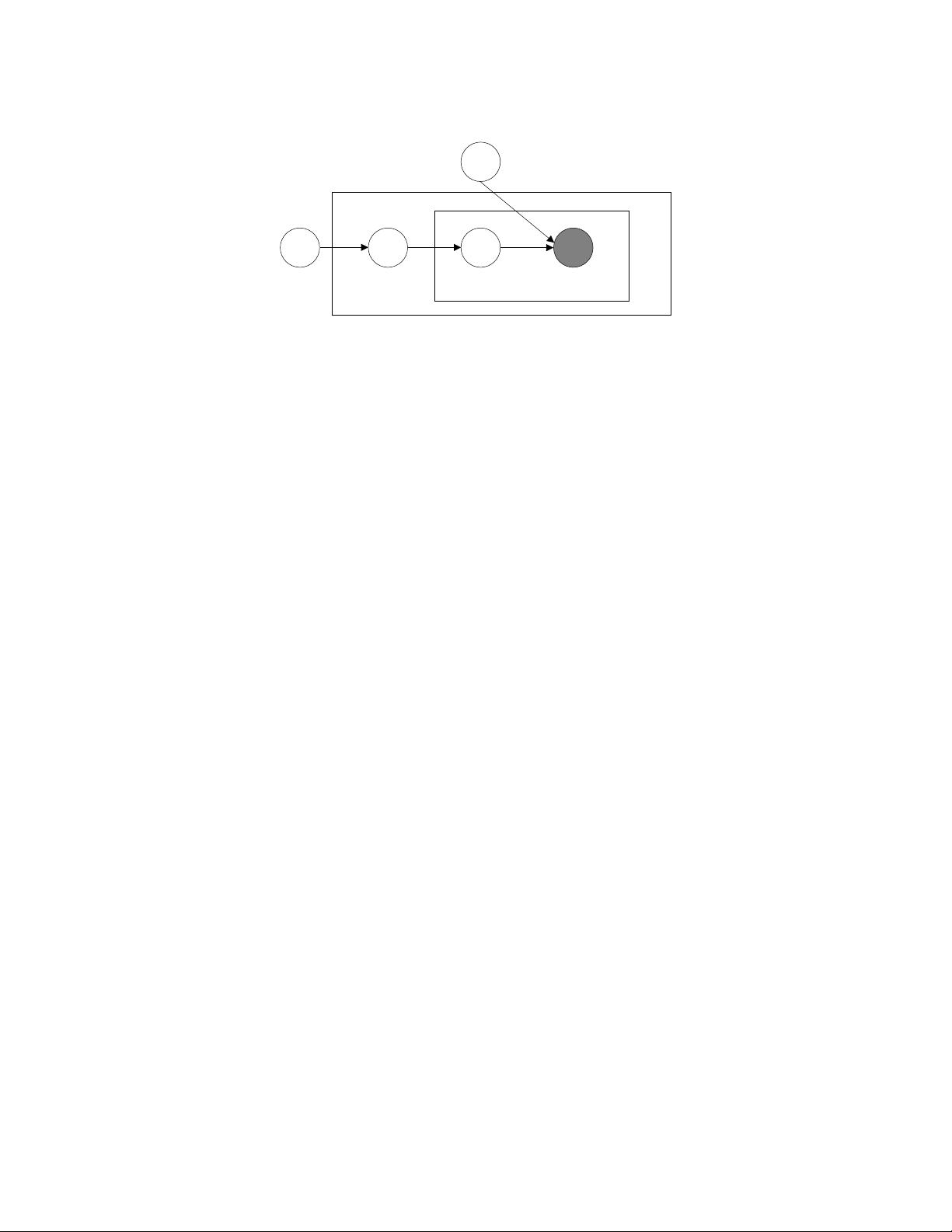

Figure 1: Graphical model representation of LDA. The boxes are “plates” representing replicates.

The outer plate represents documents, while the inner plate represents the repeated choice

of topics and words within a document.

where p(z

n

|θ) is simply θ

i

for the unique i such that z

i

n

= 1. Integrating over θ and summing over

z, we obtain the marginal distribution of a document:

p(w|α, β)=

Z

p(θ|α)

N

∏

n=1

∑

z

n

p(z

n

|θ)p(w

n

|z

n

,β)

!

dθ. (3)

Finally, taking the product of the marginal probabilities of single documents, we obtain the proba-

bility of a corpus:

p(

D| α,β)=

M

∏

d=1

Z

p(θ

d

|α)

N

d

∏

n=1

∑

z

dn

p(z

dn

|θ

d

)p(w

dn

|z

dn

,β)

!

dθ

d

.

The LDA model is represented as a probabilistic graphical model in Figure 1. As the figure

makes clear, there are three levels to the LDA representation. The parameters α and β are corpus-

level parameters, assumed to be sampled once in the process of generating a corpus. The variables

θ

d

are document-level variables, sampled once per document. Finally, the variables z

dn

and w

dn

are

word-level variables and are sampled once for each word in each document.

It is important to distinguish LDA from a simple Dirichlet-multinomial clustering model. A

classical clustering model would involve a two-level model in which a Dirichlet is sampled once

for a corpus, a multinomial clustering variable is selected once for each document in the corpus,

and a set of words are selected for the document conditional on the cluster variable. As with many

clustering models, such a model restricts a document to being associated with a single topic. LDA,

on the other hand, involves three levels, and notably the topic node is sampled repeatedly within the

document. Under this model, documents can be associated with multiple topics.

Structures similar to that shown in Figure 1 are often studied in Bayesian statistical modeling,

where they are referred to as hierarchical models (Gelman et al., 1995), or more precisely as con-

ditionally independent hierarchical models (Kass and Steffey, 1989). Such models are also often

referred to as parametric empirical Bayes models, a term that refers not only to a particular model

structure, but also to the methods used for estimating parameters in the model (Morris, 1983). In-

deed, as we discuss in Section 5, we adopt the empirical Bayes approach to estimating parameters

such as α and β in simple implementations of LDA, but we also consider fuller Bayesian approaches

as well.

997

剩余29页未读,继续阅读

资源评论

jljkid

- 粉丝: 0

- 资源: 1

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜最新资源

- Integrated-Energy-Systems-with-CAES-(注释完全,可直接运行)

- PDF为英语文本绘制热区(DEMO)

- 4.22.cpp

- 基于Transformer和Bert的close domain抽取式问答系统构建的智能聊天机器人项目源代码

- 基于扩展(EKF)和无迹卡尔曼滤波(UKF)的电力系统动态状态估计(注释完全,可直接运行)(文档加Matlab源码)

- 2023各大软件技术峰会演进资料汇总(PPT),资料难得

- 基于混沌集成决策树的电能质量复合扰动识别(注释完全,可直接运行)(文档加Matlab源码)

- 航空公司如何成功实现数字化转型英文版.rar

- RTL8309N-8口交换机评估板Cadence设计硬件(原理图+PCB)及PDF原理图+RTL8309N技术规格书

- 基于JAVA的打飞机游戏设计(程序).zip

资源上传下载、课程学习等过程中有任何疑问或建议,欢迎提出宝贵意见哦~我们会及时处理!

点击此处反馈

安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功