
Paper: The wake-sleep algorithm for unsupervised neural networks


The wake-sleep algorithm for unsupervised neural networks, by Hinton; it introduces the Helmholtz machine and the wake-sleep algorithm.


The wake-sleep algorithm for unsupervised neural networks

Geoffrey E Hinton, Peter Dayan, Brendan J Frey, Radford M Neal

Department of Computer Science

University of Toronto

6 King’s College Road

Toronto M5S 1A4, Canada

3rd April 1995

Abstract

An unsupervised learning algorithm for a multilayer network of stochastic neurons is described. Bottom-up “recognition” connections convert the input into representations in successive hidden layers and top-down “generative” connections reconstruct the representation in one layer from the representation in the layer above. In the “wake” phase, neurons are driven by recognition connections, and generative connections are adapted to increase the probability that they would reconstruct the correct activity vector in the layer below. In the “sleep” phase, neurons are driven by generative connections and recognition connections are adapted to increase the probability that they would produce the correct activity vector in the layer above.

Supervised learning algorithms for multilayer neural networks face two problems: They require a teacher to specify the desired output of the network and they require some method of communicating error information to all of the connections. The wake-sleep algorithm avoids both these problems. When there is no external teaching signal to be matched, some other goal is required to force the hidden units to extract underlying structure. In the wake-sleep algorithm the goal is to learn representations that are economical to describe but allow the input to be reconstructed accurately. We can quantify this goal by imagining a communication game in which each vector of raw sensory inputs is communicated to a receiver by first sending its hidden representation and then sending the difference between the input vector and its top-down reconstruction from the hidden representation. The aim of learning is to minimize the “description length” which is the total number of bits that would be required to communicate the input vectors in this way [1]. No communication actually takes place, but minimizing the description length that would be required forces the network to learn economical representations that capture the underlying regularities in the data [2].


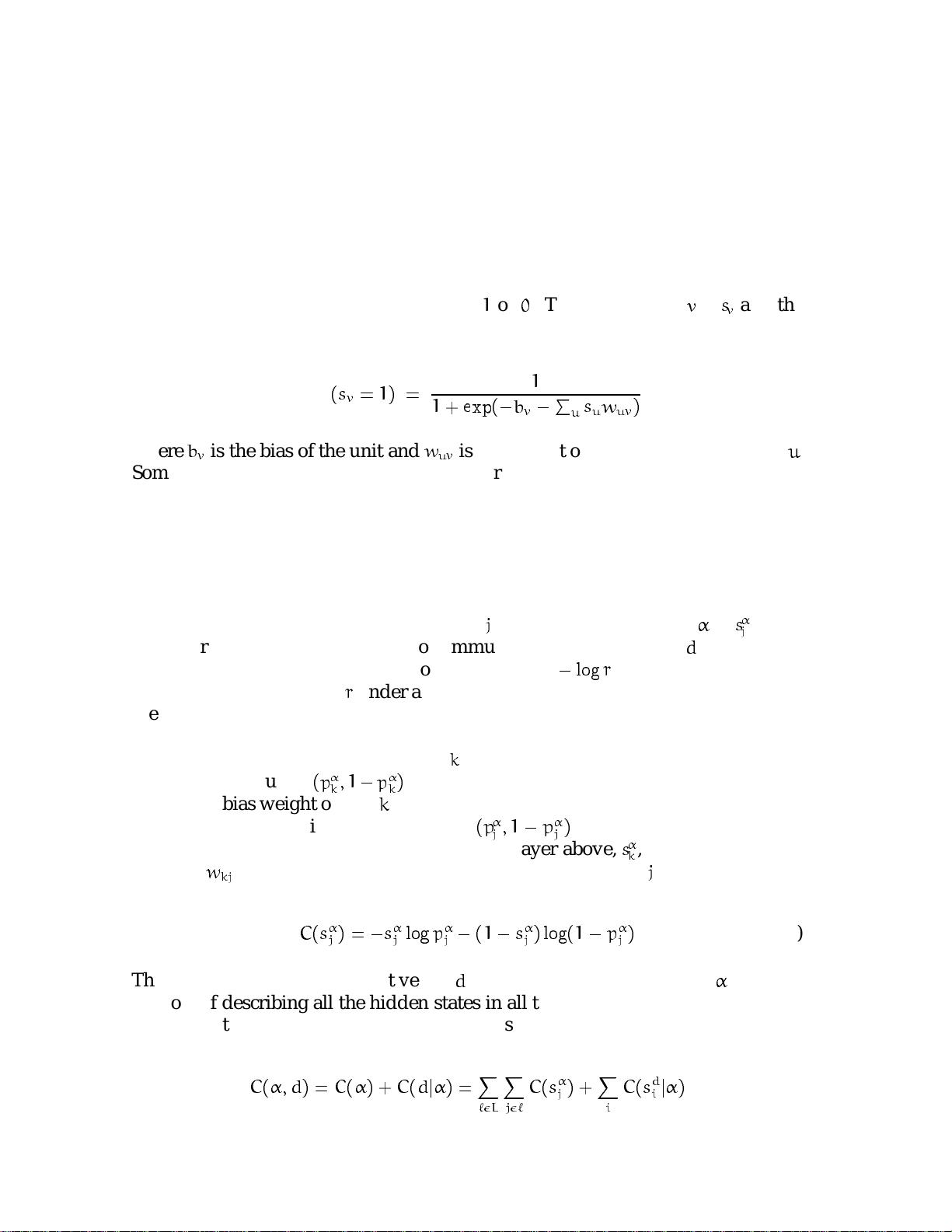

The neural network has two quite different sets of connections. The bottom-up “recognition” connections are used to convert the input vector into a representation in one or more layers of hidden units. The top-down “generative” connections are then used to reconstruct an approximation to the input vector from its underlying representation. The training algorithm for these two sets of connections can be used with many different types of stochastic neuron, but for simplicity we use only stochastic binary units that have states of 1 or 0. The state of unit $v$ is $s_v$ and the probability that it is on is:

$$\mathrm{Prob}(s_v = 1) = \frac{1}{1 + \exp\left(-b_v - \sum_u s_u w_{uv}\right)} \qquad (1)$$

where $b_v$ is the bias of the unit and $w_{uv}$ is the weight on a connection from unit $u$. Sometimes the units are driven by the generative weights and other times by the recognition weights, but the same equation is used in both cases (figure 1).
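As a minimal sketch of Eq. 1, the following Python computes the probability that a stochastic binary unit is on and then samples its state. The function names, the list-based layout of states and weights, and the seeded random generator are illustrative assumptions, not part of the paper.

```python
import math
import random

def unit_on_probability(bias, states_in, weights_in):
    """Eq. 1: logistic function of the bias plus the weighted input states."""
    total_input = bias + sum(s * w for s, w in zip(states_in, weights_in))
    return 1.0 / (1.0 + math.exp(-total_input))

def sample_unit(bias, states_in, weights_in, rng):
    """Draw a binary state: 1 with the Eq. 1 probability, otherwise 0."""
    p_on = unit_on_probability(bias, states_in, weights_in)
    return 1 if rng.random() < p_on else 0

# Hypothetical unit with three inputs; the weighted inputs cancel out,
# so the total input is 0 and the unit is on with probability 0.5.
rng = random.Random(0)
p = unit_on_probability(0.0, [1, 0, 1], [0.5, -2.0, -0.5])
state = sample_unit(0.0, [1, 0, 1], [0.5, -2.0, -0.5], rng)
```

The same function serves both directions: passing recognition weights and the states of the layer below drives the unit bottom-up, while passing generative weights and the states of the layer above drives it top-down.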

In the “wake” phase the units are driven bottom-up using the recognition weights, producing a representation of the input vector in the first hidden layer, a representation of this representation in the second hidden layer and so on. All of these layers of representation combined are called the “total representation” of the input, and the binary state of each hidden unit, $j$, in total representation $\alpha$ is $s_j^\alpha$. This total representation could be used to communicate the input vector, $d$, to a receiver. According to Shannon’s coding theorem, it requires $-\log r$ bits to communicate an event that has probability $r$ under a distribution agreed by the sender and receiver. We assume that the receiver knows the top-down generative weights [3] so these can be used to create the agreed probability distributions required for communication. First, the activity of each unit, $k$, in the top hidden layer is communicated using the distribution $(p_k^\alpha, 1 - p_k^\alpha)$ which is obtained by applying Eq. 1 to the single generative bias weight of unit $k$. Then the activities of the units in each lower layer are communicated using the distribution $(p_j^\alpha, 1 - p_j^\alpha)$ obtained by applying Eq. 1 to the already communicated activities in the layer above, $s_k^\alpha$, and the generative weights, $w_{kj}$. The description length of the binary state of unit $j$ is:

$$C(s_j^\alpha) = -s_j^\alpha \log p_j^\alpha - (1 - s_j^\alpha) \log(1 - p_j^\alpha) \qquad (2)$$
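The cost in Eq. 2 can be illustrated with a few lines of Python. This sketch assumes base-2 logarithms so that the result is in bits, and it assumes $0 < p_j < 1$; the function name is illustrative.

```python
import math

def state_cost_bits(s, p):
    """Eq. 2 in bits: -s*log2(p) - (1-s)*log2(1-p) for binary s and 0 < p < 1."""
    return -(s * math.log2(p) + (1 - s) * math.log2(1.0 - p))

# A unit that is on when the generative model gave it probability 0.5 costs 1 bit.
cost_even = state_cost_bits(1, 0.5)
# A unit that is on when the model gave it probability 0.125 costs 3 bits.
cost_surprise = state_cost_bits(1, 0.125)
# The same unit being off was expected, so it costs well under 1 bit.
cost_expected = state_cost_bits(0, 0.125)
```

States that the generative model predicts well are cheap to communicate, which is why lowering this cost pushes the generative weights toward good reconstructions.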

The description length for input vector $d$ using the total representation $\alpha$ is simply the cost of describing all the hidden states in all the hidden layers plus the cost of describing the input vector given the hidden states:

$$C(\alpha, d) = C(\alpha) + C(d \mid \alpha) = \sum_{\ell \in L} \sum_{j \in \ell} C(s_j^\alpha) + \sum_i C(s_i^d \mid \alpha) \qquad (3)$$


where $\ell$ is an index over the $L$ layers of hidden units and $i$ is an index over the input units which have states $s_i^d$.
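To make Eq. 3 concrete, the sketch below sums the Eq. 2 costs over every layer, using Eq. 1 with the generative biases and weights to obtain each unit's probability from the layer above. The data layout (layers listed top-down, `gen_weights[n][k][j]` as the weight from unit `k` in layer `n` to unit `j` in layer `n + 1`), the base-2 logarithm, and all numeric values are assumptions made for illustration.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def state_cost_bits(s, p):
    """Eq. 2 in bits for a binary state s with model probability p."""
    return -(s * math.log2(p) + (1 - s) * math.log2(1.0 - p))

def total_cost_bits(layers, gen_biases, gen_weights):
    """Eq. 3: layers holds binary states from the top hidden layer down to the input d."""
    cost = 0.0
    above = []  # the top hidden layer has no layer above it, so only its bias applies
    for idx, states in enumerate(layers):
        for j, s_j in enumerate(states):
            x = gen_biases[idx][j]
            for k, s_k in enumerate(above):
                x += s_k * gen_weights[idx - 1][k][j]
            cost += state_cost_bits(s_j, sigmoid(x))
        above = states
    return cost

hidden = [1, 0]   # hypothetical total representation (one hidden layer)
d = [1, 1, 0]     # hypothetical input vector
biases = [[0.0, 0.0], [0.0, 0.0, 0.0]]

# With all-zero generative weights every unit has probability 0.5 and costs 1 bit.
flat_weights = [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
flat_cost = total_cost_bits([hidden, d], biases, flat_weights)

# Weights under which the first hidden unit predicts d make the input cheaper to send.
informed_weights = [[[2.0, 2.0, -2.0], [0.0, 0.0, 0.0]]]
informed_cost = total_cost_bits([hidden, d], biases, informed_weights)
```

Here `flat_cost` is 5 bits (two hidden units plus three input units at 1 bit each), and `informed_cost` is lower because the generative weights now predict the input from the hidden states.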

Because the hidden units are stochastic, an input vector will not always be represented in the same way. In the wake phase, the recognition weights determine a conditional probability distribution, $Q(\alpha \mid d)$, over total representations. Nevertheless, if the recognition weights are fixed, there is a very simple, on-line method of modifying the generative weights to minimize the expected cost $\sum_\alpha Q(\alpha \mid d)\, C(\alpha, d)$ of describing the input vector using a stochastically chosen total representation. After using the recognition weights to choose a total representation, each generative weight is adjusted in proportion to the derivative of equation 3 by using the purely local delta rule:

$$\Delta w_{kj} = \epsilon\, s_k^\alpha \left(s_j^\alpha - p_j^\alpha\right) \qquad (4)$$

where $\epsilon$ is a learning rate. Although the units are driven by the recognition weights, it is only the generative weights that learn in the wake phase. The learning makes each layer of the total representation better at reconstructing the activities in the layer below.
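A wake-phase update between two adjacent layers might be sketched as follows. The function name, the in-place list updates, and the learning rate value are illustrative. The bias update is an assumption as well: it treats the bias as a weight from a unit that is always on, which the text above does not spell out.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def wake_update(s_above, s_below, gen_weights, gen_biases, lr):
    """Apply the delta rule of Eq. 4 to the generative weights into one layer.

    s_above and s_below are binary states already chosen by the recognition
    weights; gen_weights[k][j] connects unit k above to unit j below.
    """
    for j, s_j in enumerate(s_below):
        x = gen_biases[j] + sum(s_k * gen_weights[k][j] for k, s_k in enumerate(s_above))
        p_j = sigmoid(x)               # top-down prediction for unit j (Eq. 1)
        error = s_j - p_j              # purely local: observed state minus prediction
        for k, s_k in enumerate(s_above):
            gen_weights[k][j] += lr * s_k * error
        gen_biases[j] += lr * error    # assumption: bias acts as a weight from an always-on unit

# Hypothetical two-unit layer above a single unit that was observed to be on.
weights = [[0.0], [0.0]]
biases = [0.0]
wake_update([1, 0], [1], weights, biases, lr=0.1)
p_after = sigmoid(biases[0] + weights[0][0])
```

Only the weight from the active unit above changes, since $s_k$ multiplies the update, and after the step the generative model assigns a higher probability to the state that was actually observed.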

It seems obvious that the recognition weights should be adjusted to maximize the probability of picking the $\alpha$ that minimizes $C(\alpha, d)$. But this is incorrect. When there are many alternative ways of describing an input vector it is possible to design a stochastic coding scheme that takes advantage of the entropy across alternative descriptions [1]. The cost is then:

$$C(d) = \sum_\alpha Q(\alpha \mid d)\, C(\alpha, d) - \left(-\sum_\alpha Q(\alpha \mid d) \log Q(\alpha \mid d)\right) \qquad (5)$$

The second term is the entropy of the distribution that the recognition weights assign to the various alternative representations. If, for example, there are two alternative representations each of which costs 4 bits, the combined cost is only 3 bits provided we use the two alternatives with equal probability [4]. It is precisely analogous to the way in which the energies of the alternative states of a physical system are combined to yield the Helmholtz free energy of the system. As in physics, $C(d)$ is minimized when the probabilities of the alternatives are exponentially related to their costs by the Boltzmann distribution (at a temperature of 1):

$$P(\alpha \mid d) = \frac{\exp(-C(\alpha, d))}{\sum_\beta \exp(-C(\beta, d))} \qquad (6)$$
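The two-alternative example and the claim about the Boltzmann distribution can be checked numerically with a short sketch. It assumes costs are measured in bits, so the Boltzmann weights are written as $2^{-C}$ rather than $\exp(-C)$ (the two agree when costs are in nats); the function names and the second set of costs are illustrative.

```python
import math

def combined_cost_bits(q, costs):
    """Eq. 5 in bits: expected cost under Q minus the entropy of Q."""
    expected = sum(q_a * c_a for q_a, c_a in zip(q, costs))
    entropy = -sum(q_a * math.log2(q_a) for q_a in q if q_a > 0.0)
    return expected - entropy

def boltzmann(costs):
    """Eq. 6 for costs in bits: probabilities proportional to 2**(-cost)."""
    weights = [2.0 ** (-c) for c in costs]
    z = sum(weights)
    return [w / z for w in weights]

# Two alternatives that each cost 4 bits, used with equal probability: 3 bits.
shared = combined_cost_bits([0.5, 0.5], [4.0, 4.0])
# Always picking a single alternative gives no entropy bonus: 4 bits.
single = combined_cost_bits([1.0, 0.0], [4.0, 4.0])

# With unequal (hypothetical) costs, the Boltzmann choice beats a uniform choice.
costs = [2.0, 3.0]
best = combined_cost_bits(boltzmann(costs), costs)
uniform = combined_cost_bits([0.5, 0.5], costs)
```

At the Boltzmann distribution the combined cost equals minus the base-2 logarithm of the normalizing sum, which plays the role of the free energy in the analogy above.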
