Crawling the Hidden Web

Sriram Raghavan Hector Garcia-Molina

Computer Science Department

Stanford University

Stanford, CA 94305, USA

{rsram, hector}@cs.stanford.edu

Abstract

Current-day crawlers retrieve content only from

the publicly indexable Web, i.e., the set of Web

pages reachable purely by following hypertext

links, ignoringsearch forms and pagesthat require

authorization or prior registration. In particular,

they ignore the tremendous amount of high qual-

ity content “hidden” behind search forms, in large

searchable electronic databases. In this paper, we

address the problem of designing a crawler capa-

ble of extracting content from this hidden Web.

We introduce a generic operational model of a

hidden Web crawler and describe how this model

is realized in HiWE (Hidden Web Exposer), a

prototype crawler built at Stanford. We intro-

duce a new Layout-based Information Extraction

Technique (LITE) and demonstrate its use in au-

tomatically extracting semantic information from

search forms and response pages. We also present

results from experiments conducted to test and

validate our techniques.

1 Introduction

Crawlers are programs that automatically traverse the Web

graph, retrieving pages and building a local repository of

the portion of the Web that they visit. Depending on the ap-

plication at hand, the pages in the repository are either used

to build search indexes, or are subjected to various forms

of analysis (e.g., text mining). Traditionally, crawlers have

only targeteda portion of the Web called the publicly index-

able Web (PIW) [13]. This refers to the set of pages reach-

able purely by following hypertext links, ignoring search

forms and pages that require authorization or prior regis-

tration.

Permission to copy without fee all or part of this material is granted pro-

vided that the copies are not made or distributed for direct commercial

advantage, the VLDB copyright notice and the title of the publication and

its date appear, and notice is given that copying is by permission of the

Very Large Data Base Endowment. To copy otherwise, or to republish,

requires a fee and/or special permission from the Endowment.

Proceedings of the 27th VLDB Conference,

Roma, Italy, 2001

However, a number of recent studies [2, 13, 14] have ob-

served that a significant fraction of Web content in fact lies

outside the PIW. Specifically, large portions of the Web

are ‘hidden’ behind search forms, in searchable structured

and unstructured databases (called the hidden Web [8] or

deep Web [2]). Pages in the hidden Web are dynamically

generated in response to queries submitted via the search

forms. The hidden Web continues to grow, as organizations

with large amounts of high-quality information (e.g., the

Census Bureau, Patents and Trademarks Office, news me-

dia companies) are placing their content online, providing

Web-accessible search facilities over existing databases.

For instance, the website InvisibleWeb.com lists over

10000 such databases ranging from archives of job listings

to directories, news archives, and electronic catalogs. Re-

cent estimates [2] place the size of the hidden Web (in terms

of generated HTML pages) at around 500 times the size of

the PIW.

In this paper, we address the problem of building a hid-

den Web crawler; one that can crawl and extract content

from these hidden databases. Such a crawler will enable

indexing, analysis, and mining of hidden Web content, akin

to what is currently being achieved with the PIW. In addi-

tion, the content extracted by such crawlers can be used to

categorize and classify the hidden databases.

Challenges. There are significant technical challenges

in designing a hidden Web crawler. First, the crawler

must be designed to automatically parse, process, and in-

teract with form-based search interfaces that are designed

primarily for human consumption. Second, unlike PIW

crawlers which merely submit requests for URLs, hidden

Web crawlers must also provide input in the form of search

queries (i.e., “fill out forms”). This raises the issue of how

best to equip crawlers with the necessary input values for

use in constructing search queries.

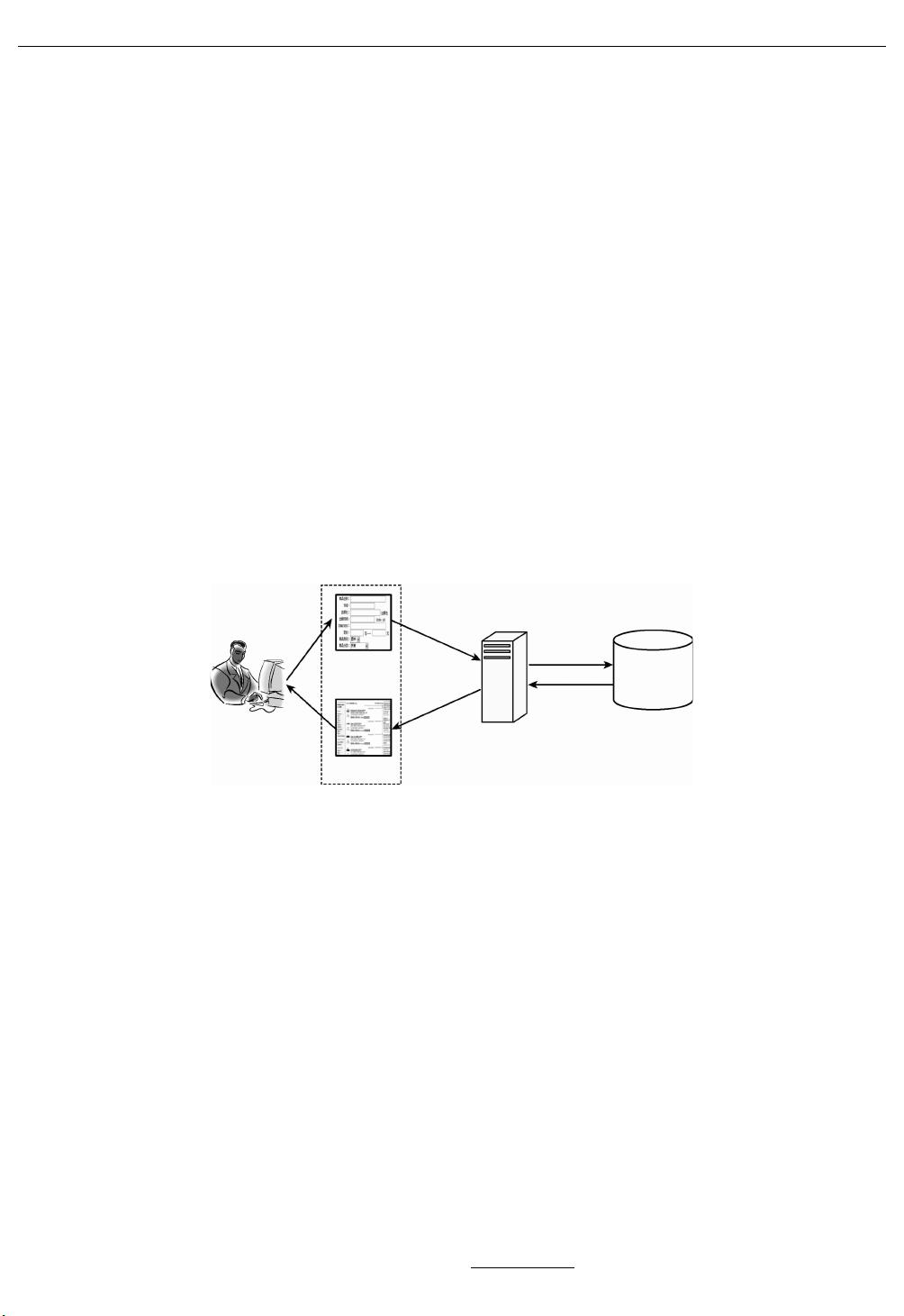

To address these challenges, we adopt a task-specific,

human-assisted approach to crawling the hidden Web.

Task-specificity: We aim to selectively crawl portions

of the hidden Web, extracting content based on the re-

quirements of a particular application or task. For exam-

ple, consider a market analyst who is interested in build-

ing an archive of news articles, reports, press releases, and

white papers pertaining to the semiconductor industry, and