A blog about open source software, graph databases, cloud native architectures, information

theory, artificial intelligence, and machine learning.

Kenny Bastani (http://www.kennybastani.com/)

Blog (http://www.kennybastani.com/) GitHub (http://www.github.com/kbastani)

Twitter (http://www.twitter.com/kennybastani)

LinkedIn (http://www.linkedin.com/in/kennybastani)

Event Sourcing in Microservices Using Spring Cloud and Reactor

Tuesday, April 19, 2016

When building applications in a microservice architecture, managing state becomes a

distributed systems problem. Instead of being able to manage state as transactions inside

the boundaries of a single monolithic application, a microservice must be able to manage

consistency using transactions that are distributed across a network of many different

applications and databases.

In this article we will explore the problems of data consistency and high availability in

microservices. We will start by taking a look at some of the important concepts and themes

behind handling data consistency in distributed systems.

Throughout this article we will use a reference application of an online store

(https://github.com/kbastani/spring-cloud-event-sourcing-example) that is built with

microservices using Spring Boot (http://projects.spring.io/spring-boot/) and Spring Cloud

(http://projects.spring.io/spring-cloud/). We’ll then look at how to use reactive streams

(http://www.reactive-streams.org/) with Project Reactor (https://projectreactor.io/) to

implement event sourcing in a microservice architecture. Finally, we’ll use Docker

(https://www.docker.com/) and Maven to build, run, and orchestrate the multi-container

reference application.

When building microservices, we are forced to start reasoning about state in an architecture

where data is eventually consistent. This is because each microservice exclusively exposes

resources from a database that it owns. Further, each of these databases would be

configured for high availability, with different consistency guarantees for each type of

database.

Eventual Consistency

Eventual consistency (https://en.wikipedia.org/wiki/Eventual_consistency) is a model that is used to describe some operations on data in a distributed system, where state is replicated and stored across multiple nodes of a network. Typically, eventual consistency is talked about when running a database in high availability mode (https://en.wikipedia.org/wiki/High_availability), where replicas are maintained by coordinating writes between multiple nodes of a database cluster. The challenge of the database cluster is that writes must be coordinated to all replicas in the exact order that they were received. When this happens, each replica is considered to be eventually consistent: the state of all replicas is guaranteed to converge towards a consistent state at some point in the future.

When first building microservices, eventual consistency is a frequent point of contention

between developers, DBAs, and architects. The head scratching starts to occur more

frequently when the architecture design discussions begin to turn to the topic of data and

handling state in a distributed system. The head scratching usually boils down to one

question.

How can we guarantee high availability while also guaranteeing data consistency?

To answer this question we need to understand how to best handle transactions in a

distributed system. It just so happens that most distributed databases have this problem

nailed down with a healthy helping of science.

Almost all databases today support some form of high availability clustering. Most database products will provide a list of easy-to-understand guarantees about a system’s consistency model. A first step to achieving safety guarantees for stronger consistency models is to maintain an ordered log of database transactions. This approach is pretty simple in theory. A transaction log is an ordered record of all updates that were transacted by the database. When transactions are replayed in the exact order they were recorded, an exact replica of a database can be generated.

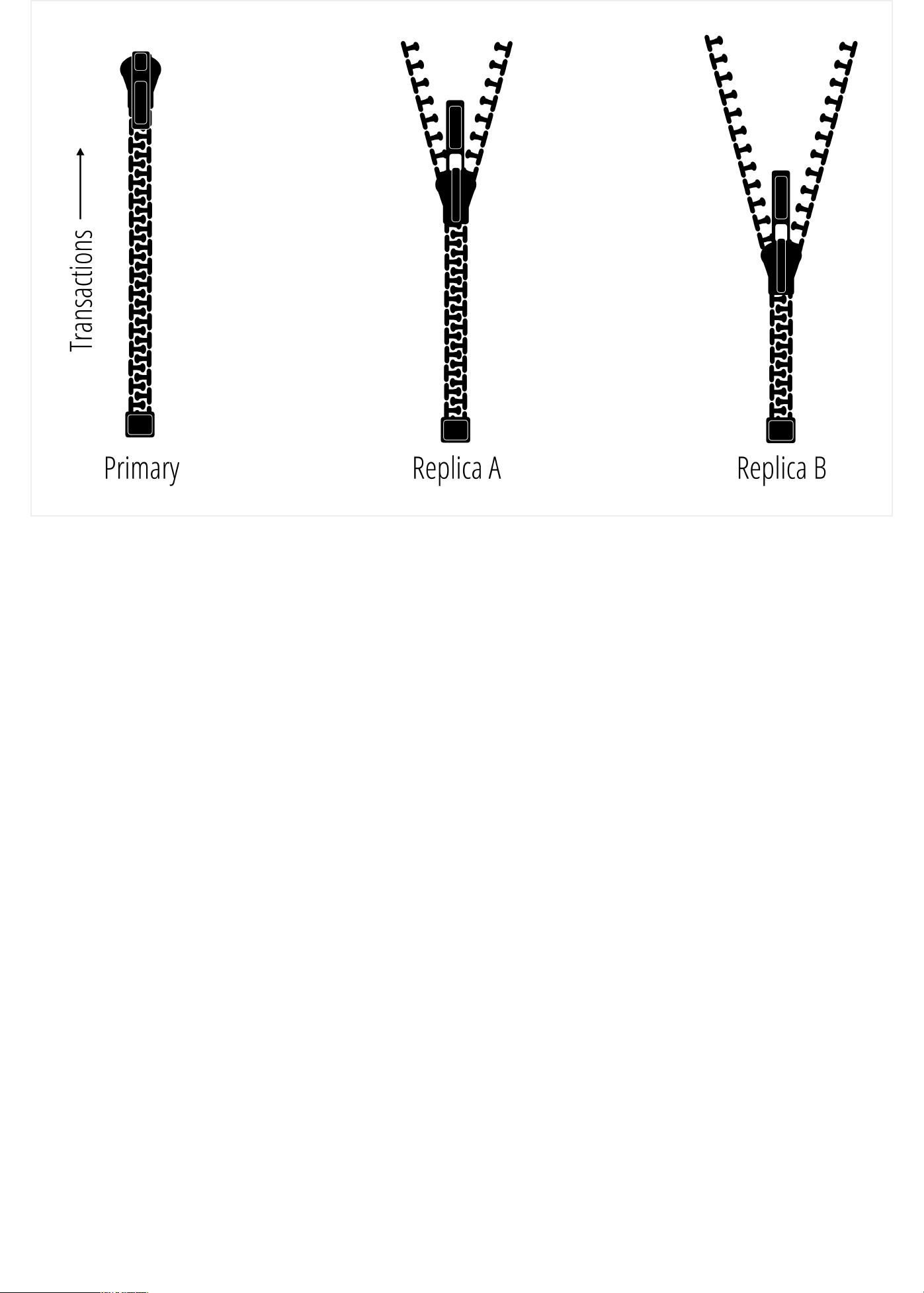

Transaction Logs

The diagram above represents three databases in a cluster that are replicating data using a

shared transaction log. The zipper labeled Primary is the authority in this case and has the

most current view of the database. The difference between the zippers represents the

consistency of each replica, and as the transactions are replayed, each replica converges to

a consistent state with the Primary. The basic idea here is that with eventual consistency, all

zippers will eventually be zipped all the way up.
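To make the zipper picture concrete, here is a minimal sketch of a follower catching up with a primary by replaying a shared, ordered log. The `LogEntry` and `Replica` types and the `stock:` keys are hypothetical names invented for this illustration; this is not how any particular database implements replication.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical types for illustration; not any real database's replication protocol.
final class LogEntry {
    final String key;
    final String value;

    LogEntry(String key, String value) {
        this.key = key;
        this.value = value;
    }
}

final class Replica {
    private final Map<String, String> state = new HashMap<>();
    private int appliedOffset = 0; // how far this replica's "zipper" is closed

    // Apply up to maxEntries not-yet-applied entries, strictly in log order.
    void catchUp(List<LogEntry> log, int maxEntries) {
        int remaining = log.size() - appliedOffset;
        int toApply = Math.min(remaining, maxEntries);
        for (int i = 0; i < toApply; i++) {
            LogEntry entry = log.get(appliedOffset);
            state.put(entry.key, entry.value);
            appliedOffset++;
        }
    }

    // Number of log entries this replica has not applied yet.
    int lag(List<LogEntry> log) {
        return log.size() - appliedOffset;
    }

    Map<String, String> snapshot() {
        return new HashMap<>(state);
    }
}

final class ReplicationDemo {
    public static void main(String[] args) {
        List<LogEntry> log = new ArrayList<>();
        log.add(new LogEntry("stock:widget", "10"));
        log.add(new LogEntry("stock:widget", "9"));
        log.add(new LogEntry("stock:gadget", "4"));

        Replica primary = new Replica();
        Replica follower = new Replica();
        primary.catchUp(log, log.size()); // the primary has applied everything
        follower.catchUp(log, 1);         // the follower is still behind

        System.out.println("lag before: " + follower.lag(log));
        follower.catchUp(log, log.size());
        System.out.println("converged: " + follower.snapshot().equals(primary.snapshot()));
    }
}
```

The only state a replica needs besides its data is the offset of the last entry it applied, which is why replaying in the recorded order is enough to converge.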

The transaction logs that databases use actually have deep roots in history that predate

computing. The fundamental approach for managing an ordered log of transactions was first

used by Venetian merchants as far back as the 15th century. The method that these

Venetian merchants started using was called the double-entry bookkeeping system

(https://en.wikipedia.org/wiki/Double-entry_bookkeeping_system)—which is a system of

bookkeeping that requires two side-by-side entries for each transaction. For each of these

transactions, both a credit and a debit are specified from an origin account to a destination

account. To calculate the balance of an account, any merchant could simply replicate the

current state of all accounts by replaying the events recorded in the ledger. This same

fundamental practice of bookkeeping is still used today, and to some extent it’s a basic

concept for transaction management in modern database systems.
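The ledger idea can be sketched in a few lines. The example below is an assumption-laden toy rather than real accounting software: the `Ledger` and `LedgerEntry` names are made up, and each entry simply moves an amount from an origin account to a destination account. Balances are never stored; they are derived by replaying every entry.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical ledger for illustration only.
final class LedgerEntry {
    final String origin;      // account the amount leaves
    final String destination; // account the amount arrives in
    final long amountCents;

    LedgerEntry(String origin, String destination, long amountCents) {
        this.origin = origin;
        this.destination = destination;
        this.amountCents = amountCents;
    }
}

final class Ledger {
    private final List<LedgerEntry> entries = new ArrayList<>();

    // Record one transaction; both sides are captured in the same entry.
    void record(String origin, String destination, long amountCents) {
        if (amountCents <= 0) {
            throw new IllegalArgumentException("amount must be positive");
        }
        entries.add(new LedgerEntry(origin, destination, amountCents));
    }

    // Derive every account balance by replaying the ledger from the start.
    Map<String, Long> balances() {
        Map<String, Long> balances = new TreeMap<>();
        for (LedgerEntry entry : entries) {
            balances.merge(entry.origin, -entry.amountCents, Long::sum);
            balances.merge(entry.destination, entry.amountCents, Long::sum);
        }
        return balances;
    }
}

final class LedgerDemo {
    public static void main(String[] args) {
        Ledger ledger = new Ledger();
        ledger.record("alice", "bob", 500);
        ledger.record("bob", "carol", 200);
        System.out.println(ledger.balances());
    }
}
```

Because every entry subtracts from one account exactly what it adds to another, the balances always sum to zero, which is the kind of built-in cross-check that made the bookkeeping method useful.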

For databases that claim to have eventual consistency, it’s guaranteed that each node in the

database cluster will converge towards a globally consistent state by simply replaying the

transaction log that resulted from a merge of write transactions across replicas. This claim,

however, is only a guarantee of a database’s liveness properties, ignoring any guarantees

about its safety properties. The difference between safety and liveness here is that with

eventual consistency we can only be guaranteed that all updates will be observed

eventually, with no guarantee about correctness.
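A small sketch shows how replicas can agree and still be wrong. The scenario and the `AccountReplica` type are hypothetical: two replicas of the same account each accept a withdrawal that looks valid against their local state, and the merged log is then replayed on both.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical replica of a single account; illustration only.
final class AccountReplica {
    final List<Integer> localWrites = new ArrayList<>(); // withdrawals accepted here
    int balance;

    AccountReplica(int openingBalance) {
        this.balance = openingBalance;
    }

    // Accept a withdrawal if the locally visible balance covers it.
    boolean withdraw(int amount) {
        if (amount > balance) {
            return false;
        }
        balance -= amount;
        localWrites.add(amount);
        return true;
    }

    // Rebuild state by replaying the merged log from the opening balance.
    void replay(int openingBalance, List<Integer> mergedLog) {
        balance = openingBalance;
        for (int amount : mergedLog) {
            balance -= amount;
        }
    }
}

final class ConvergenceDemo {
    public static void main(String[] args) {
        AccountReplica a = new AccountReplica(100);
        AccountReplica b = new AccountReplica(100);

        // Each replica sees 100 available, so both withdrawals are accepted.
        a.withdraw(80);
        b.withdraw(80);

        // Merge the write logs and replay the result on every replica.
        List<Integer> merged = new ArrayList<>(a.localWrites);
        merged.addAll(b.localWrites);
        a.replay(100, merged);
        b.replay(100, merged);

        System.out.println("replicas agree: " + (a.balance == b.balance));
        System.out.println("balance is non-negative: " + (a.balance >= 0));
    }
}
```

Both replicas end up with the same balance, so the liveness property holds. The balance they agree on is negative, so the rule that an account cannot be overdrawn, which is a safety property, has been broken without either replica ever rejecting a write.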

Most content available today that attempts to educate us on the benefits of microservices

will contain a very sparse explanation behind the saying that "microservices use eventual

consistency"—sometimes referencing CAP theorem

(https://en.wikipedia.org/wiki/CAP_theorem) to bolster any sense of existing confusion. This

tends to be a shallow explanation that leads to more questions than answers. A more

appropriate explanation of eventual consistency in microservices would be the following

statement.

Microservice architectures provide no guarantees about the correctness of your data.

The only consistency guarantee you’ll get with microservices is that all microservices will

eventually agree on something—correct or not.

Cutting through the vast hype that exists on the road to building microservices is not only

important, it is an assured eventuality that all developers must face. This is because when it

comes to building software, a distributed system is a distributed system. A collection of

communicating microservices is no exception. The good news is, there are tried and true

patterns for how to successfully build and maintain complex distributed systems, and that’s

the main theme of the rest of this article.

Event sourcing (http://martinfowler.com/eaaDev/EventSourcing.html) is a method of data

persistence that borrows similar ideas behind a database’s transaction log. For event

sourcing, the unit of a transaction becomes much more granular, using a sequence of

ordered events to represent the state of a domain object stored in a database. Once an

event has been added to the event log, it cannot be removed or re-ordered. Events are

considered to be immutable and the sequence of events that are stored are append-only.

There are multiple benefits for handling state in a microservice architecture using event

sourcing.

- Aggregates can be used to generate the consistent state of any object
- It provides an audit trail that can be replayed to generate the state of an object from any point in time
- It provides the many inputs necessary for analyzing data using event stream processing (https://en.wikipedia.org/wiki/Event_stream_processing)
- It enables the use of compensating transactions (https://en.wikipedia.org/wiki/Compensating_transaction) to roll back events leading to an inconsistent application state
- It also avoids complex synchronization between microservices, paving the way for asynchronous non-blocking operations between microservices
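The first, second, and fourth benefits can be sketched with plain Java before any framework is involved. Everything here is an assumption made for illustration: the `CartEvent`, `CartEventType`, and `CartEventLog` names are hypothetical, a shopping cart is used as the domain object, and "point in time" is approximated by a position in the log rather than a timestamp.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical event model for a shopping cart; illustration only.
enum CartEventType { ADD_ITEM, REMOVE_ITEM }

final class CartEvent {
    final CartEventType type;
    final String productId;
    final int quantity;

    CartEvent(CartEventType type, String productId, int quantity) {
        this.type = type;
        this.productId = productId;
        this.quantity = quantity;
    }
}

final class CartEventLog {
    private final List<CartEvent> events = new ArrayList<>();

    // Append-only: there is deliberately no remove or reorder operation.
    void append(CartEvent event) {
        events.add(event);
    }

    int size() {
        return events.size();
    }

    // Aggregate the first upTo events into the cart state at that position.
    Map<String, Integer> aggregate(int upTo) {
        Map<String, Integer> cart = new TreeMap<>();
        for (CartEvent event : events.subList(0, upTo)) {
            int delta = event.type == CartEventType.ADD_ITEM ? event.quantity : -event.quantity;
            int updated = cart.getOrDefault(event.productId, 0) + delta;
            if (updated > 0) {
                cart.put(event.productId, updated);
            } else {
                cart.remove(event.productId);
            }
        }
        return cart;
    }

    Map<String, Integer> currentState() {
        return aggregate(events.size());
    }
}

final class CartDemo {
    public static void main(String[] args) {
        CartEventLog log = new CartEventLog();
        log.append(new CartEvent(CartEventType.ADD_ITEM, "widget", 2));
        log.append(new CartEvent(CartEventType.ADD_ITEM, "gadget", 1));
        // Compensating event: undo the first add by appending, not by deleting.
        log.append(new CartEvent(CartEventType.REMOVE_ITEM, "widget", 2));

        System.out.println("after two events: " + log.aggregate(2));
        System.out.println("current state:    " + log.currentState());
    }
}
```

The current state is never stored directly in this sketch. It is always a function of the log, which is what makes the earlier state recoverable and lets a mistake be corrected by appending a new event instead of rewriting history.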

Event Sourcing

In this article we’re going to look at a JVM-based implementation of event sourcing that

uses Spring Cloud and Spring Boot. As with most of the articles you’ll find on this blog

(http://www.kennybastani.com/search/label/spring%20cloud), we’re going to take a tour of a

realistic sample application that you can run and deploy. This time I’ve put together an

example of an end-to-end cloud native application using microservices. I’ve even included

an AngularJS (https://angularjs.org/) frontend, thanks to some very clever ground work

(https://spring.io/guides/tutorials/spring-security-and-angular-js/) by Dr. Dave Syer

(https://spring.io/team/dsyer/) on the Spring Engineering team. (Thanks Dr. Syer!)

As I mentioned earlier, this reference application was designed as a cloud native

application. Cloud native applications and architectures are designed and built using a set of

standard methodologies that maximize the utility of a cloud platform. Cloud native

applications use something called the twelve-factor application methodology

(http://12factor.net/). The twelve-factor methodology is a set of practices and useful

guidelines that were compiled by the engineers behind Heroku, which have become a

standard reference for creating applications suitable to be deployed to a cloud platform.

Cloud native application architectures will typically embrace scale-out infrastructure

principles, such as horizontal scaling of applications and databases. Applications also focus

on building in resiliency and auto-healing to prevent downtime. Through the use of a

platform, availability can be automatically adjusted as necessary using a set of policies.

Also, load balancing for services is shifted to the client side and handled between

applications, preventing the need to configure load balancers for new application instances.
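As a rough illustration of what client-side load balancing means, the caller itself picks which instance to talk to from the list of instances it currently knows about. The `RoundRobinChooser` below is a hypothetical stand-in written for this sketch, not the Spring Cloud load balancer API, and the instance addresses are made up.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical client-side chooser; illustration only.
final class RoundRobinChooser {
    private final AtomicInteger counter = new AtomicInteger();

    // Pick the next instance from the list the caller currently knows about.
    String choose(List<String> instances) {
        if (instances.isEmpty()) {
            throw new IllegalStateException("no instances registered");
        }
        // floorMod keeps the index valid even after the counter overflows.
        int index = Math.floorMod(counter.getAndIncrement(), instances.size());
        return instances.get(index);
    }
}

final class LoadBalancingDemo {
    public static void main(String[] args) {
        RoundRobinChooser chooser = new RoundRobinChooser();
        List<String> instances = Arrays.asList("10.0.0.1:8080", "10.0.0.2:8080");

        for (int i = 0; i < 4; i++) {
            System.out.println("request " + i + " -> " + chooser.choose(instances));
        }
    }
}
```

Because the list of instances is passed in on every call, a newly started instance begins receiving traffic as soon as it shows up in that list, with no separate load balancer to reconfigure.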

I’ve taken big leaps from the other microservice reference applications you’ll find here on

this blog. This application was created to demonstrate a fully formed microservice

architecture that implements the core functionality of an online store.

Reference Application

Online Store Web
