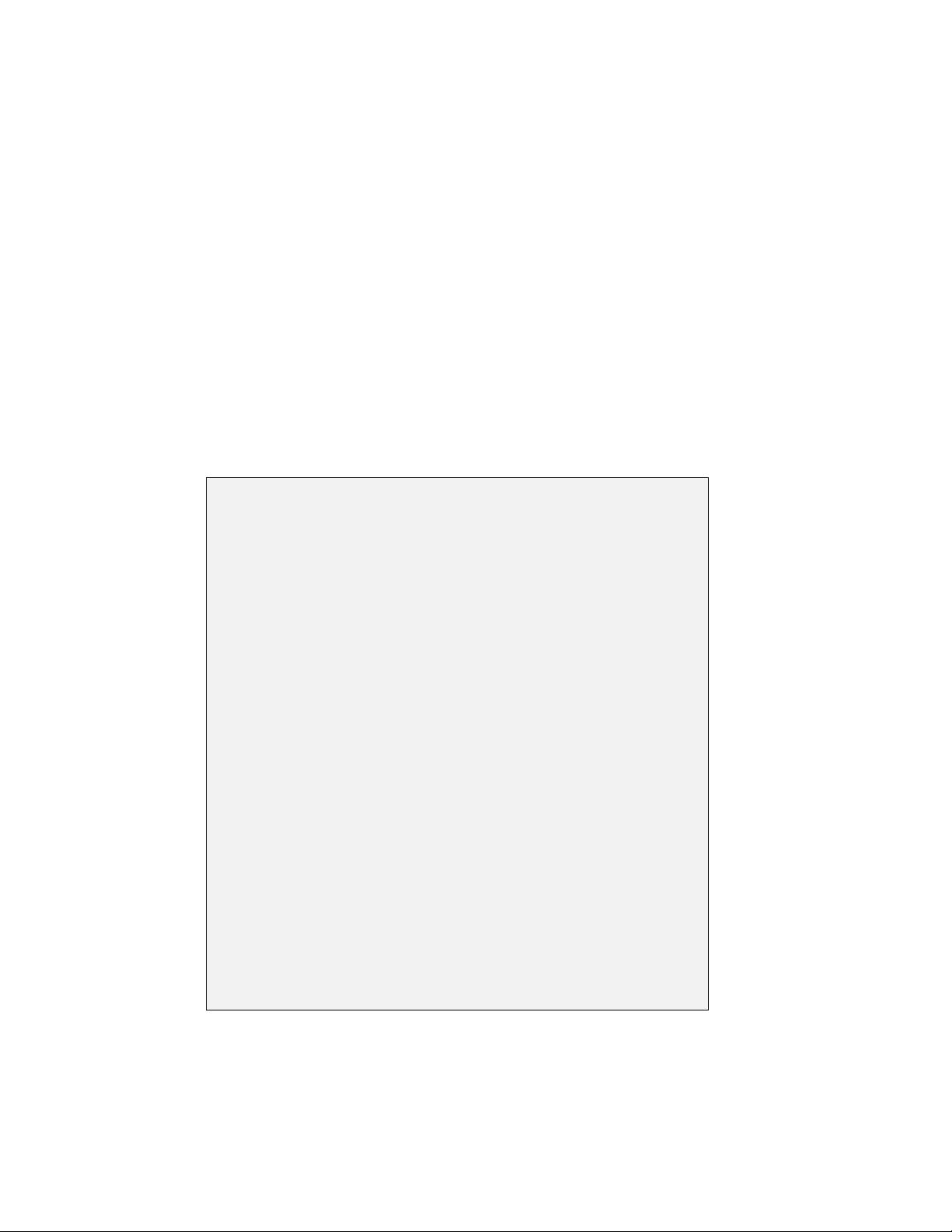

Reinforcement Learning (RL): A Happy Union of AI and

Decision/Control Ideas

Decision/

Control/DP

Principle of

Optimality

Markov Decision

Problems

POMDP

Policy Iteration

Value Iteration

AI/RL

Learning through

Experience

Simulation,

Model-Free Methods

Feature-Based

Representations

A*/Games/

Heuristics

Complementary

Ideas

Late 80s-Early 90s

Historical highlights

Exact DP, optimal control (Bellman, Shannon, 1950s ...)

First major successes: Backgammon programs (Tesauro, 1992, 1996)

Algorithmic progress, analysis, applications, first books (mid 90s ...)

Machine Learning, BIG Data, Robotics, Deep Neural Networks (mid 2000s ...)

AlphaGo and Alphazero (DeepMind, 2016, 2017)

Bertsekas (M.I.T.) Reinforcement Learning 2 / 33

AlphaZero was Trained Using Self-Generated Data

φ

j

¯

f

=

!

1 if j ∈ I

¯

f

0 if j/∈ I

¯

f

d

fi

= 0 if i/∈ I

¯

f

ˆp

f

¯

f

(u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)φ

j

¯

f

ˆg(f,u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)g(i, u, j)

Representative Features Feature Space F

F (j) φ

jf

1

φ

jf

2

φ

jf

3

φ

jf

4

f

1

f

2

f

3

f

4

f

5

f

6

f

7

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

ˆ

F = {f

1

,f

2

,f

3

,f

4

,f

5

,f

6

,f

7

}

Representative Feature States d

fi

f

¯

f with Aggre gation

Current Policy µ Improved Policy ˜µˆµ

T

µ

Φr Φr = ΠT

µ

Φr

Generate “Improved” Policy ˆµ

State Space Feature Space Subspace J = {Φr | s ∈ℜ

s

}

#

s

ℓ=1

F

ℓ

(i, v)r

ℓ

r =(r

1

,...,r

s

)

State iy(i) Ay(i)+bF

s

(i, v) F

1

(i, v) F

2

(i, v) Linear Weighting of

Features

Cost = 2αϵ r

k+1

= arg min

r∈ℜ

s

m

"

t=1

N

t

−1

"

τ =0

$

φ(i

τ,t

)

′

r − c

τ,t

(r

k

)

%

2

µ

ℓ

µ

1 −

µ

ℓ

µ

1

φ

j

¯

f

=

!

1 if j ∈ I

¯

f

0 if j/∈ I

¯

f

d

fi

= 0 if i/∈ I

¯

f

ˆp

f

¯

f

(u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)φ

j

¯

f

ˆg(f,u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)g(i, u, j)

Representative Features Feature Space F

F (j) φ

jf

1

φ

jf

2

φ

jf

3

φ

jf

4

f

1

f

2

f

3

f

4

f

5

f

6

f

7

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

ˆ

F = {f

1

,f

2

,f

3

,f

4

,f

5

,f

6

,f

7

}

Representative Feature States d

fi

f

¯

f with Aggre gation

Current Policy µ Improved Policy ˜µˆµ

T

µ

Φr Φr = ΠT

µ

Φr

Generate “Improved” Policy ˆµ

State Space Feature Space Subspace J = {Φr | s ∈ℜ

s

}

#

s

ℓ=1

F

ℓ

(i, v)r

ℓ

r =(r

1

,...,r

s

)

State iy(i) Ay(i)+bF

s

(i, v) F

1

(i, v) F

2

(i, v) Linear Weighting of

Features

Cost = 2αϵ r

k+1

= arg min

r∈ℜ

s

m

"

t=1

N

t

−1

"

τ =0

$

φ(i

τ,t

)

′

r − c

τ,t

(r

k

)

%

2

µ

ℓ

µ

1 −

µ

ℓ

µ

1

φ

j

¯

f

=

!

1 if j ∈ I

¯

f

0 if j/∈ I

¯

f

d

fi

= 0 if i/∈ I

¯

f

ˆp

f

¯

f

(u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)φ

j

¯

f

ˆg(f,u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)g(i, u, j)

Representative Features Feature Space F

F (j) φ

jf

1

φ

jf

2

φ

jf

3

φ

jf

4

f

1

f

2

f

3

f

4

f

5

f

6

f

7

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

ˆ

F = {f

1

,f

2

,f

3

,f

4

,f

5

,f

6

,f

7

}

Representative Feature States d

fi

f

¯

f with Aggre gation

Current Policy µ Improved Policy ˜µˆµ

T

µ

Φr Φr = ΠT

µ

Φr

Generate “Improved” Policy ˆµ

State Space Feature Space Subspace J = {Φr | s ∈ℜ

s

}

#

s

ℓ=1

F

ℓ

(i, v)r

ℓ

r =(r

1

,...,r

s

)

State iy(i) Ay(i)+bF

s

(i, v) F

1

(i, v) F

2

(i, v) Linear Weighting of

Features

Cost = 2αϵ r

k+1

= arg min

r∈ℜ

s

m

"

t=1

N

t

−1

"

τ =0

$

φ(i

τ,t

)

′

r − c

τ,t

(r

k

)

%

2

µ

ℓ

µ

1 −

µ

ℓ

µ

1

Tail problem approximation u

1

k

u

2

k

u

3

k

u

4

k

u

5

k

Constraint Relaxation

UU

1

U

2

AlphaZero (Google-Deep Mind) Plays much b etter than all computer programs F (i)Cost

ˆ

J

!

F (i)

"

Plays different! Approximate Value Function Player Feature s Mapping

At State x

k

Current state x

0

... MCTS Loo kahead Minimization Cos t-to-go Approximation

Empty schedule LOOKAHEAD MINIMIZATION ROLLOUT States x

k+2

min

u

k

,µ

k+1

,...,µ

k+ℓ−1

E

#

g

k

(x

k

,u

k

,w

k

)+

k+ℓ−1

$

m=k+1

g

k

!

x

m

,µ

m

(x

m

),w

m

"

+

˜

J

k+ℓ

(x

k+ℓ

)

%

Subspace S = {Φr | r ∈ℜ

s

} x

∗

˜x

Rollout: Simulation with fixed policy Parametric approximation at the end Monte Carlo tree search

T

(λ)

(x)=T (

x) x = P

(c)

(x)

x − T (x) y − T (y) ∇f (x)

x − P

(c)

(x) x

k

x

k+1

x

k+2

Slope = −

1

c

T

(λ)

(x)=T (

x) x = P

(c)

(x)

Extrapolation by a Factor of 2 T

(λ)

= P

(c)

·T = T · P

(c)

Extrapolation Formula T

(λ)

= P

(c)

· T = T · P

(c)

Multistep Extrapolation T

(λ)

= P

(c)

· T = T · P

(c)

1

Tail problem approximation u

1

k

u

2

k

u

3

k

u

4

k

u

5

k

Constraint Relaxation

UU

1

U

2

AlphaZero (Google-Deep Mind) Plays much b etter than all computer programs F (i)Cost

ˆ

J

!

F (i)

"

Plays different! Approximate Value Function Player Feature s Mapping

At State x

k

Current state x

0

... MCTS Lookahead Minimization Cost-to-go Approximation

Empty schedule LOOKAHEAD MINIMIZATION ROLLOUT States x

k+2

min

u

k

,µ

k+1

,...,µ

k+ℓ−1

E

#

g

k

(x

k

,u

k

,w

k

)+

k+ℓ−1

$

m=k+1

g

k

!

x

m

,µ

m

(x

m

),w

m

"

+

˜

J

k+ℓ

(x

k+ℓ

)

%

Subspace S = {Φr | r ∈ℜ

s

} x

∗

˜x

Rollout: Simulation with fixed policy Parametric approximation at the end Monte Carlo tree search

T

(λ)

(x)=T (

x) x = P

(c)

(x)

x − T (x) y − T (y) ∇f (x)

x − P

(c)

(x) x

k

x

k+1

x

k+2

Slope = −

1

c

T

(λ)

(x)=T (

x) x = P

(c)

(x)

Extrapolation by a Factor of 2 T

(λ)

= P

(c)

·T = T · P

(c)

Extrapolation Formula T

(λ)

= P

(c)

· T = T · P

(c)

Multistep Extrapolation T

(λ)

= P

(c)

· T = T · P

(c)

1

Tail problem approximation u

1

k

u

2

k

u

3

k

u

4

k

u

5

k

Self-Learning/Policy Iteration Constraint Relaxa tion

UU

1

U

2

AlphaZero (Google-Deep Mind) Plays much b etter than all computer programs F (i)Cost

ˆ

J

!

F (i)

"

Plays different! Approximate Value Function Player Feature s Mapping

At State x

k

Current state x

0

... MCTS Lookahead Minimization Cost-to-go Approximation

Empty schedule LOOKAHEAD MINIMIZATION ROLLOUT States x

k+2

min

u

k

,µ

k+1

,...,µ

k+ℓ−1

E

#

g

k

(x

k

,u

k

,w

k

)+

k+ℓ−1

$

m=k+1

g

k

!

x

m

,µ

m

(x

m

),w

m

"

+

˜

J

k+ℓ

(x

k+ℓ

)

%

Subspace S = {Φr | r ∈ℜ

s

} x

∗

˜x

Rollout: Simulation with fixed policy Parametric approximation at the end Monte Carlo tree search

T

(λ)

(x)=T (

x) x = P

(c)

(x)

x − T (x) y − T (y) ∇f (x)

x − P

(c)

(x) x

k

x

k+1

x

k+2

Slope = −

1

c

T

(λ)

(x)=T (

x) x = P

(c)

(x)

Extrapolation by a Factor of 2 T

(λ)

= P

(c)

·T = T · P

(c)

Extrapolation Formula T

(λ)

= P

(c)

· T = T · P

(c)

Multistep Extrapolation T

(λ)

= P

(c)

· T = T · P

(c)

1

Tail problem approximation u

1

k

u

2

k

u

3

k

u

4

k

u

5

k

Self-Learning/Policy Iteration Constraint Relaxation

Learned from scratch ... with 4 hours of training! Current “Improved”

AlphaZero (Google-Deep Mind) Plays much better tha n all computer programs F (i)Cost

ˆ

J

!

F (i)

"

Plays different! Approximate Value Function Player Fea tures Mapping

At State x

k

Current state x

0

... MCTS Lookahead Minimization Cost-to -go Approximation

Empty schedule LOOKAHEAD MINIMIZATIO N ROLLOUT States x

k+2

min

u

k

,µ

k+1

,...,µ

k+ℓ−1

E

#

g

k

(x

k

,u

k

,w

k

)+

k+ℓ−1

$

m=k+1

g

k

!

x

m

,µ

m

(x

m

),w

m

"

+

˜

J

k+ℓ

(x

k+ℓ

)

%

Subspace S = {Φr | r ∈ℜ

s

} x

∗

˜x

Rollout: Simulation with fixed policy Parametric approximation at the end Monte Carlo tree se arch

T

(λ)

(x)=T (

x) x = P

(c)

(x)

x − T (x) y − T (y) ∇f(x)

x − P

(c)

(x) x

k

x

k+1

x

k+2

Slope = −

1

c

T

(λ)

(x)=T (x) x = P

(c)

(x)

Extrapolation by a Factor of 2 T

(λ)

= P

(c)

·T = T · P

(c)

Extrapolation Formula T

(λ)

= P

(c)

· T = T · P

(c)

Multistep Extrapolation T

(λ)

= P

(c)

· T = T · P

(c)

1

Tail problem approximation u

1

k

u

2

k

u

3

k

u

4

k

u

5

k

Self-Learning/Policy Iteration Constraint Relaxation

Learned from scratch ... with 4 hours of training! Current “Improved”

AlphaZero (Google-Deep Mind) Plays much better tha n all computer programs F (i)Cost

ˆ

J

!

F (i)

"

Plays different! Approximate Value Function Player Fea tures Mapping

At State x

k

Current state x

0

... MCTS Lookahead Minimization Cost-to -go Approximation

Empty schedule LOOKAHEAD MINIMIZATIO N ROLLOUT States x

k+2

min

u

k

,µ

k+1

,...,µ

k+ℓ−1

E

#

g

k

(x

k

,u

k

,w

k

)+

k+ℓ−1

$

m=k+1

g

k

!

x

m

,µ

m

(x

m

),w

m

"

+

˜

J

k+ℓ

(x

k+ℓ

)

%

Subspace S = {Φr | r ∈ℜ

s

} x

∗

˜x

Rollout: Simulation with fixed policy Parametric approximation at the end Monte Carlo tree sear ch

T

(λ)

(x)=T (

x) x = P

(c)

(x)

x − T (x) y − T (y) ∇f(x)

x − P

(c)

(x) x

k

x

k+1

x

k+2

Slope = −

1

c

T

(λ)

(x)=T (x) x = P

(c)

(x)

Extrapolation by a Factor of 2 T

(λ)

= P

(c)

·T = T · P

(c)

Extrapolation Formula T

(λ)

= P

(c)

· T = T · P

(c)

Multistep Extrapolation T

(λ)

= P

(c)

· T = T · P

(c)

1

Position “values” Move “probabilities”

Cho ose the Aggregation and Disaggregation Probabilities

Use a Neural Network or Other Scheme Form the Aggregate States

I

1

I

q

Use a Neur al Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Feat ur es F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate P rob l em

Has been used to learn other games (Go, Shogi)

Aggregate costs r

⇤

`

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation i n a space of basis functions Plays much better than

all chess programs

Cost ↵

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evalu at e Approximate Cost

˜

J

µ

F (i)

of

F (i)=

F

1

(i),...,F

s

(i)

: Vector of Features of i

˜

J

µ

F (i)

: Feature-based architecture Final Features

If

˜

J

µ

F (i)

=

P

s

`=1

F

`

(i)r

`

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J

ˆ

J

p

with J( x

k

) ! 0 for all p-stable ⇡

W

p

0

: Functions J

ˆ

J

p

0

with J( x

k

) ! 0 for all p

0

-stable ⇡

W

+

=

J | J J

+

,J(t)=0

VI converges to J

+

from within W

+

Cost: g(x

k

,u

k

) 0 VI converges to

ˆ

J

p

from within W

p

W

p

0

: Lyapounov region for p

0

W

p

0

VI converges to

ˆ

J

0

p

from within

W

p

0

W

+

: Lyapounov region for p(x) ⌘ 1, x 6= t W

+

1

φ

j

¯

f

=

!

1 if j ∈ I

¯

f

0 if j/∈ I

¯

f

d

fi

= 0 if i/∈ I

¯

f

ˆp

f

¯

f

(u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)φ

j

¯

f

ˆg(f,u)=

n

"

i=1

d

fi

n

"

j=1

p

ij

(u)g(i, u, j)

Representative Features Feature Space F

F (j) φ

jf

1

φ

jf

2

φ

jf

3

φ

jf

4

f

1

f

2

f

3

f

4

f

5

f

6

f

7

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

Neural Network Features Approximate Cost

˜

J

µ

Policy Improvement

ˆ

F = {f

1

,f

2

,f

3

,f

4

,f

5

,f

6

,f

7

}

Representative Feature States d

fi

f

¯

f with Aggre gation

Current Policy µ Improved Policy ˜µˆµ

T

µ

Φr Φr = ΠT

µ

Φr

Generate “Improved” Policy ˆµ

State Space Feature Space Subspace J = {Φr | s ∈ℜ

s

}

#

s

ℓ=1

F

ℓ

(i, v)r

ℓ

r =(r

1

,...,r

s

)

State iy(i) Ay(i)+bF

s

(i, v) F

1

(i, v) F

2

(i, v) Linear Weighting of

Features

Cost = 2αϵ r

k+1

= arg min

r∈ℜ

s

m

"

t=1

N

t

−1

"

τ =0

$

φ(i

τ,t

)

′

r − c

τ,t

(r

k

)

%

2

µ

ℓ

µ

1 −

µ

ℓ

µ

1

Neural Net Policy Evaluation Improvement of Cur rent Policy µ by

Lookahead Min

States x

k+1

States x

k+2

x

k

Heuristic/ Suboptimal Base Policy

Approximation

˜

J

Adaptive Simulation Terminal cost approximation

Heuristic Policy

Simulation with

Cost

˜

J

µ

!

F (i),r

"

of i ≈ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

!

F (i),r

"

: Feature- based parametric architecture

r: Vector of weights

Position “values” Move “probabilities”

Choose the Aggre gation and Disaggreg ation Pr obabilities

Use a Neural Net work or Other Sc heme Form the Aggregate States

I

1

I

q

Use a Neural Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Features F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate Problem

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

∗

ℓ

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation in a space of bas is functions Plays much better than

all chess prog rams

Cost α

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evaluate Approximate Cost

˜

J

µ

!

F (i)

"

of

F (i)=

!

F

1

(i),...,F

s

(i)

"

:VectorofFeaturesofi

˜

J

µ

!

F (i)

"

: Feature- based architecture

Final Features

If

˜

J

µ

!

F (i),r

"

=

#

s

ℓ=1

F

ℓ

(i)r

ℓ

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J ≥

ˆ

J

p

with J(x

k

) → 0 for all p-stable π

W

p

′

: Functions J ≥

ˆ

J

p

′

with J(x

k

) → 0 for all p

′

-stable π

1

Neural Network Policy Evalua tion Improvement of Current Policy µ

by Lookahead Min

States x

k+1

States x

k+2

x

k

Heuristic/ Suboptimal Base Policy

Approximation

˜

J

Adaptive Simulation Terminal cost approximation

Heuristic Policy

Simulation with

Cost

˜

J

µ

!

F (i),r

"

of i ≈ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

!

F (i),r

"

: Feature- based parametric architecture

r: Vector of weights

Position “values” Move “probabilities”

Choose the Aggre gation and Disaggreg ation Pr obabilities

Use a Neural Net work or Other Sc heme Form the Aggregate States

I

1

I

q

Use a Neural Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Features F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate Problem

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

∗

ℓ

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation in a space of bas is functions Plays much better than

all chess prog rams

Cost α

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evaluate Approximate Cost

˜

J

µ

!

F (i)

"

of

F (i)=

!

F

1

(i),...,F

s

(i)

"

:VectorofFeaturesofi

˜

J

µ

!

F (i)

"

: Feature- based architecture

Final Features

If

˜

J

µ

!

F (i),r

"

=

#

s

ℓ=1

F

ℓ

(i)r

ℓ

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J ≥

ˆ

J

p

with J(x

k

) → 0 for all p-stable π

W

p

′

: Functions J ≥

ˆ

J

p

′

with J(x

k

) → 0 for all p

′

-stable π

1

Neural Network Policy Evalua tion Improvement of Current Policy µ

by Lookahead Min

States x

k+1

States x

k+2

x

k

Heuristic/ Suboptimal Base Policy

Approximation

˜

J

Adaptive Simulation Terminal cost approximation

Heuristic Policy

Simulation with

Cost

˜

J

µ

!

F (i),r

"

of i ≈ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

!

F (i),r

"

: Feature- based parametric architecture

r: Vector of weights

Position “values” Move “probabilities”

Choose the Aggre gation and Disaggreg ation Pr obabilities

Use a Neural Net work or Other Sc heme Form the Aggregate States

I

1

I

q

Use a Neural Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Features F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate Problem

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

∗

ℓ

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation in a space of bas is functions Plays much better than

all chess prog rams

Cost α

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evaluate Approximate Cost

˜

J

µ

!

F (i)

"

of

F (i)=

!

F

1

(i),...,F

s

(i)

"

:VectorofFeaturesofi

˜

J

µ

!

F (i)

"

: Feature- based architecture

Final Features

If

˜

J

µ

!

F (i),r

"

=

#

s

ℓ=1

F

ℓ

(i)r

ℓ

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J ≥

ˆ

J

p

with J(x

k

) → 0 for all p-stable π

W

p

′

: Functions J ≥

ˆ

J

p

′

with J(x

k

) → 0 for all p

′

-stable π

1

Neural Network Policy Evalua tion Improvement of Current Policy µ

by Lookahead Min

States x

k+1

States x

k+2

x

k

Heuristic/ Suboptimal Base Policy

Approximation

˜

J

Adaptive Simulation Terminal cost approximation

Heuristic Policy

Simulation with

Cost

˜

J

µ

!

F (i),r

"

of i ≈ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

!

F (i),r

"

: Feature- based parametric architecture

r: Vector of weights

Position “values” Move “probabilities”

Choose the Aggre gation and Disaggreg ation Pr obabilities

Use a Neural Net work or Other Sc heme Form the Aggregate States

I

1

I

q

Use a Neural Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Features F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate Problem

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

∗

ℓ

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation in a space of bas is functions Plays much better than

all chess prog rams

Cost α

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evaluate Approximate Cost

˜

J

µ

!

F (i)

"

of

F (i)=

!

F

1

(i),...,F

s

(i)

"

:VectorofFeaturesofi

˜

J

µ

!

F (i)

"

: Feature- based architecture

Final Features

If

˜

J

µ

!

F (i),r

"

=

#

s

ℓ=1

F

ℓ

(i)r

ℓ

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J ≥

ˆ

J

p

with J(x

k

) → 0 for all p-stable π

W

p

′

: Functions J ≥

ˆ

J

p

′

with J(x

k

) → 0 for all p

′

-stable π

1

Neural Network Policy Evalua tion Improvement of Current Policy µ

by Lookahead Min

States x

k+1

States x

k+2

x

k

Heuristic/ Suboptimal Base Policy

Approximation

˜

J

Adaptive Simulation Terminal cost approximation

Heuristic Policy

Simulation with

Cost

˜

J

µ

!

F (i),r

"

of i ≈ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

!

F (i),r

"

: Feature- based parametric architecture

r: Vector of weights

Position “values” Move “probabilities”

Choose the Aggre gation and Disaggreg ation Pr obabilities

Use a Neural Net work or Other Sc heme Form the Aggregate States

I

1

I

q

Use a Neural Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Features F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate Problem

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

∗

ℓ

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation in a space of bas is functions Plays much better than

all chess prog rams

Cost α

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evaluate Approximate Cost

˜

J

µ

!

F (i)

"

of

F (i)=

!

F

1

(i),...,F

s

(i)

"

:VectorofFeaturesofi

˜

J

µ

!

F (i)

"

: Feature- based architecture

Final Features

If

˜

J

µ

!

F (i),r

"

=

#

s

ℓ=1

F

ℓ

(i)r

ℓ

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J ≥

ˆ

J

p

with J(x

k

) → 0 for all p-stable π

W

p

′

: Functions J ≥

ˆ

J

p

′

with J(x

k

) → 0 for all p

′

-stable π

1

Corrected

˜

J

˜

JJ

*

Cost

˜

J

µ

F (i),r

of i ⇡ J

µ

(i) J

µ

(i) Feature Map

˜

J

µ

F (i),r

: Feature-based parametric architecture State

r: Vector of weights Original States Aggregate States

Position “value” Move “probabilities”

Cho ose the Aggregation and Disaggregation Probabilities

Use a Neural Network or Other Scheme Form the Aggregate States

I

1

I

q

Use a Neur al Scheme or Other Scheme

Possibly Include “Handcrafted” Features

Generate Feat ur es F (i) of Formulate Aggregate Problem

Generate “Impoved” Policy ˆµ by “Solving” the Aggregate P rob l em

Same algorithm learned multiple games (Go, Shogi)

Aggregate costs r

⇤

`

Cost function

˜

J

0

(i) Cost function

˜

J

1

(j)

Approximation i n a space of basis functions Plays much better than

all chess programs

Cost ↵

k

g(i, u, j) Transition probabilities p

ij

(u) W

p

Controlled Markov Chain Evaluate Approximate Cost

˜

J

µ

of

Evalu at e Approximate Cost

˜

J

µ

F (i)

of

F (i)=

F

1

(i),...,F

s

(i)

: Vector of Features of i

˜

J

µ

F (i)

: Feature-based architecture Final Features

If

˜

J

µ

F (i),r

=

P

s

`=1

F

`

(i)r

`

it is a linear feature-based architecture

(r

1

,...,r

s

: Scalar weights)

W

p

: Functions J

ˆ

J

p

with J( x

k

) ! 0 for all p-stable ⇡

W

p

0

: Functions J

ˆ

J

p

0

with J( x

k

) ! 0 for all p

0

-stable ⇡

W

+

=

J | J J

+

,J(t)=0

VI converges to J

+

from within W

+

Cost: g(x

k

,u

k

) 0 VI converges to

ˆ

J

p

from within W

p

1

The “current" player plays games that are used to “train" an “improved" player

At a given position, the “move probabilities" and the “value" of a position are

approximated by a deep neural net (NN)

Successive NNs are trained using self-generated data and a form of regression

A form of randomized policy improvement Monte-Carlo Tree Search (MCTS)

generates move probabilities

AlphaZero bears similarity to earlier works, e.g., TD-Gammon (Tesauro,1992), but

is more complicated because of the MCTS and the deep NN

The success of AlphaZero is due to a skillful implenentation/integration of known

ideas, and awesome computational power

Bertsekas (M.I.T.) Reinforcement Learning 4 / 33

Approximate DP/RL Methodology is now Ambitious and Universal

Exact DP applies (in principle) to a very broad range of optimization problems

Deterministic <—-> Stochastic

Combinatorial optimization <—-> Optimal control w/ infinite state/control spaces

One decision maker <—-> Two player games

... BUT is plagued by the curse of dimensionality and need for a math model

Approximate DP/RL overcomes the difficulties of exact DP by:

Approximation (use neural nets and other architectures to reduce dimension)

Simulation (use a computer model in place of a math model)

State of the art:

Broadly applicable methodology: Can address broad range of challenging

problems. Deterministic-stochastic-dynamic, discrete-continuous, games, etc

There are no methods that are guaranteed to work for all or even most problems

There are enough methods to try with a reasonable chance of success for most

types of optimization problems

Role of the theory: Guide the art, delineate the sound ideas

Bertsekas (M.I.T.) Reinforcement Learning 5 / 33