
Representation Learning: A Review and New Perspectives


Representation Learning: A Review and New Perspectives, a paper by Bengio.


Representation Learning: A Review and New Perspectives

Yoshua Bengio†, Aaron Courville, and Pascal Vincent†

Department of computer science and operations research, U. Montreal

† also, Canadian Institute for Advanced Research (CIFAR)

Abstract—The success of machine learning algorithms generally depends on data representation, and we hypothesize that this is because different representations can entangle and hide more or less the different explanatory factors of variation behind the data. Although specific domain knowledge can be used to help design representations, learning with generic priors can also be used, and the quest for AI is motivating the design of more powerful representation-learning algorithms implementing such priors. This paper reviews recent work in the area of unsupervised feature learning and deep learning, covering advances in probabilistic models, auto-encoders, manifold learning, and deep networks. This motivates longer-term unanswered questions about the appropriate objectives for learning good representations, for computing representations (i.e., inference), and the geometrical connections between representation learning, density estimation and manifold learning.

Index Terms—Deep learning, representation learning, feature learning, unsupervised learning, Boltzmann Machine, autoencoder, neural nets

1 INTRODUCTION

The performance of machine learning methods is heavily dependent on the choice of data representation (or features) on which they are applied. For that reason, much of the actual effort in deploying machine learning algorithms goes into the design of preprocessing pipelines and data transformations that result in a representation of the data that can support effective machine learning. Such feature engineering is important but labor-intensive and highlights the weakness of current learning algorithms: their inability to extract and organize the discriminative information from the data. Feature engineering is a way to take advantage of human ingenuity and prior knowledge to compensate for that weakness. In order to expand the scope and ease of applicability of machine learning, it would be highly desirable to make learning algorithms less dependent on feature engineering, so that novel applications could be constructed faster, and more importantly, to make progress towards Artificial Intelligence (AI). An AI must fundamentally understand the world around us, and we argue that this can only be achieved if it can learn to identify and disentangle the underlying explanatory factors hidden in the observed milieu of low-level sensory data.

This paper is about representation learning, i.e., learning representations of the data that make it easier to extract useful information when building classifiers or other predictors. In the case of probabilistic models, a good representation is often one that captures the posterior distribution of the underlying explanatory factors for the observed input. A good representation is also one that is useful as input to a supervised predictor. Among the various ways of learning representations, this paper focuses on deep learning methods: those that are formed by the composition of multiple non-linear transformations, with the goal of yielding more abstract – and ultimately more useful – representations. Here we survey this rapidly developing area with special emphasis on recent progress. We consider some of the fundamental questions that have been driving research in this area. Specifically, what makes one representation better than another? Given an example, how should we compute its representation, i.e. perform feature extraction? Also, what are appropriate objectives for learning good representations?

2 WHY SHOULD WE CARE ABOUT LEARNING REPRESENTATIONS?

Representation learning has become a field in itself in the machine learning community, with regular workshops at the leading conferences such as NIPS and ICML, and a new conference dedicated to it, ICLR¹, sometimes under the header of Deep Learning or Feature Learning. Although depth is an important part of the story, many other priors are interesting and can be conveniently captured when the problem is cast as one of learning a representation, as discussed in the next section. The rapid increase in scientific activity on representation learning has been accompanied and nourished by a remarkable string of empirical successes both in academia and in industry. Below, we briefly highlight some of these high points.

Speech Recognition and Signal Processing

Speech was one of the early applications of neural networks, in particular convolutional (or time-delay) neural networks².

The recent revival of interest in neural networks, deep learning, and representation learning has had a strong impact in the area of speech recognition, with breakthrough results (Dahl et al., 2010; Deng et al., 2010; Seide et al., 2011a; Mohamed et al., 2012; Dahl et al., 2012; Hinton et al., 2012) obtained by several academics as well as researchers at industrial labs bringing these algorithms to a larger scale and into products. For example, in 2012 Microsoft released a new version of their MAVIS (Microsoft Audio Video Indexing Service) speech system based on deep learning (Seide et al., 2011a). These authors managed to reduce the word error rate on four major benchmarks by about 30% relative (e.g. from 27.4% to 18.5% on RT03S) compared to state-of-the-art models based on Gaussian mixtures for the acoustic modeling and trained on the same amount of data (309 hours of speech). The relative improvement in error rate obtained by Dahl et al. (2012) on a smaller large-vocabulary speech recognition benchmark (Bing mobile business search dataset, with 40 hours of speech) is between 16% and 23%.

1. International Conference on Learning Representations

2. See Bengio (1993) for a review of early work in this area.

arXiv:1206.5538v3 [cs.LG] 23 Apr 2014
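The "about 30%" figure is a relative reduction, not a drop of 30 percentage points. A minimal Python sketch of that arithmetic, using only the RT03S error rates quoted above; the helper name `relative_reduction` is ours, chosen for illustration.

```python
def relative_reduction(baseline: float, new: float) -> float:
    """Fraction of the baseline error that was removed: (baseline - new) / baseline."""
    if baseline <= 0:
        raise ValueError("baseline error rate must be positive")
    return (baseline - new) / baseline


# RT03S word error rates quoted in the text, in percent.
baseline_wer, deep_wer = 27.4, 18.5
absolute_drop = baseline_wer - deep_wer
relative_drop = relative_reduction(baseline_wer, deep_wer)
print(f"RT03S: {absolute_drop:.1f} points absolute, {relative_drop:.1%} relative")
```

This prints 8.9 points absolute and 32.5% relative, which is consistent with the rounded "about 30%".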

Representation-learning algorithms have also been applied to music, substantially beating the state-of-the-art in polyphonic transcription (Boulanger-Lewandowski et al., 2012), with relative error improvement between 5% and 30% on a standard benchmark of 4 datasets. Deep learning also helped to win MIREX (Music Information Retrieval) competitions, e.g. in 2011 on audio tagging (Hamel et al., 2011).

Object Recognition

The beginnings of deep learning in 2006 focused on the MNIST digit image classification problem (Hinton et al., 2006; Bengio et al., 2007), breaking the supremacy of SVMs (1.4% error) on this dataset³. The latest records are still held by deep networks: Ciresan et al. (2012) currently claims the title of state-of-the-art for the unconstrained version of the task (e.g., using a convolutional architecture), with 0.27% error, and Rifai et al. (2011c) is state-of-the-art for the knowledge-free version of MNIST, with 0.81% error.

In the last few years, deep learning has moved from digits to object recognition in natural images, and the latest breakthrough has been achieved on the ImageNet dataset⁴, bringing down the state-of-the-art error rate from 26.1% to 15.3% (Krizhevsky et al., 2012).

Natural Language Processing

Besides speech recognition, there are many other Natural Language Processing (NLP) applications of representation learning. Distributed representations for symbolic data were introduced by Hinton (1986), and first developed in the context of statistical language modeling by Bengio et al. (2003) in so-called neural net language models (Bengio, 2008). They are all based on learning a distributed representation for each word, called a word embedding. Adding a convolutional architecture, Collobert et al. (2011) developed the SENNA system⁵ that shares representations across the tasks of language modeling, part-of-speech tagging, chunking, named entity recognition, semantic role labeling and syntactic parsing. SENNA approaches or surpasses the state-of-the-art on these tasks but is simpler and much faster than traditional predictors. Learning word embeddings can be combined with learning image representations in a way that allows text and images to be associated. This approach has been used successfully to build Google's image search, exploiting huge quantities of data to map images and queries in the same space (Weston et al., 2010), and it has recently been extended to deeper multi-modal representations (Srivastava and Salakhutdinov, 2012).

3. For the knowledge-free version of the task, where no image-specific prior is used, such as image deformations or convolutions.

4. The 1000-class ImageNet benchmark, whose results are detailed here: http://www.image-net.org/challenges/LSVRC/2012/results.html

5. Downloadable from http://ml.nec-labs.com/senna/
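As a data structure, a word embedding is a lookup table holding one dense vector per vocabulary word. The following is a minimal sketch with a hypothetical four-word vocabulary and random, untrained vectors. In a neural language model these rows would be learned together with the rest of the network, and only after training would `cosine` similarity between rows be expected to reflect word similarity. The class and function names are ours.

```python
import math
import random
from typing import List, Sequence


class EmbeddingTable:
    """Word -> dense vector lookup table (one row per vocabulary word).

    Rows are randomly initialized here; training them is out of scope.
    """

    def __init__(self, vocab: Sequence[str], dim: int, seed: int = 0):
        rng = random.Random(seed)
        self.index = {word: i for i, word in enumerate(vocab)}
        self.vectors = [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in vocab]

    def __getitem__(self, word: str) -> List[float]:
        return self.vectors[self.index[word]]

    def embed_sequence(self, words: Sequence[str]) -> List[List[float]]:
        """Map a token sequence to the list of its embedding vectors."""
        return [self[w] for w in words]


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


table = EmbeddingTable(["the", "cat", "dog", "sat"], dim=8)
seq = table.embed_sequence(["the", "cat", "sat"])
print(len(seq), len(seq[0]))  # 3 8
```

Every word uses all 8 coordinates, so the code is distributed rather than one-hot.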

The neural net language model was also improved by adding recurrence to the hidden layers (Mikolov et al., 2011), allowing it to beat the state-of-the-art (smoothed n-gram models) not only in terms of perplexity (exponential of the average negative log-likelihood of predicting the right next word, going down from 140 to 102) but also in terms of word error rate in speech recognition (since the language model is an important component of a speech recognition system), decreasing it from 17.2% (KN5 baseline) or 16.9% (discriminative language model) to 14.4% on the Wall Street Journal benchmark task. Similar models have been applied in statistical machine translation (Schwenk et al., 2012; Le et al., 2013), improving perplexity and BLEU scores. Recursive auto-encoders (which generalize recurrent networks) have also been used to beat the state-of-the-art in full sentence paraphrase detection (Socher et al., 2011a), almost doubling the F1 score for paraphrase detection. Representation learning can also be used to perform word sense disambiguation (Bordes et al., 2012), bringing up the accuracy from 67.8% to 70.2% on the subset of Senseval-3 where the system could be applied (with subject-verb-object sentences). Finally, it has also been successfully used to surpass the state-of-the-art in sentiment analysis (Glorot et al., 2011b; Socher et al., 2011b).
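The parenthetical definition of perplexity translates directly into code. Below is a minimal sketch; the function name is ours. It assumes natural logarithms, which does not affect the result as long as the exponential uses the matching base.

```python
import math
from typing import Sequence


def perplexity(next_word_probs: Sequence[float]) -> float:
    """exp of the average negative log-likelihood that the model assigned
    to the word that actually came next, over a test sequence."""
    if not next_word_probs:
        raise ValueError("need at least one prediction")
    avg_nll = -sum(math.log(p) for p in next_word_probs) / len(next_word_probs)
    return math.exp(avg_nll)


# A model that always gives the correct next word probability 1/140.
print(perplexity([1 / 140] * 50))
```

A model that assigns probability 1/V to every correct word has perplexity V. Read this way, going from 140 to 102 means the model is, on average, about as uncertain as choosing among 102 equally likely words instead of 140.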

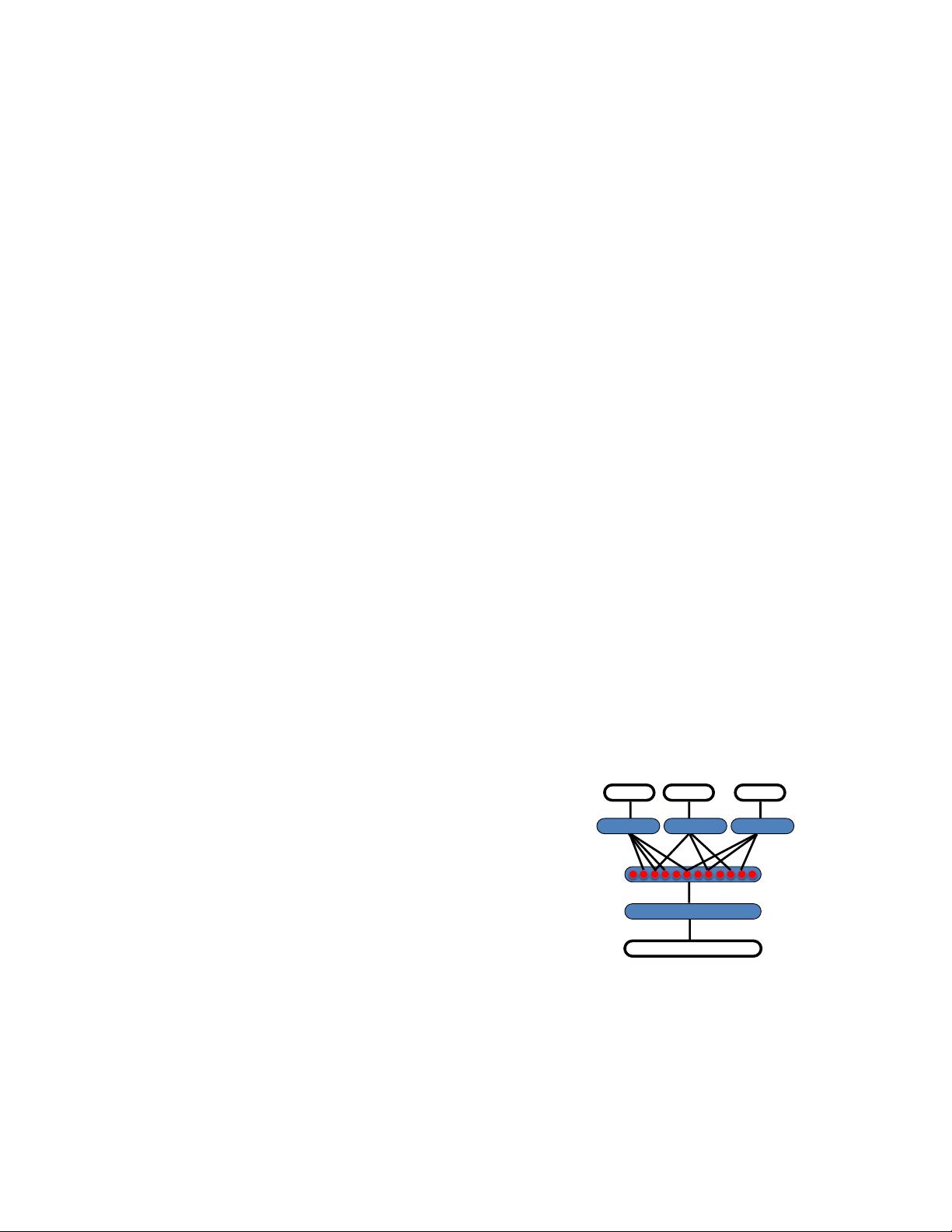

Multi-Task and Transfer Learning, Domain Adaptation

Transfer learning is the ability of a learning algorithm to exploit commonalities between different learning tasks in order to share statistical strength, and transfer knowledge across tasks. As discussed below, we hypothesize that representation learning algorithms have an advantage for such tasks because they learn representations that capture underlying factors, a subset of which may be relevant for each particular task, as illustrated in Figure 1. This hypothesis seems confirmed by a number of empirical results showing the strengths of representation learning algorithms in transfer learning scenarios.

[Figure 1 diagram: raw input x; a middle layer of shared subsets of factors; task 1, task 2 and task 3 (also labeled Task A, B, C) with outputs y1, y2, y3.]

Fig. 1. Illustration of representation-learning discovering explanatory factors (middle hidden layer, in red), some explaining the input (semi-supervised setting), and some explaining target for each task. Because these subsets overlap, sharing of statistical strength helps generalization.
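A minimal sketch of the architecture drawn in Fig. 1, assuming a single shared ReLU layer and one linear head per task. The class name, layer sizes, unit subsets and random weights are all hypothetical; the figure does not specify them. Training is omitted. In practice one would sum the task losses so that gradients from every task update the shared weights `W`, and that is where statistical strength gets shared.

```python
import random
from typing import Dict, List, Sequence


def relu(z: float) -> float:
    return z if z > 0 else 0.0


class SharedFactorsModel:
    """Shared hidden layer h = relu(W x + b) read by one linear head per task.

    Each head only sees the hidden units listed for it, so the tasks depend
    on overlapping subsets of the shared factors, as in Fig. 1.
    """

    def __init__(self, n_in: int, n_hidden: int,
                 head_units: Dict[str, List[int]], seed: int = 0):
        rng = random.Random(seed)
        self.W = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b = [0.0] * n_hidden
        self.head_units = head_units
        self.head_w = {t: [rng.gauss(0.0, 0.5) for _ in units]
                       for t, units in head_units.items()}

    def shared(self, x: Sequence[float]) -> List[float]:
        """The representation shared by all tasks."""
        return [relu(sum(w * xi for w, xi in zip(row, x)) + bi)
                for row, bi in zip(self.W, self.b)]

    def predict(self, x: Sequence[float]) -> Dict[str, float]:
        h = self.shared(x)  # computed once, re-used by every task head
        return {t: sum(w * h[j] for w, j in zip(self.head_w[t], units))
                for t, units in self.head_units.items()}


model = SharedFactorsModel(
    n_in=4, n_hidden=6,
    head_units={"task1": [0, 1, 2], "task2": [2, 3], "task3": [3, 4, 5]},
)
print(model.predict([1.0, 0.0, -1.0, 0.5]))
```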

Most impressive are the two transfer learning challenges held in 2011 and won by representation learning algorithms. First, the Transfer Learning Challenge, presented at an ICML 2011 workshop of the same name, was won using unsupervised layer-wise pre-training (Bengio, 2011; Mesnil et al., 2011). A second Transfer Learning Challenge was held the same year and won by Goodfellow et al. (2011). Results were presented at NIPS 2011's Challenges in Learning Hierarchical Models Workshop. In the related domain adaptation setup, the target remains the same but the input distribution changes (Glorot et al., 2011b; Chen et al., 2012). In the multi-task learning setup, representation learning has also been found advantageous (Krizhevsky et al., 2012; Collobert et al., 2011), because of shared factors across tasks.

3 WHAT MAKES A REPRESENTATION GOOD?

3.1 Priors for Representation Learning in AI

In Bengio and LeCun (2007), one of us introduced the notion of AI-tasks, which are challenging for current machine learning algorithms, and involve complex but highly structured dependencies. One reason why explicitly dealing with representations is interesting is that they can conveniently express many general priors about the world around us, i.e., priors that are not task-specific but would be likely to be useful for a learning machine to solve AI-tasks. Examples of such general-purpose priors are the following:

• Smoothness: assumes the function to be learned f is such that x ≈ y generally implies f(x) ≈ f(y). This most basic prior is present in most machine learning, but is insufficient to get around the curse of dimensionality, see Section 3.2.

• Multiple explanatory factors: the data generating distribution is generated by different underlying factors, and for the most part what one learns about one factor generalizes in many configurations of the other factors. The objective to recover or at least disentangle these underlying factors of variation is discussed in Section 3.5. This assumption is behind the idea of distributed representations, discussed in Section 3.3 below.

• A hierarchical organization of explanatory factors: the concepts that are useful for describing the world around us can be defined in terms of other concepts, in a hierarchy, with more abstract concepts higher in the hierarchy, defined in terms of less abstract ones. This assumption is exploited with deep representations, elaborated in Section 3.4 below.

• Semi-supervised learning: with inputs X and target Y to predict, a subset of the factors explaining X's distribution explains much of Y, given X. Hence representations that are useful for P(X) tend to be useful when learning P(Y|X), allowing sharing of statistical strength between the unsupervised and supervised learning tasks, see Section 4.

• Shared factors across tasks: with many Y's of interest or many learning tasks in general, tasks (e.g., the corresponding P(Y|X, task)) are explained by factors that are shared with other tasks, allowing sharing of statistical strengths across tasks, as discussed in the previous section (Multi-Task and Transfer Learning, Domain Adaptation).

• Manifolds: probability mass concentrates near regions that have a much smaller dimensionality than the original space where the data lives. This is explicitly exploited in some of the auto-encoder algorithms and other manifold-inspired algorithms described respectively in Sections 7.2 and 8.

• Natural clustering: different values of categorical variables such as object classes are associated with separate manifolds. More precisely, the local variations on the manifold tend to preserve the value of a category, and a linear interpolation between examples of different classes in general involves going through a low density region, i.e., P(X|Y = i) for different i tend to be well separated and not overlap much. For example, this is exploited in the Manifold Tangent Classifier discussed in Section 8.3. This hypothesis is consistent with the idea that humans have named categories and classes because of such statistical structure (discovered by their brain and propagated by their culture), and machine learning tasks often involve predicting such categorical variables.

• Temporal and spatial coherence: consecutive (from a sequence) or spatially nearby observations tend to be associated with the same value of relevant categorical concepts, or result in a small move on the surface of the high-density manifold. More generally, different factors change at different temporal and spatial scales, and many categorical concepts of interest change slowly. When attempting to capture such categorical variables, this prior can be enforced by making the associated representations slowly changing, i.e., penalizing changes in values over time or space. This prior was introduced in Becker and Hinton (1992) and is discussed in Section 11.3.

• Sparsity: for any given observation x, only a small fraction of the possible factors are relevant. In terms of representation, this could be represented by features that are often zero (as initially proposed by Olshausen and Field (1996)), or by the fact that most of the extracted features are insensitive to small variations of x. This can be achieved with certain forms of priors on latent variables (peaked at 0), or by using a non-linearity whose value is often flat at 0 (i.e., 0 and with a 0 derivative), or simply by penalizing the magnitude of the Jacobian matrix (of derivatives) of the function mapping input to representation. This is discussed in Sections 6.1.1 and 7.2.

• Simplicity of Factor Dependencies: in good high-level representations, the factors are related to each other through simple, typically linear dependencies. This can be seen in many laws of physics, and is assumed when plugging a linear predictor on top of a learned representation.

We can view many of the above priors as ways to help the learner discover and disentangle some of the underlying (and a priori unknown) factors of variation that the data may reveal. This idea is pursued further in Sections 3.5 and 11.4.
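Two of the priors listed above, temporal coherence and sparsity, are often turned into concrete penalty terms. This is a minimal sketch of one common choice for each, not the paper's prescription. Slowness is expressed as the summed squared change between consecutive codes. Sparsity is expressed as an L1 penalty, which corresponds to a Laplace prior peaked at 0, together with a rectifier that is exactly 0, with 0 derivative, for negative inputs. All function names are ours.

```python
from typing import Sequence


def relu(z: float) -> float:
    """Non-linearity that is exactly 0 (with 0 derivative) for z <= 0."""
    return z if z > 0 else 0.0


def slowness_penalty(codes: Sequence[Sequence[float]]) -> float:
    """Temporal coherence: sum over t of ||h_t - h_{t-1}||^2."""
    return sum(
        sum((a - b) ** 2 for a, b in zip(h_t, h_prev))
        for h_prev, h_t in zip(codes, codes[1:])
    )


def l1_sparsity_penalty(code: Sequence[float]) -> float:
    """Sparsity: L1 norm of the code (negative log of a Laplace prior, up to constants)."""
    return sum(abs(v) for v in code)


def fraction_zero(code: Sequence[float]) -> float:
    return sum(1 for v in code if v == 0.0) / len(code)


pre_activations = [-1.0, 0.5, -3.0, 2.0]
h = [relu(z) for z in pre_activations]                         # [0.0, 0.5, 0.0, 2.0]
print(fraction_zero(h), l1_sparsity_penalty(h))                # 0.5 2.5
print(slowness_penalty([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]))  # 2.0
```

In a learning objective, either penalty would be added, with some weight, to a reconstruction or likelihood term.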

3.2 Smoothness and the Curse of Dimensionality

For AI-tasks, such as vision and NLP, it seems hopeless to rely only on simple parametric models (such as linear models) because they cannot capture enough of the complexity of interest unless provided with the appropriate feature space. Conversely, machine learning researchers have sought flexibility in local⁶ non-parametric learners such as kernel machines with a fixed generic local-response kernel (such as the Gaussian kernel). Unfortunately, as argued at length by Bengio and Monperrus (2005); Bengio et al. (2006a); Bengio and LeCun (2007); Bengio (2009); Bengio et al. (2010), most of these algorithms only exploit the principle of local generalization, i.e., the assumption that the target function (to be learned) is smooth enough, so they rely on examples to explicitly map out the wrinkles of the target function. Generalization is mostly achieved by a form of local interpolation between neighboring training examples. Although smoothness can be a useful assumption, it is insufficient to deal with the curse of dimensionality, because the number of such wrinkles (ups and downs of the target function) may grow exponentially with the number of relevant interacting factors, when the data are represented in raw input space. We advocate learning algorithms that are flexible and non-parametric⁷ but do not rely exclusively on the smoothness assumption. Instead, we propose to incorporate generic priors such as those enumerated above into representation-learning algorithms. Smoothness-based learners (such as kernel machines) and linear models can still be useful on top of such learned representations. In fact, the combination of learning a representation and kernel machine is equivalent to learning the kernel, i.e., the feature space. Kernel machines are useful, but they depend on a prior definition of a suitable similarity metric, or a feature space in which naive similarity metrics suffice. We would like to use the data, along with very generic priors, to discover those features, or equivalently, a similarity function.

6. Local in the sense that the value of the learned function at x depends mostly on training examples $x^{(t)}$'s close to x.
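To make "local generalization" concrete, here is a minimal sketch of a Nadaraya-Watson kernel regressor with a fixed Gaussian kernel. This estimator is our choice of a simple local learner; the paper does not single it out. Its prediction at x is a kernel-weighted average of training targets. Because the weights vanish for examples far from x, it can only reproduce wrinkles for which it has seen nearby examples. `bumps_to_cover` is a hypothetical helper illustrating the counting argument under a simplifying assumption: if the target has r ups and downs along each of d interacting factors, a purely local learner needs examples in roughly r**d regions.

```python
import math
from typing import Sequence, Tuple

Example = Tuple[Sequence[float], float]


def gaussian_kernel(x: Sequence[float], y: Sequence[float], sigma: float) -> float:
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def kernel_regression(x: Sequence[float], train: Sequence[Example], sigma: float) -> float:
    """Nadaraya-Watson estimate: kernel-weighted average of training targets."""
    weights = [gaussian_kernel(x, xi, sigma) for xi, _ in train]
    total = sum(weights)
    if total == 0.0:
        # Every weight underflowed: x is far from all examples, so a purely
        # local learner has nothing to interpolate from.
        raise ValueError("no training example is close enough to x")
    return sum(w * yi for w, (_, yi) in zip(weights, train)) / total


def bumps_to_cover(bumps_per_factor: int, n_factors: int) -> int:
    """Regions needing examples if each interacting factor adds that many ups and downs."""
    return bumps_per_factor ** n_factors


train = [((0.0,), 0.0), ((1.0,), 1.0)]
print(kernel_regression((0.5,), train, sigma=0.1))    # 0.5, halfway between neighbors
print([bumps_to_cover(10, d) for d in (1, 2, 3)])     # [10, 100, 1000]
```

Querying at `(10.0,)`, far from both examples, raises the error: the estimator has no non-local way to extrapolate.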

3.3 Distributed representations

Good representations are expressive, meaning that a reasonably-sized learned representation can capture a huge number of possible input configurations. A simple counting argument helps us to assess the expressiveness of a model producing a representation: how many parameters does it require compared to the number of input regions (or configurations) it can distinguish? Learners of one-hot representations, such as traditional clustering algorithms, Gaussian mixtures, nearest-neighbor algorithms, decision trees, or Gaussian SVMs all require O(N) parameters (and/or O(N) examples) to distinguish O(N) input regions. One could naively believe that one cannot do better. However, RBMs, sparse coding, auto-encoders or multi-layer neural networks can all represent up to $O(2^k)$ input regions using only O(N) parameters (with k the number of non-zero elements in a sparse representation, and k = N in non-sparse RBMs and other dense representations). These are all distributed⁸ or sparse⁹ representations. The generalization of clustering to distributed representations is multi-clustering, where either several clusterings take place in parallel or the same clustering is applied on different parts of the input, such as in the very popular hierarchical feature extraction for object recognition based on a histogram of cluster categories detected in different patches of an image (Lazebnik et al., 2006; Coates and Ng, 2011a). The exponential gain from distributed or sparse representations is discussed further in section 3.2 (and Figure 3.2) of Bengio (2009). It comes about because each parameter (e.g. the parameters of one of the units in a sparse code, or one of the units in a Restricted Boltzmann Machine) can be re-used in many examples that are not simply near neighbors of each other, whereas with local generalization, different regions in input space are basically associated with their own private set of parameters, e.g., as in decision trees, nearest-neighbors, Gaussian SVMs, etc. In a distributed representation, an exponentially large number of possible subsets of features or hidden units can be activated in response to a given input. In a single-layer model, each feature is typically associated with a preferred input direction, corresponding to a hyperplane in input space, and the code or representation associated with that input is precisely the pattern of activation (which features respond to the input, and how much). This is in contrast with a non-distributed representation such as the one learned by most clustering algorithms, e.g., k-means, in which the representation of a given input vector is a one-hot code identifying which one of a small number of cluster centroids best represents the input¹⁰.

7. We understand non-parametric as including all learning algorithms whose capacity can be increased appropriately as the amount of data and its complexity demands it, e.g. including mixture models and neural networks where the number of parameters is a data-selected hyper-parameter.

8. Distributed representations: where k out of N representation elements or feature values can be independently varied, i.e., they are not mutually exclusive. Each concept is represented by having k features being turned on or active, while each feature is involved in representing many concepts.

9. Sparse representations: distributed representations where only a few of the elements can be varied at a time, i.e., k < N.
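The counting argument can be checked on a small case. The sketch below relies on a classical result that is not stated in the paper: N hyperplanes in general position in d-dimensional input space cut it into sum over i = 0..d of C(N, i) regions. That count equals 2^N once d ≥ N, whereas a one-hot learner with N centroids distinguishes only N regions. The sampler counts distinct on/off patterns of N linear threshold units with hypothetical random weights and can never exceed that bound.

```python
import random
from math import comb


def regions_general_position(n_units: int, dim: int) -> int:
    """Maximum number of regions carved by n_units hyperplanes in dim dimensions."""
    return sum(comb(n_units, i) for i in range(dim + 1))


def sampled_patterns(n_units: int, dim: int, n_samples: int = 20000, seed: int = 0) -> int:
    """Count distinct binary codes (which threshold units are 'on') over random inputs."""
    rng = random.Random(seed)
    units = [([rng.gauss(0, 1) for _ in range(dim)], rng.gauss(0, 1))
             for _ in range(n_units)]
    codes = set()
    for _ in range(n_samples):
        x = [rng.uniform(-5, 5) for _ in range(dim)]
        code = tuple(int(sum(w * xi for w, xi in zip(ws, x)) + b > 0) for ws, b in units)
        codes.add(code)
    return len(codes)


n = 6
print("one-hot (k-means) regions:", n)
print("distributed, d=2 bound:", regions_general_position(n, 2))    # 22
print("distributed, d=2 sampled:", sampled_patterns(n, 2))          # at most 22
print("distributed, d=n bound:", regions_general_position(n, n))   # 64 == 2**6
```

With the same six units, the distributed code already separates up to 22 regions in the plane, and up to 2^6 = 64 when the input has at least six dimensions.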

3.4 Depth and abstraction

Depth is a key aspect to representation learning strategies we consider in this paper. As we will discuss, deep architectures are often challenging to train effectively and this has been the subject of much recent research and progress. However, despite these challenges, they carry two significant advantages that motivate our long-term interest in discovering successful training strategies for deep architectures. These advantages are: (1) deep architectures promote the re-use of features, and (2) deep architectures can potentially lead to progressively more abstract features at higher layers of representations (more removed from the data).

Feature re-use. The notion of re-use, which explains the power of distributed representations, is also at the heart of the theoretical advantages behind deep learning, i.e., constructing multiple levels of representation or learning a hierarchy of features. The depth of a circuit is the length of the longest path from an input node of the circuit to an output node of the circuit. The crucial property of a deep circuit is that its number of paths, i.e., ways to re-use different parts, can grow exponentially with its depth. Formally, one can change the depth of a given circuit by changing the definition of what each node can compute, but only by a constant factor. The typical computations we allow in each node include: weighted sum, product, artificial neuron model (such as a monotone non-linearity on top of an affine transformation), computation of a kernel, or logic gates. Theoretical results clearly show families of functions where a deep representation can be exponentially more efficient than one that is insufficiently deep (Håstad, 1986; Håstad and Goldmann, 1991; Bengio et al., 2006a; Bengio and LeCun, 2007; Bengio and Delalleau, 2011). If the same family of functions can be represented with fewer parameters (or more precisely with a smaller VC-dimension), learning theory would suggest that it can be learned with fewer examples, yielding improvements in both computational efficiency (fewer nodes to visit) and statistical efficiency (fewer parameters to learn, and re-use of these parameters over many different kinds of inputs).

10. As discussed in (Bengio, 2009), things are only slightly better when allowing continuous-valued membership values, e.g., in ordinary mixture models (with separate parameters for each mixture component), but the difference in representational power is still exponential (Montufar and Morton, 2012). The situation may also seem better with a decision tree, where each given input is associated with a one-hot code over the tree leaves, which deterministically selects associated ancestors (the path from root to node). Unfortunately, the number of different regions represented (equal to the number of leaves of the tree) still only grows linearly with the number of parameters used to specify it (Bengio and Delalleau, 2011).
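Parity is the classic example behind depth results such as Håstad (1986), cited above. A balanced tree of 2-input XOR gates computes n-bit parity with n − 1 gates and about log2(n) levels, because each intermediate XOR is re-used by the level above it. A depth-2 OR-of-ANDs circuit, by contrast, needs one AND term per odd-parity input, 2^(n−1) in total. Any term that leaves a variable free would also cover an input with the opposite parity. A minimal Python sketch that counts both (the function names are ours):

```python
from itertools import product
from typing import Sequence, Tuple


def parity_deep(bits: Sequence[int]) -> Tuple[int, int, int]:
    """Parity via a balanced tree of 2-input XOR gates.

    Returns (value, number_of_gates, depth); uses n - 1 gates and
    ceil(log2 n) levels.
    """
    layer = list(bits)
    gates = depth = 0
    while len(layer) > 1:
        nxt = [layer[i] ^ layer[i + 1] for i in range(0, len(layer) - 1, 2)]
        gates += len(nxt)
        if len(layer) % 2:  # an unpaired element passes through to the next level
            nxt.append(layer[-1])
        layer = nxt
        depth += 1
    return layer[0], gates, depth


def parity_shallow_terms(n: int) -> int:
    """AND terms needed by a depth-2 OR-of-ANDs circuit for n-bit parity:
    one per odd-parity input, since no term can cover two of them."""
    return sum(1 for bits in product((0, 1), repeat=n) if sum(bits) % 2 == 1)


for n in (4, 8, 12):
    _, gates, depth = parity_deep([0] * n)
    print(f"n={n}: deep circuit {gates} gates, depth {depth}; "
          f"shallow DNF {parity_shallow_terms(n)} terms")
```

The deep circuit grows linearly in n, while the shallow one doubles with every extra input bit.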

Abstraction and invariance. Deep architectures can lead to abstract representations because more abstract concepts can often be constructed in terms of less abstract ones. In some cases, such as in the convolutional neural network (LeCun et al., 1998b), we build this abstraction in explicitly via a pooling mechanism (see section 11.2). More abstract concepts are generally invariant to most local changes of the input. That makes the representations that capture these concepts generally highly non-linear functions of the raw input. This is obviously true of categorical concepts, where more abstract representations detect categories that cover more varied phenomena (e.g. larger manifolds with more wrinkles) and thus they potentially have greater predictive power. Abstraction can also appear in high-level continuous-valued attributes that are only sensitive to some very specific types of changes in the input. Learning these sorts of invariant features has been a long-standing goal in pattern recognition.
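Pooling is the mechanism mentioned above for building invariance in explicitly. Below is a minimal 1-D sketch: a single template detector is applied at every position, producing a feature map, and the map is then max-pooled. The template and signals are hypothetical. Convolutional networks pool over local neighborhoods (Section 11.2). We pool over the whole map to keep the example short, which makes the output invariant to any shift that keeps the pattern inside the signal, even though the feature map itself moves.

```python
from typing import List, Sequence


def correlate_valid(signal: Sequence[float], template: Sequence[float]) -> List[float]:
    """Feature map: template response at every position where it fits entirely."""
    k = len(template)
    return [float(sum(signal[i + j] * template[j] for j in range(k)))
            for i in range(len(signal) - k + 1)]


def global_max_pool(feature_map: Sequence[float]) -> float:
    """Keep only the strongest response, discarding where it occurred."""
    return max(feature_map)


template = [1.0, 2.0, 1.0]
x = [0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0]
x_shifted = [0.0, 0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0]  # same pattern, one step right

fx = correlate_valid(x, template)
fs = correlate_valid(x_shifted, template)
print(fx)  # [1.0, 4.0, 6.0, 4.0, 1.0, 0.0]
print(fs)  # [0.0, 1.0, 4.0, 6.0, 4.0, 1.0]
print(global_max_pool(fx), global_max_pool(fs))  # 6.0 6.0
```

The detector output is sensitive to position, while the pooled output is not. The pooled feature is therefore a simple non-linear, more invariant function of the raw input.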

3.5 Disentangling Factors of Variation

Beyond being distributed and invariant, we would like our representations to disentangle the factors of variation. Different explanatory factors of the data tend to change independently of each other in the input distribution, and only a few at a time tend to change when one considers a sequence of consecutive real-world inputs.

Complex data arise from the rich interaction of many sources. These factors interact in a complex web that can complicate AI-related tasks such as object classification. For example, an image is composed of the interaction between one or more light sources, the object shapes and the material properties of the various surfaces present in the image. Shadows from objects in the scene can fall on each other in complex patterns, creating the illusion of object boundaries where there are none and dramatically affecting the perceived object shape. How can we cope with these complex interactions? How can we disentangle the objects and their shadows? Ultimately, we believe the approach we adopt for overcoming these challenges must leverage the data itself, using vast quantities of unlabeled examples, to learn representations that separate the various explanatory sources. Doing so should give rise to a representation significantly more robust to the complex and richly structured variations extant in natural data sources for AI-related tasks.

It is important to distinguish between the related but distinct goals of learning invariant features and learning to disentangle explanatory factors. The central difference is the preservation of information. Invariant features, by definition, have reduced sensitivity in the direction of invariance. This is the goal of building features that are insensitive to variations in the data that are uninformative to the task at hand. Unfortunately, it is often difficult to determine a priori which set of features and variations will ultimately be relevant to the task at hand. Further, as is often the case in the context of deep learning methods, the feature set being trained may be destined to be used in multiple tasks that may have distinct subsets of relevant features. Considerations such as these lead us to the conclusion that the most robust approach to feature learning is to disentangle as many factors as possible, discarding as little information about the data as is practical. If some form of dimensionality reduction is desirable, then we hypothesize that the local directions of variation least represented in the training data should be first to be pruned out (as in PCA, for example, which does it globally instead of around each example).
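The paper's hypothesis concerns local directions of variation around each example, while PCA prunes globally. Below is a minimal 2-D sketch of the global version. It computes the eigenvectors of the 2×2 sample covariance in closed form, keeps the coordinate along the direction of largest variance, and drops the least-represented one. The function names and the toy data are ours.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def pca_2d(points: Sequence[Point]):
    """Closed-form eigen-decomposition of the 2x2 sample covariance.

    Returns ((var_kept, var_pruned), top_direction, mean).
    """
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    a = sum((p[0] - mx) ** 2 for p in points) / n            # var(x)
    c = sum((p[1] - my) ** 2 for p in points) / n            # var(y)
    b = sum((p[0] - mx) * (p[1] - my) for p in points) / n   # cov(x, y)
    half_trace = (a + c) / 2
    radius = math.sqrt(((a - c) / 2) ** 2 + b * b)
    lam_top, lam_low = half_trace + radius, half_trace - radius
    if b != 0.0:
        v = (b, lam_top - a)  # solves (Cov - lam_top * I) v = 0
    else:
        v = (1.0, 0.0) if a >= c else (0.0, 1.0)
    norm = math.hypot(*v)
    return (lam_top, lam_low), (v[0] / norm, v[1] / norm), (mx, my)


def reduce_to_1d(points: Sequence[Point]) -> List[float]:
    """Keep the coordinate along the top direction; prune the least-varying one."""
    _, (vx, vy), (mx, my) = pca_2d(points)
    return [(x - mx) * vx + (y - my) * vy for x, y in points]


data = [(-2.0, 0.1), (-1.0, -0.1), (0.0, 0.1), (1.0, -0.1), (2.0, 0.0)]
(var_kept, var_pruned), direction, _ = pca_2d(data)
print(var_kept, var_pruned, direction)
print(reduce_to_1d(data))
```

The data vary mostly along x, so the pruned direction carries only a tiny fraction of the variance. The paper's point is that, when in doubt, it is this kind of least-represented direction that should go first.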

3.6 Good criteria for learning representations?

One of the challenges of representation learning that distinguishes it from other machine learning tasks such as classification is the difficulty in establishing a clear objective, or target for training. In the case of classification, the objective is (at least conceptually) obvious: we want to minimize the number of misclassifications on the training dataset. In the case of representation learning, our objective is far-removed from the ultimate objective, which is typically learning a classifier or some other predictor. Our problem is reminiscent of the credit assignment problem encountered in reinforcement learning. We have proposed that a good representation is one that disentangles the underlying factors of variation, but how do we translate that into appropriate training criteria? Is it even necessary to do anything but maximize likelihood under a good model or can we introduce priors such as those enumerated above (possibly data-dependent ones) that help the representation better do this disentangling? This question remains clearly open but is discussed in more detail in Sections 3.5 and 11.4.

4 BUILDING DEEP REPRESENTATIONS

In 2006, a breakthrough in feature learning and deep learning was initiated by Geoff Hinton and quickly followed up in the same year (Hinton et al., 2006; Bengio et al., 2007; Ranzato et al., 2007), and soon after by Lee et al. (2008) and many more later. It has been extensively reviewed and discussed in Bengio (2009). A central idea, referred to as greedy layerwise unsupervised pre-training, was to learn a hierarchy of features one level at a time, using unsupervised feature learning to learn a new transformation at each level to be composed with the previously learned transformations; essentially, each iteration of unsupervised feature learning adds one layer of weights to a deep neural network. Finally, the set of layers could be combined to initialize a deep supervised predictor, such as a neural network classifier, or a deep generative model, such as a Deep Boltzmann Machine (Salakhutdinov and Hinton, 2009).
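The control flow of greedy layerwise unsupervised pre-training can be sketched independently of the per-level learner. In this minimal sketch, level l is fit only on the outputs of levels below it, using no labels, and the learned levels are then composed. `train_standardize_tanh` is a hypothetical stand-in for one level of unsupervised feature learning, where the paper would use an RBM or an auto-encoder. It only fits per-feature means and standard deviations, so it shows the stacking structure, not a useful feature learner.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Encoder = Callable[[Vector], Vector]


def train_standardize_tanh(data: Sequence[Vector]) -> Encoder:
    """Hypothetical stand-in for one level of unsupervised feature learning:
    fits per-feature mean and std on unlabeled data, returns x -> tanh((x - mean) / std)."""
    n, d = len(data), len(data[0])
    means = [sum(x[j] for x in data) / n for j in range(d)]
    stds = [math.sqrt(sum((x[j] - means[j]) ** 2 for x in data) / n) or 1.0
            for j in range(d)]  # "or 1.0" guards against constant features

    def encode(x: Vector) -> Vector:
        return [math.tanh((x[j] - means[j]) / stds[j]) for j in range(d)]

    return encode


def greedy_layerwise_pretrain(data: Sequence[Vector], n_layers: int,
                              train_layer: Callable[[Sequence[Vector]], Encoder]) -> List[Encoder]:
    """Learn one level at a time; each level is trained on the previous level's outputs."""
    encoders: List[Encoder] = []
    reps = [list(x) for x in data]
    for _ in range(n_layers):
        enc = train_layer(reps)        # unsupervised: only unlabeled inputs are used
        encoders.append(enc)
        reps = [enc(x) for x in reps]  # feed the new representation upward
    return encoders


def stack(encoders: Sequence[Encoder]) -> Encoder:
    """Compose the learned levels into one deep feature extractor."""
    def deep(x: Vector) -> Vector:
        for enc in encoders:
            x = enc(x)
        return x
    return deep


unlabeled = [[0.0, 1.0], [1.0, 3.0], [2.0, 5.0]]
layers = greedy_layerwise_pretrain(unlabeled, n_layers=3, train_layer=train_standardize_tanh)
features = stack(layers)
print(features([2.0, 5.0]))
```

Swapping in a real per-level learner only changes `train_layer`. The stacked extractor would then initialize a supervised network for fine-tuning, a step not shown here.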

This paper is mostly about feature learning algorithms that can be used to form deep architectures. In particular, it was empirically observed that layerwise stacking of feature extraction often yielded better representations, e.g., in terms of classification error (Larochelle et al., 2009; Erhan et al., 2010b), quality of the samples generated by a probabilistic model (Salakhutdinov and Hinton, 2009) or in terms of the invariance properties of the learned features (Goodfellow
