没有合适的资源?快使用搜索试试~ 我知道了~

raft算法,原版论文,英文版 摘要 Raft 是一种用来管理日志复制的一致性算法。它和 Paxos 的性能和功能是一样的,但是它和 Paxos 的结构不一样;这使得 Raft 更容易理解并且更易于建立实际的系统。为了提高理解性,Raft 将一致性算法分为了几个部分,例如领导选取(leader selection),日志复制(log replication)和安全性(safety),同时它使用了更强的一致性来减少了必须需要考虑的状态。从用户学习的结果来看,Raft 比 Paxos 更容易学会。Raft还包括了一种新的机制来使得动态改变集群成员,它使用重叠大多数(overlapping majorities)来保证安全。

资源推荐

资源详情

资源评论

In Search of an Understandable Consensus Algorithm

(Extended Version)

Diego Ongaro and John Oust erhou t

Stanford University

Abstract

Raft is a consen sus algorithm for managing a replicated

log. It produces a result equivalent to (multi-)Paxos, and

it is as efficient as Paxos, but its structur e is different

from Paxos; this makes Raft more understandab le than

Paxos and also provides a better foundation for build-

ing practical system s. In order to enhance understandabil-

ity, Raft separates the key elements of consensus, such as

leader election, log replication, and safety, and it enforce s

a stronger degree of coherency to reduce the number of

states that must be considered. Results from a user study

demonstra te that Raft is easier for students to learn than

Paxos. Raft also includes a new m e chanism for changing

the cluster membership, which uses overlapping majori-

ties to gua rantee safety.

1 Introduction

Consensus algorithm s allow a collection of machin es

to work as a coheren t group that ca n survive the fail-

ures o f some of its members. Because of this, they play a

key role in building reliable large-scale software systems.

Paxos [15, 16] has dominated the discussion of consen-

sus algorithms over the last decade: most implementations

of consensus are based on Paxos or influenced by it, and

Paxos has become the primary vehicle used to teach stu-

dents about c onsensus.

Unfortu nately, Paxos is quite difficult to understand, in

spite of numerous attempts to make it more approachable.

Furthermore, its architecture requires complex chan ges

to support practical systems. As a result, both system

builders and students struggle with Paxos.

After struggling with Paxos ourselves, we set out to

find a new consensus algorithm that could provide a bet-

ter foundatio n for system building and education. Our ap-

proach was unusua l in that our primary goal was under-

standability: could we define a consensus algorithm for

practical systems and describe it in a way that is signifi-

cantly easier to learn than Paxos? Furtherm ore, we wanted

the algorithm to facilitate the development of intuitions

that are essential for system builders. It was important not

just for the algorithm to work, but for it to be obvious why

it works.

The result of this work is a consensus algorithm called

Raft. In designing Raft we applied specific techniques to

improve understand ability, including de composition (Raft

separates leader election, log rep lica tion, and safety) and

This tech report is an extended version of [32]; additional material is

noted with a gray bar in the margin. Published May 20, 2014.

state space reduction (relative to Paxos, Raft reduces the

degree of nondeterminism and th e ways servers can be in-

consistent with each othe r). A user study with 43 students

at two universities shows that Raft is significan tly easier

to understan d than Paxos: after learning both algorithms,

33 of these students were able to answer questions about

Raft better than questions about Paxos.

Raft is similar in m any ways to existing consensus al-

gorithms (most notably, Oki and Liskov’s Viewstamped

Replication [29, 22]), but it has several novel fea tures:

• Strong leader: Raft u ses a stronger form of leader-

ship than other consensus algorithms. For example,

log entries only flow fr om the leader to other servers.

This simplifies the management of the replicated log

and makes Raft easier to understand.

• Leader election: Raft uses randomized timers to

elect leaders. This adds only a small amount of

mechanism to the heartbeats already required for any

consensus algorithm, while resolving conflicts sim-

ply and rapidly.

• Membership changes: Raft’s mechanism for

changin g the set of servers in the cluster uses a new

joint consensus approach where the majorities of

two different configurations overlap during transi-

tions. This allows the cluster to continue opera ting

normally du ring configuration changes.

We believe that Raft is superior to Paxos and othe r con-

sensus algorithms, both for educatio nal purposes and as a

foundation for implementation. It is simpler and more un-

derstandable than other algorithms; it is described com-

pletely enough to m eet the needs of a practical system;

it has several open-source implementations and is used

by several companies; its saf ety properties have bee n for-

mally specified and proven; and its efficiency is compara-

ble to other algorithms.

The remainder of the paper intr oduces the replicated

state machine p roblem (Section 2), discusses the strength s

and weaknesses of Paxos (Section 3), describes our gen-

eral approach to u nderstandability (Section 4), presents

the Raft co nsensus algorithm (Sections 5–8), evaluates

Raft (Section 9), and discusses related work (Section 10).

2 Replicated state machines

Consensus algorithms typically arise in the context of

replicated state machines [ 37]. In this approach, state m a-

chines on a collection of servers compute identical copies

of the same state an d can continue operating even if some

of the servers are down. Replicated state machines are

1

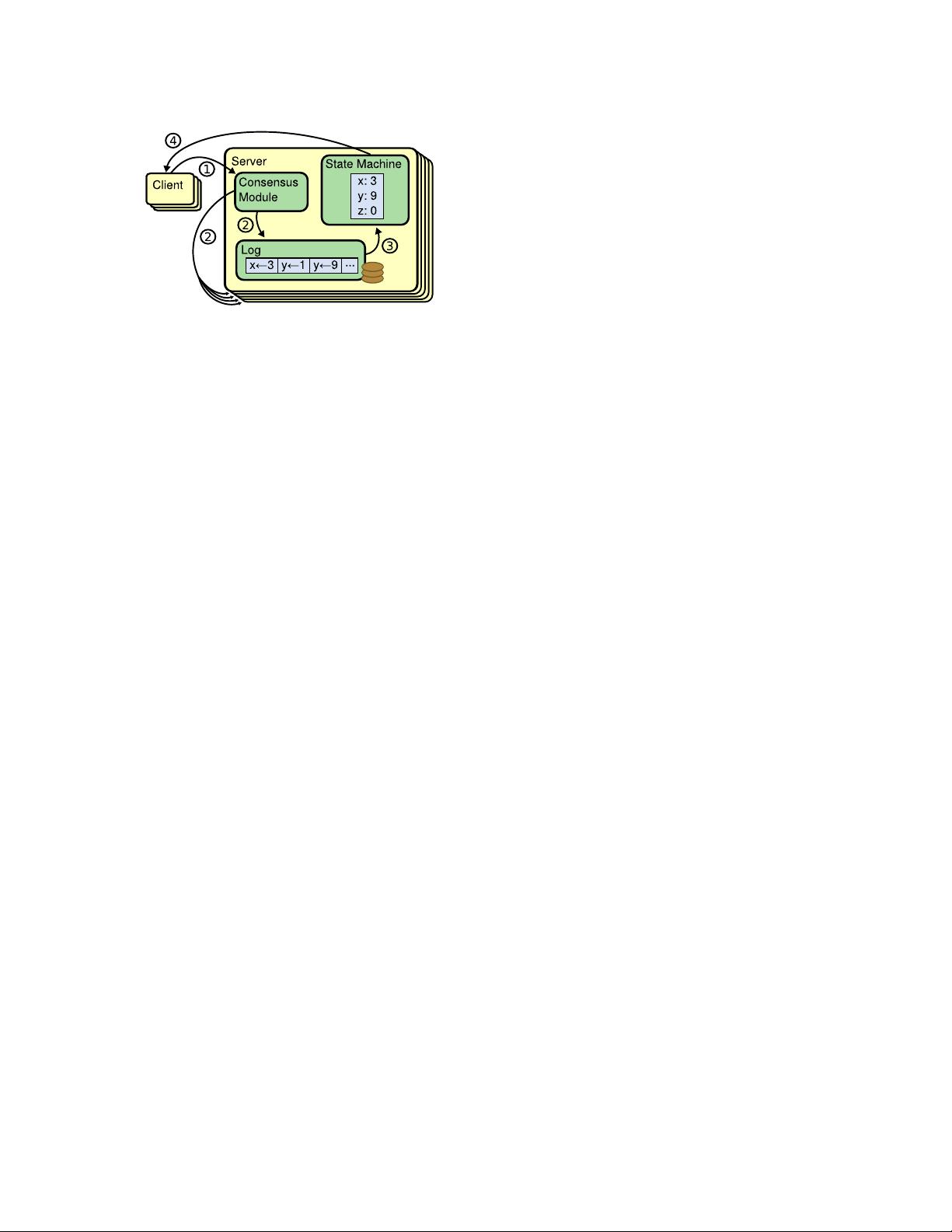

Figure 1: Replicated state machine architecture. The con-

sensus al gorithm manages a replicated log containing state

machine commands f rom clients. The state machines process

identical sequences of commands from the logs, so they pro-

duce the same outputs.

used to solve a variety of fault tolerance problems in dis-

tributed systems. For example, large-scale systems that

have a single cluster leader, such as GFS [8], HDFS [38],

and RAMCloud [33], typically use a separate replicated

state machine to manage leader election and stor e config-

uration information that must survive leader crashes. Ex-

amples of replicated state machines include Chubby [2]

and ZooKeeper [11].

Replicated state machines are typically implemented

using a replicated log, as shown in Figure 1. Each server

stores a log containing a series of comma nds, which its

state ma chine executes in order. Each log contains the

same comma nds in the same order, so each state ma-

chine processes the same seq uence of commands. Since

the state machines are determin istic, each computes the

same state and the same sequen c e of outputs.

Keeping the replicated log consistent is the job of the

consensus algorithm. The consensus modu le on a server

receives commands from clients and adds them to its log.

It communicate s with the consensus m odules on other

servers to ensure that every log eventually contains the

same requests in the same order, even if some servers fail.

Once c ommands are properly re plicated, each server’s

state m a chine processes them in log order, and the out-

puts are returned to clients. As a result, the servers appear

to form a single, high ly reliable state machine.

Consensus algorithms for practica l systems typically

have the f ollowing properties:

• They ensure safety (never returning an incorrect re-

sult) under all non-Byzantine conditions, including

network delays, partitions, and packet loss, duplica-

tion, and reo rdering.

• They are fully functional (available) as long as any

majority o f the servers are operational and can com-

municate with each other and with clients. Thus, a

typical cluster of five servers can tolerate the failure

of any two servers. Servers are assumed to fail by

stopping; they may later recover from state on stable

storage and rejoin the cluster.

• They do not depend on timing to ensure the consis-

tency of the logs: faulty clocks and extreme message

delays can, at worst, cause availability problems.

• In the co mmon case, a command can complete as

soon as a majority of the cluster has r e sponded to a

single round of remote procedure c alls; a minority of

slow servers need not impact overall system perfor-

mance.

3 What’s wrong with Paxos?

Over the last ten years, Leslie Lamport’s Paxos proto-

col [15] has become almost synonymous with consensus:

it is the protocol most commonly taught in courses, and

most imp le mentations o f consensus use it as a starting

point. Paxos first defines a protocol c apable of reaching

agreement on a single decision, such as a single replicated

log entry. We refer to this subset as single-decree Paxos.

Paxos then combines multiple instances of this protocol to

facilitate a series of decisions such as a log (m ulti-Paxos).

Paxos ensure s both safety and liveness, and it supports

changes in c luster membership. Its correctness has been

proven, and it is efficient in the normal case.

Unfortu nately, Paxos has two significant drawbacks.

The first drawback is that Paxos is exceptionally diffi-

cult to understand. The full explanation [15] is noto ri-

ously opaqu e; few pe ople succeed in und e rstanding it, and

only with great effort. As a result, there have been several

attempts to explain Paxos in simpler terms [16, 20, 21].

These explanations focus on the single-decree subset, yet

they are still ch allenging. In an informal survey of atten-

dees at NSDI 2012, we found few people who were com-

fortable with Paxos, even amon g seasoned researchers.

We struggled with Paxos ourselves; we were not able to

understand the complete protocol until after reading sev-

eral simplified explanations and designing our own a lter-

native protocol, a process that took almost a year.

We hypothesize that Paxos’ opaqueness derives from

its choice of the single-decr ee subset as its foundation.

Single-decree Paxos is dense and subtle: it is divided into

two stages that do n ot have simple intuitive explanations

and cannot be understood independently. Because of this,

it is difficult to develop intuitions about why the single-

decree protocol works. The composition rules for multi-

Paxos add significant additional complexity and subtlety.

We believe that the overall problem of reaching consensus

on multip le decisions (i.e., a log in stead of a single entry)

can be decomposed in other ways that are more direct and

obvious.

The second problem with Paxos is that it does not pro-

vide a good foundation for building practical implemen-

tations. One re ason is that there is no widely agreed-

upon algorithm for multi-Paxos. Lamport’s descriptions

are mostly about single-decree Paxos; he sketched possi-

ble approaches to multi-Paxos, but many details are miss-

ing. There have been several attempts to flesh out and op-

timize Paxos, such as [26], [39], and [13], but these differ

2

from each other and from Lamport’s sketches. Systems

such as Chubby [4] have impleme nted Paxos-like algo-

rithms, but in most cases their deta ils have not been pub-

lished.

Furthermore, the Paxos architecture is a poor one for

building pra ctical systems; this is another consequence of

the single-decree decomposition. For example, there is lit-

tle benefit to choosing a collection of log entries indepe n-

dently and then melding them into a sequential log; this

just adds complexity. I t is simp le r and more efficient to

design a system around a log, where new entrie s are ap-

pended sequentially in a constrained order. Another pr ob-

lem is that Paxos uses a symmetric peer-to-peer approach

at its core (though it eventually suggests a w e ak form of

leadership as a performance optimization). This makes

sense in a simplified world where only one decision will

be made, but few practical systems use this approach. If a

series of decisions must be made, it is simpler and faster

to first elect a leader, then have the leader coordinate the

decisions.

As a result, practical systems bear little resemblance

to Paxos. Each implementation begins with Paxos, dis-

covers the difficulties in implementing it, and then de-

velops a significantly different architecture. This is time-

consumin g and error-prone, and the difficulties of under-

standing Paxos exacerbate the problem . Paxos’ formula-

tion may be a good one for proving theorems about its cor-

rectness, but real implementations are so different from

Paxos that the proof s have little value. The following com-

ment fro m the Chubby implem e nters is typical:

There are significant gaps between the description of

the Paxos algorithm and the needs of a real-world

system. . . . the final system will be based on an un-

proven protocol [4].

Because of these problems, we concluded that Paxos

does not provide a good foundation either for system

building or for education . Given the importance of co n-

sensus in large-scale software systems, we decided to see

if we could design an alternative consensus algorithm

with better properties than Paxos. Raft is the result of tha t

experiment.

4 Designing for understandability

We had several goals in designing Raft: it must provide

a complete and practical foundation for system building,

so that it significantly reduces the amo unt of design work

required of developers; it must b e safe under all conditions

and available under typical operating condition s; and it

must be efficient fo r common operations. But our most

important goal—and most difficult challenge—was un-

derstandability. It must be possible for a large aud ie nce to

understand the algorithm co mfortably. In addition, it must

be possible to develop intuitions about the algorithm, so

that system builders can make the extensions that are in-

evitable in real-world implementations.

There were numero us points in the design of Raft

where we had to choose amon g alternative approaches.

In these situations we evaluated the alternatives based on

understandability: how hard is it to explain each altern a -

tive (for example, how complex is its state space, and doe s

it have subtle implicatio ns?), and how easy will it be for a

reader to co mpletely understand the approach and its im-

plications?

We recogn ize that there is a high degree of subjectiv-

ity in such a nalysis; no netheless, we used two techniques

that are generally applicable. The first technique is the

well-known approach o f proble m decomposition: wher-

ever possible, we divided problems into separate pieces

that could be solved, exp lained, and understood relatively

indepen dently. For example, in Raft we separated leader

election, log replication, safety, and membership changes.

Our second appr oach was to simplify the state space

by reducing the number of states to consider, ma king the

system more coherent and elim inating nondeterminism

where possible. Specifically, logs are not allowed to have

holes, and Raft limits the ways in which logs can become

inconsistent with each othe r. Although in most cases we

tried to elimina te nondeterminism, there are some situ-

ations where nondeter minism actually improves under-

standability. In particular, randomized a pproaches intro-

duce nondeterm inism, but they tend to reduce the state

space by hand ling all possible choices in a similar fashion

(“choo se any; it doesn’t matter”). We used randomization

to simplify the Raft leader election algorithm.

5 The Raft consensus algorithm

Raft is an algorithm for managing a replicated log of

the form described in Section 2. Figure 2 summarizes the

algorithm in condensed form for reference, and Figure 3

lists key properties of the algo rithm; the elements of these

figures are discussed pie cewise over the rest of this sec-

tion.

Raft implements co nsensus by first electing a distin-

guished leader, then giving the leader complete responsi-

bility for ma naging the replicated log. The leader accepts

log entries from clients, replicates them on other servers,

and tells servers when it is safe to apply log entries to

their state machines. H aving a leader simplifies the man-

agement of the replicated log. For example, the leader can

decide where to place new entries in the log without con-

sulting other servers, and data flows in a simple fashion

from the leader to other servers. A leader can fail or be-

come disconnected from the other servers, in which case

a new leader is elected.

Given the leader approach, Raft decomposes the con-

sensus problem into thre e relatively independent subprob-

lems, which are discussed in the subsections that follow:

• Leader election: a new leader must be chosen when

an existing leader fails (Section 5.2).

• Log replication: the leader must accept log entries

3

Invoked by candidates to gather votes (§5.2).

Arguments:

term candidate’s term

candidateId candidate requesting vote

lastLogIndex index of candidate’s last log entry (§5.4)

lastLogTerm term of candidate’s last log entry (§5.4)

Results:

term currentTerm, for candidate to update itself

voteGranted true means candidate received vote

Receiver implementation:

1. Reply false if term < currentTerm (§5.1)

2. If votedFor is null or candidateId, and candidate’s log is at

least as up-to-date as receiver’s log, grant vote (§5.2, §5.4)

RequestVote RPC

Invoked by leader to replicate log entries (§5.3); also used as

heartbeat (§5.2).

Arguments:

term leader’s term

leaderId so follower can redirect clients

prevLogIndex index of log entry immediately preceding

new ones

prevLogTerm term of prevLogIndex entry

entries[] log entries to store (empty for heartbeat;

may send more than one for efficiency)

leaderCommit leader’s commitIndex

Results:

term currentTerm, for leader to update itself

success true if follower contained entry matching

prevLogIndex and prevLogTerm

Receiver implementation:

1. Reply false if term < currentTerm (§5.1)

2. Reply false if log doesn’t contain an entry at prevLogIndex

whose term matches prevLogTerm (§5.3)

3. If an existing entry conflicts with a new one (same index

but different terms), delete the existing entry and all that

follow it (§5.3)

4. Append any new entries not already in the log

5. If leaderCommit > commitIndex, set commitIndex =

min(leaderCommit, index of last new entry)

AppendEntries RPC

Persistent state on all servers:

(Updated on stable storage before responding to RPCs)

currentTerm latest term server has seen (initialized to 0

on first boot, increases monotonically)

votedFor candidateId that received vote in current

term (or null if none)

log[] log entries; each entry contains command

for state machine, and term when entry

was received by leader (first index is 1)

Volatile state on all servers:

commitIndex index of highest log entry known to be

committed (initialized to 0, increases

monotonically)

lastApplied index of highest log entry applied to state

machine (initialized to 0, increases

monotonically)

Volatile state on leaders:

(Reinitialized after election)

nextIndex[] for each server, index of the next log entry

to send to that server (initialized to leader

last log index + 1)

matchIndex[] for each server, index of highest log entry

known to be replicated on server

(initialized to 0, increases monotonically)

State

All Servers:

• If commitIndex > lastApplied: increment lastApplied, apply

log[lastApplied] to state machine (§5.3)

• If RPC request or response contains term T > currentTerm:

set currentTerm = T, convert to follower (§5.1)

Followers (§5.2):

• Respond to RPCs from candidates and leaders

• If election timeout elapses without receiving AppendEntries

RPC from current leader or granting vote to candidate:

convert to candidate

Candidates (§5.2):

• On conversion to candidate, start election:

• Increment currentTerm

• Vote for self

• Reset election timer

• Send RequestVote RPCs to all other servers

• If votes received from majority of servers: become leader

• If AppendEntries RPC received from new leader: convert to

follower

• If election timeout elapses: start new election

Leaders:

• Upon election: send initial empty AppendEntries RPCs

(heartbeat) to each server; repeat during idle periods to

prevent election timeouts (§5.2)

• If command received from client: append entry to local log,

respond after entry applied to state machine (§5.3)

• If last log index ≥ nextIndex for a follower: send

AppendEntries RPC with log entries starting at nextIndex

• If successful: update nextIndex and matchIndex for

follower (§5.3)

• If AppendEntries fails because of log inconsistency:

decrement nextIndex and retry (§5.3)

• If there exists an N such that N > commitIndex, a majority

of matchIndex[i] ≥ N, and log[N].term == currentTerm:

set commitIndex = N (§5.3, §5.4).

Rules for Servers

Figure 2: A condensed summary of the Raft consensus algorithm (excluding membership changes and log compaction). The server

behavior in the upper-left box is described as a set of rules that t rigger independently and repeatedly. Section numbers such as §5.2

indicate where particular features are discussed. A formal specification [31] describes the algorithm more precisely.

4

剩余17页未读,继续阅读

资源评论

mamil

- 粉丝: 9

- 资源: 6

上传资源 快速赚钱

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜安全验证

文档复制为VIP权益,开通VIP直接复制

信息提交成功

信息提交成功