arXiv:1911.02685v1 [cs.LG] 7 Nov 2019


A Comprehensive Survey on Transfer Learning

Fuzhen Zhuang, Zhiyuan Qi, Keyu Duan, Dongbo Xi, Yongchun Zhu, Hengshu Zhu, Senior Member, IEEE, Hui Xiong, Senior Member, IEEE, and Qing He

Abstract—Transfer learning aims at improving the performance of target learners on target domains by transferring the knowledge contained in different but related source domains. In this way, the dependence on a large amount of target-domain data can be reduced when constructing target learners. Due to its wide application prospects, transfer learning has become a popular and promising area in machine learning. Although there are already some valuable and impressive surveys on transfer learning, these surveys introduce approaches in a relatively isolated way and lack the recent advances in transfer learning. With the rapid expansion of the transfer learning area, it is both necessary and challenging to comprehensively review the relevant studies. This survey attempts to connect and systematize the existing transfer learning research, as well as to summarize and interpret its mechanisms and strategies in a comprehensive way, which may help readers better understand the current research status and ideas. Unlike previous surveys, this survey reviews over forty representative transfer learning approaches from the perspectives of data and model. The applications of transfer learning are also briefly introduced. To show the performance of different transfer learning models, twenty representative transfer learning models are used for experiments. The models are evaluated on three different datasets, i.e., Amazon Reviews, Reuters-21578, and Office-31. The experimental results demonstrate the importance of selecting appropriate transfer learning models for different applications in practice.

Index Terms—Transfer learning, machine learning, domain adaptation, interpretation.


1 INTRODUCTION

Although traditional machine learning technology has achieved great success and has been widely applied in practice, it still has some limitations in certain real-world scenarios. The ideal scenario for machine learning is one with abundant labeled training instances drawn from the same distribution as the test data. However, collecting sufficient training data is often expensive, time-consuming, or even unrealistic in many applications. Semi-supervised learning can partly solve this problem by relaxing the need for massive labeled data. Typically, a semi-supervised approach requires only a limited amount of labeled data and utilizes a large amount of unlabeled data to improve learning accuracy. But in many cases, unlabeled instances are also difficult to collect, which usually makes the resulting traditional models unsatisfactory.

Transfer learning, which focuses on transferring knowledge across domains, is a promising machine learning methodology for solving the above problem. For example, a person who has learned the piano can learn the violin faster than others. Inspired by the human capability to transfer knowledge across domains, transfer learning aims to leverage knowledge from a related domain (called the source domain) to improve the learning performance or minimize the number of labeled examples required in a target domain. It is worth mentioning that the relationship between the source and target domains affects the performance of transfer learning models. Intuitively, a person who has learned the viola usually learns the violin faster than one who has learned the piano. In contrast, if there is little in common between the domains, the learner is particularly likely to be negatively affected by the transferred knowledge. This phenomenon is termed negative transfer.

• Fuzhen Zhuang, Zhiyuan Qi, Keyu Duan, Dongbo Xi, Yongchun Zhu, and Qing He are with the Key Laboratory of Intelligent Information Processing of Chinese Academy of Sciences (CAS), Institute of Computing Technology, CAS, Beijing 100190, China and the University of Chinese Academy of Sciences, Beijing 100049, China.
• Hengshu Zhu is with Baidu Inc., No. 10 Shangdi 10th Street, Haidian District, Beijing, China.
• Hui Xiong is with Rutgers, the State University of New Jersey, 1 Washington Park, Newark, New Jersey, USA.
• Zhiyuan Qi contributed equally with the first author.

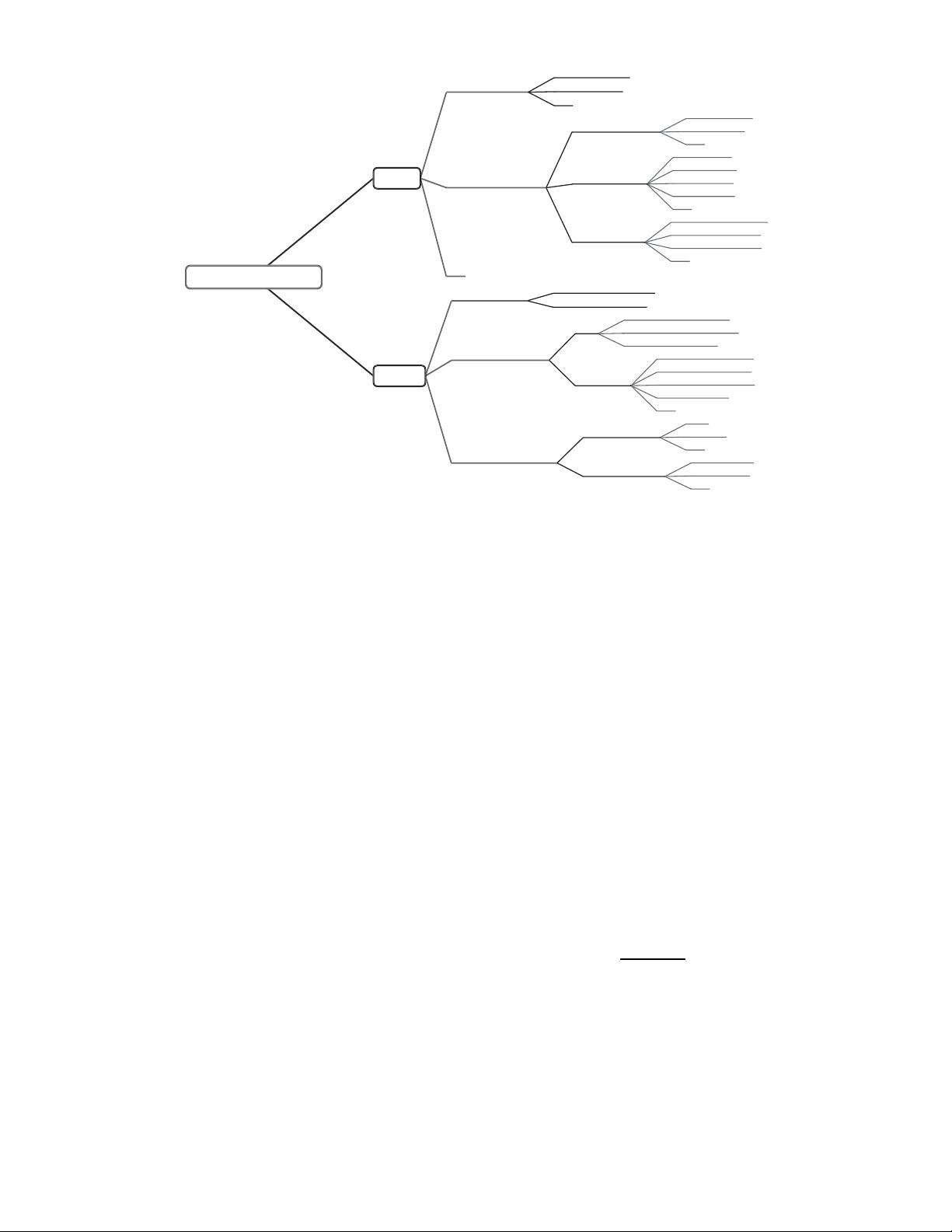

Roughly speaking, according to the discrepancy between domains, transfer learning can be further divided into two categories, i.e., homogeneous and heterogeneous transfer learning [1]. Homogeneous transfer learning approaches are designed to handle situations in which the domains share the same feature space. Some studies assume that the domains differ only in their marginal distributions. Therefore, they adapt the domains by correcting the sample selection bias [2] or covariate shift [3]. However, this assumption does not hold in many cases. For example, in sentiment classification, a word may carry different sentiment polarities in different domains. This phenomenon is also called context feature bias [4]. To solve this problem, some studies further adapt the conditional distributions.

Heterogeneous transfer learning refers to the knowledge transfer process in situations where the domains have different feature spaces. In addition to distribution adaptation, heterogeneous transfer learning requires feature space adaptation [4], which makes it more complicated than homogeneous transfer learning.
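Covariate-shift correction, mentioned above for the homogeneous setting, is commonly done by instance reweighting. The following is a minimal Python sketch of that idea, not a method taken from this survey. It assumes one-dimensional Gaussian marginals with known densities, a source domain N(0, 1) and a target domain N(1, 1), and weights each source sample by the density ratio p_T(x)/p_S(x), which here simplifies to exp(x - 1/2). Weighted averages over source data then estimate target-domain expectations. The helper names (`gaussian_pdf`, `importance_weight`, `weighted_mean`) and the chosen distributions are hypothetical. In practice, the ratio must be estimated from data.

```python
import math
import random


def gaussian_pdf(x: float, mu: float, sigma: float) -> float:
    """Density of N(mu, sigma^2) evaluated at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def importance_weight(x: float, source: tuple, target: tuple) -> float:
    """Density ratio p_T(x) / p_S(x).

    `source` and `target` are hypothetical (mu, sigma) pairs. Known Gaussian
    densities are an illustrative assumption; real methods estimate the ratio.
    """
    return gaussian_pdf(x, *target) / gaussian_pdf(x, *source)


def weighted_mean(values: list, weights: list) -> float:
    """Self-normalized importance-weighted average of per-instance values."""
    total = sum(weights)
    return sum(v * w for v, w in zip(values, weights)) / total


if __name__ == "__main__":
    rng = random.Random(0)
    source, target = (0.0, 1.0), (1.0, 1.0)
    # Only source-domain samples are available; x itself stands in for
    # any per-instance quantity (e.g., a loss) whose target mean we want.
    xs = [rng.gauss(*source) for _ in range(100_000)]
    ws = [importance_weight(x, source, target) for x in xs]
    print("unweighted mean:", sum(xs) / len(xs))      # near the source mean, 0
    print("reweighted mean:", weighted_mean(xs, ws))  # near the target mean, 1
```

Because these weights correct only the marginal distribution p(x), this reweighting assumes that the conditional distribution P(y|x) is shared across domains. It therefore does not address the context feature bias described above, which calls for conditional-distribution adaptation.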

This survey aims to give readers a comprehensive understanding of transfer learning from the perspectives of data and model. The mechanisms and strategies of transfer learning approaches are introduced to help readers grasp how these approaches work. In addition, a number of existing transfer learning studies are connected and systematized. Specifically, over forty representative transfer learning approaches are introduced. Moreover, we conduct