UNCORRECTED

PROOF

1

2

Neighborhood classifiers

3

Qinghua Hu

*

, Daren Yu, Zongxia Xie

4

Harbin Institute of Technology, Harbin 150001, People’s Republic of China

5

6

Abstract

7

K nearest neighbor classifier (K-NN) is widely discussed and applied in pattern recognition and machine learning, however, as a

8

similar lazy classifier using local information for recognizing a new test, neighborhood classifier, few literatures are reported on. In this

9

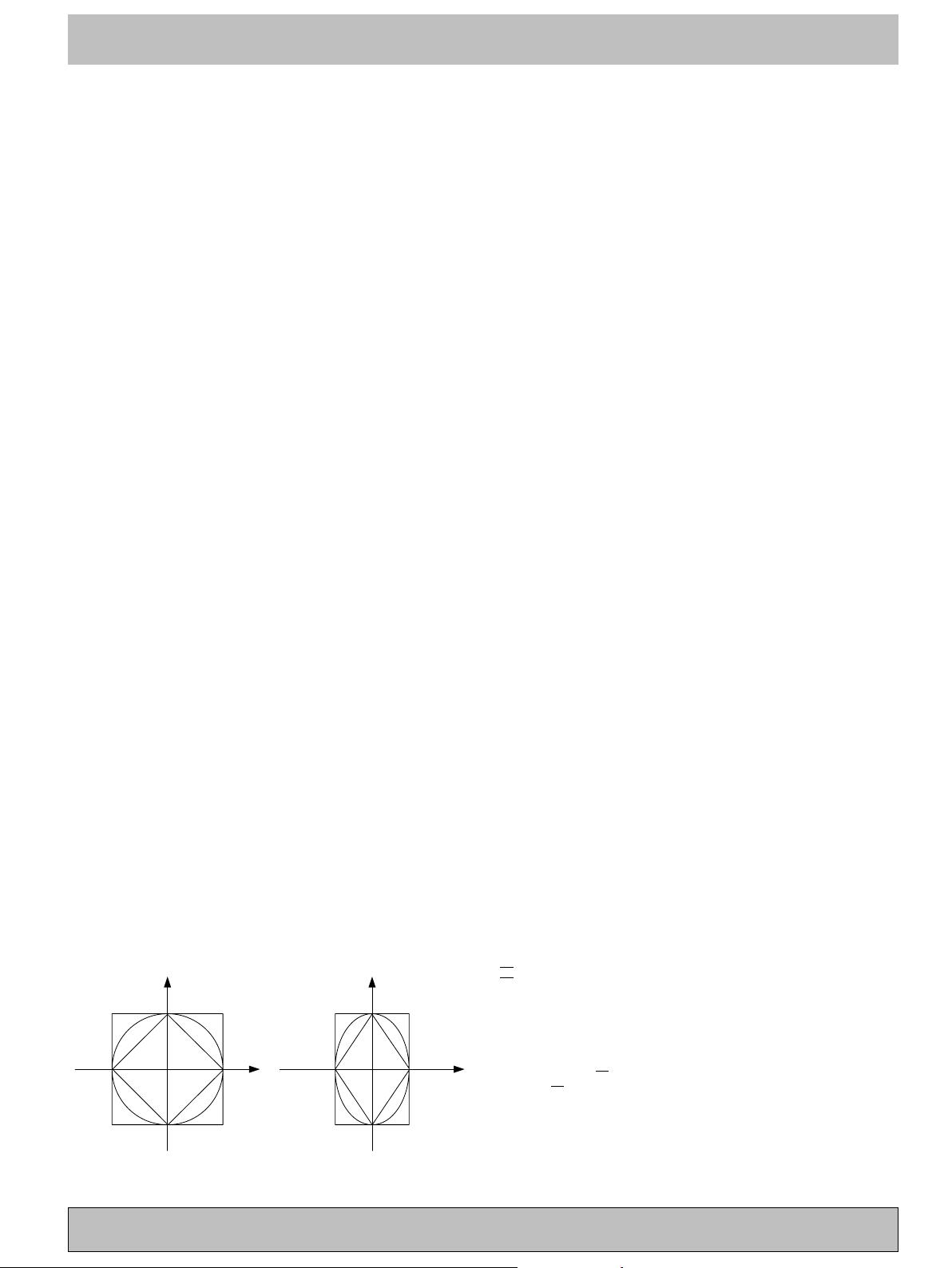

paper, we introduce neighborhood rough set model as a uniform framework to understand and implement neighborhood classifiers. This

10

algorithm integrates attribute reduction technique with classification learning. We study the influence of the three norms on attribute

11

reduction and classification, and compare neighborhood classifier with KNN, CART and SVM. The experimental results show that

12

neighborhood-based feature selection algorithm is able to delete most of the redundant and irrelevant features. The classification accu-

13

racies based on neighborhood classifier is superior to K-NN, CART in original feature spaces and reduced feature subspaces, and a little

14

weaker than SVM.

15

2006 Elsevier Ltd. All rights reserved.

16

Keywords: Metric space; Neighborhood; Rough set; Reduction; Classifier; Norm

17

18 1. Introduction

19 Giving a set of samples U, described with some input

20 variables C (also called condition attributes, feature) and

21 an output D (decision), the task of classification learning

22 is to construct a mapping from the condition to the deci-

23 sion labels based on the set of training samples. One of

24 the most popular learni ng and classification techniques is

25 the nearest neighbor search, introduced by Fix and Hodges

26 (1951). It has been proven to be a simple and yet powerful

27 recognition algorithm. In 1967, Cover and Hart (1967)

28 showed, under some con tinuity assumptions on the under-

29 lying distributions, that the asymptotic error rate of the 1-

30 NN rule is bounded from above by twice the Bayes error

31 (the error of the best possible rule). What is more, a key

32 feature of this decision rule is that it performs remarkably

33 well considering that no explicit knowledge of the underly-

34 ing distributions of the data is used. Furthermore, a simple

35generalization of this method, called K-NN-rule, in which a

36new pattern is classified into the class with the most mem-

37bers present among the K nearest neighbors, can be used to

38obtain good estimates of the Bayes error and its probability

39of error asymptotically approaches the Bayes error (Duda

40& Hart, 1973). However, K-NN classifiers require comput-

41ing all the distances between the training set and test sam-

42ples, it is time-consuming if the available samples are of

43very great size. Besides, when the number of prototypes

44in the training set is not large enough, the K-NN rule is

45no longer optimal. This problem becomes more relevant

46when having few prototypes compared to the intrinsic

47dimensionality of the feature space. After half century, a

48wide variety of algorithms have been developed to deal

49with these problems (Anil, 2006; Fu, Chan, & Cheung,

502000; Fukunaga & Narendra, 1975; Hart, 1968; Kuncheva

51& Lakhmi, 1999; Kushilevitz, Ostrovsky, & Rabani, 2000;

52Lindenbaum, Markovitch, & Rusakov, 2004; Short &

53Fukunaga, 1981; Vidal, 1986; Wilson & Martinez, 2000;

54Zhang, Yan, & Chen, 2004; Zhou, Yan, & Chen, 2006).

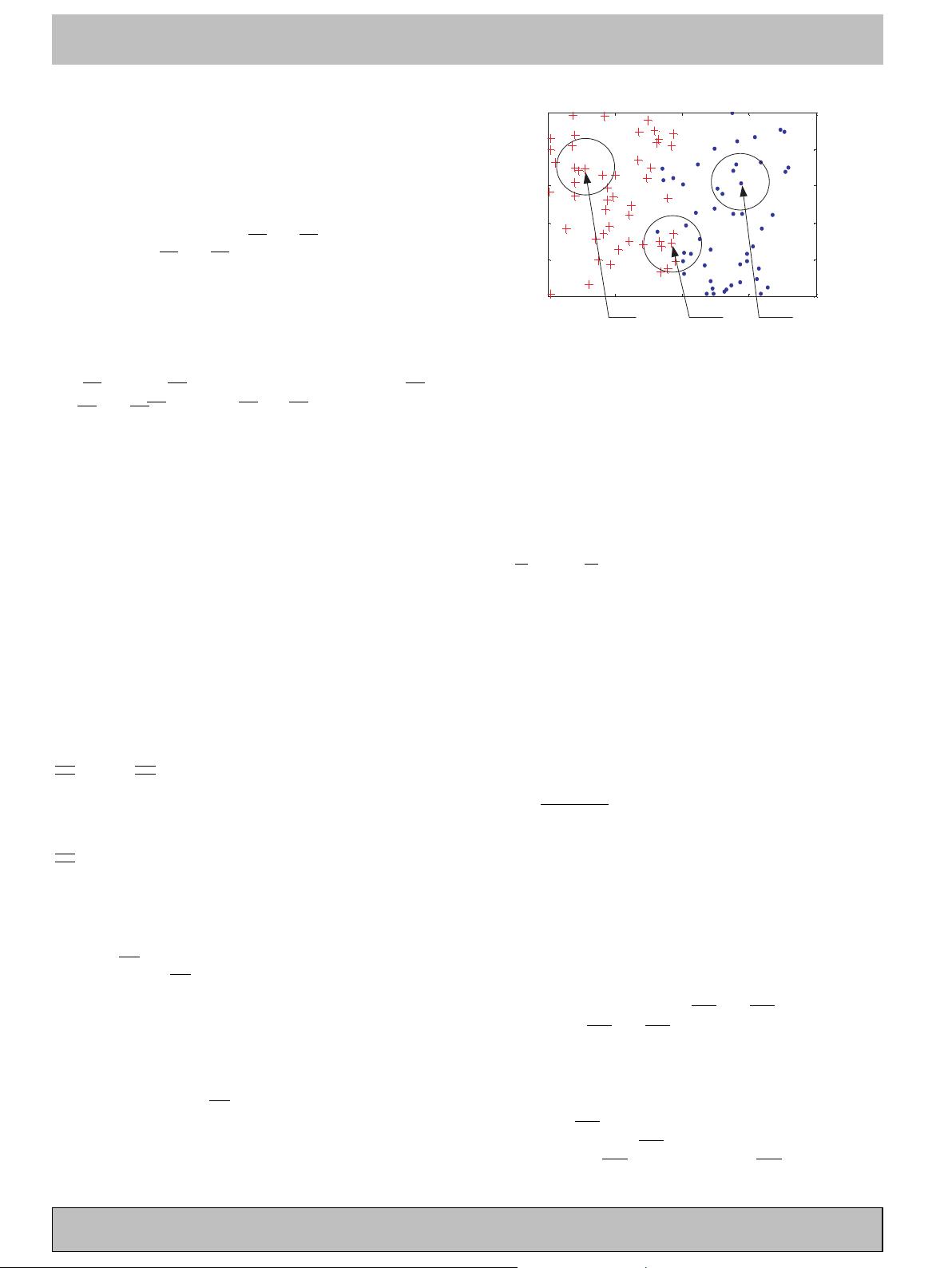

55From another viewpoint, some classification algorithms

56based on neighborhood were propo sed, where a ne w sam-

57ple is associated wi th a neighborhood, rather than some

0957-4174/$ - see front matter 2006 Elsevier Ltd. All rights reserved.

doi:10.1016/j.eswa.2006.10.043

*

Corresponding author. Tel.: +86 451 86413241 252; fax: +86 451

86413241 221.

E-mail address: huqinghua@hcms.hit.edu.cn (Q. Hu).

www.elsevier.com/locate/eswa

Expert Systems with Applications xxx (2006) xxx–xxx

Expert Systems

with Applications

ESWA 1881 No. of Pages 11, Model 5+

1 December 2006 Disk Used

ARTICLE IN PRESS

Please cite this article in press as: Hu, Q. et al., Neighborhood classifiers, Expert Systems with Applications (2006), doi:10.1016/

j.eswa.2006.10.043