3.2 Smoothness and the Curse of Dimensionality

For AI tasks such as vision and NLP, it seems hopeless to

rely only on simple parametric models (such as linear

models) because they cannot capture enough of the

complexity of interest unless provided with the appropriate

feature space. Conversely, machine learning researchers

have sought flexibility in local

6

nonparametric learners such as

kernel machines with a fixed generic local-response kernel

(such as the Gaussian kernel). Unfortunately, as argued at

length by Bengio and Monperrus [17], Bengio et al. [21],

Bengio and LeCun [16], Bengio [11], and Bengio et al. [25],

most of these algorithms only exploit the principle of local

generalization, i.e., the assumption that the target function (to

be learned) is smooth enough, so they rely on examples to

explicitly map out the wrinkles of the target function. General-

ization is mostly achieved by a form of local interpolation

between neighboring training examples. Although smooth-

ness can be a useful assumption, it is insufficient to deal

with the curse of dimensionality because the number of such

wrinkles (ups and downs of the target function) may grow

exponentially with the number of relevant interacting

factors when the data are represented in raw input space.

We advocate learning algorithms that are flexible and

nonparametric,

7

but do not rely exclusively on the smooth-

ness assumption. Instead, we propose to incorporate generic

priors such as those enumerated above into representation-

learning algorithms. Smoothness-based learners (such as

kernel machines) and linear models can still be useful on top

of such learned representations. In fact, the combination of

learning a representation and kernel machine is equivalent

to learning the kernel, i.e., the feature space. Kernel machines

are useful, but they depend on a prior definition of a suitable

similarity metric or a feature space in which naive similarity

metrics suffice. We would like to use the data, along with

very generic priors, to discover those features or, equiva-

lently, a similarity function.

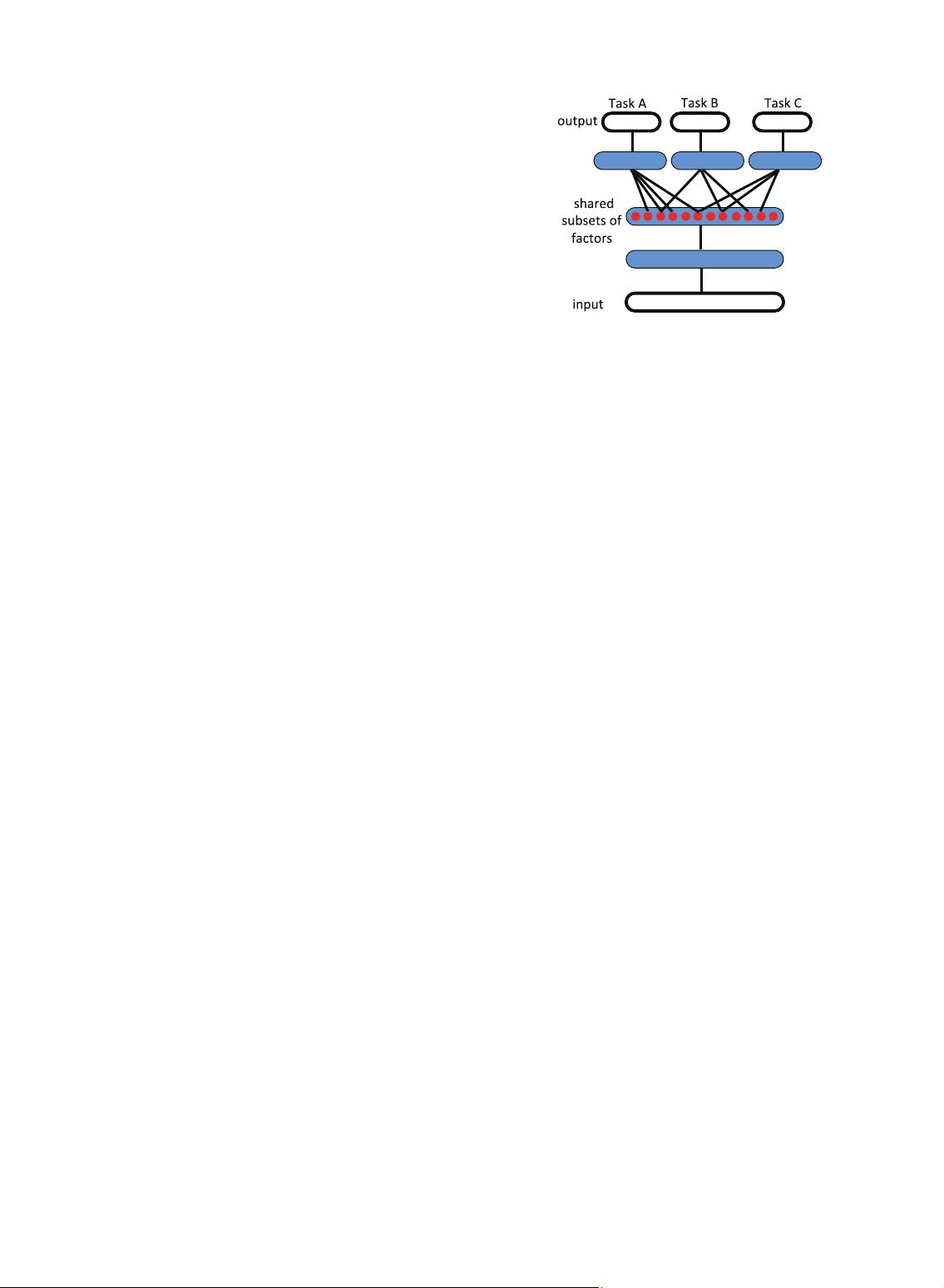

3.3 Distributed Representations

Good representations are expressive, meaning that a reason-

ably sized learned representation can capture a huge

number of possible input configurations. A simple counting

argument helps us to assess the expressiveness of a model

producing a representation: How many parameters does it

require compared to the number of input regions (or

configurations) it can distinguish? Learners of one-hot

representations, such as traditional clustering algorithms,

Gaussian mixtures, nearest-neighbor algorithms, decision

trees, or Gaussian SVMs, all require OðNÞ parameters (and/

or OðNÞ examples) to distinguish OðNÞ input regions. One

could naively believe that one cannot do better. However,

restricted Boltzmann machines (RBMs), sparse coding,

autoencoders, or multilayer neural networks can all

represent up to Oð2

k

Þ input regions using only OðNÞ

parameters (with k the number of nonzero elements in a

sparse representation, and k ¼ N in nonsparse RBMs and

other dense representations). These are all distributed

8

or

sparse

9

representations. The generalization of clustering to

distributed representations is multiclustering, where either

several clusterings take place in parallel or the same

clustering is applied on different parts of the input, such

as in the very popular hierarchical feature extraction for

object recognition based on a histogram of cluster categories

detected in different patches of an image [120], [48]. The

exponential gain from distributed or sparse representations

is discussed further in [11, Section 3.2, Fig. 3.2]. It comes

about because each parameter (e.g., the parameters of one of

the units in a sparse code or one of the units in an RBM) can

be reused in many examples that are not simply near

neighbors of each other, whereas with local generalization,

different regions in input space are basically associated with

their own private set of parameters, for example, as in

decision trees, nearest neighbors, Gaussian SVMs, and so

on. In a distributed representation, an exponentially large

number of possible subsets of features or hidden units can be

activated in response to a given input. In a single-layer

model, each feature is typically associated with a preferred

input direction, corresponding to a hyperplane in input

space, and the code or representation associated with that

input is precisely the pattern of activation (which features

respond to the input, and how much). This is in contrast

with a nondistributed representation such as the one

learned by most clustering algorithms, for example,

k-means, in which the representation of a given input

vector is a one-hot code identifying which one of a small

number of cluster centroids best represents the input.

10

3.4 Depth and Abstraction

Depth is a key aspect to the representation learning strategies

we consider in this paper. As we will discuss, deep

architectures are often challenging to train effectively, and

this has been the subject of much recent research and

progress. However, despite these challenges, they carry two

significant advantages that motivate our long-term interest in

discovering successful training strategies for deep architec-

tures. These advantages are: 1) deep architectures promote

the reuse of features, and 2) deep architectures can potentially

lead to progressively more abstract features at higher layers of

representations (more removed from the data).

Feature reuse. The notion of reuse, which explains the

power of distributed representations, is also at the heart of

the theoretical advantages behind deep learning, i.e.,

BENGIO ET AL.: REPRESENTATION LEARNING: A REVIEW AND NEW PERSPECTIVES 1801

6. Local in the sense that the value of the learned function at x depends

mostly on training examples x

ðtÞ

s close to x.

7. We understand nonparametric as including all learning algorithms

whose capacity can be increased appropriately as the amount of data

and its complexity demands it, for example, including mixture models

and neural networks where the number of parameters is a data-

selected hyperparameter.

8. Distributed representations, where k out of N representation

elements or feature values can be independently varied; for example,

they are not mutually exclusive. Each concept is represented by having k

features being turned on or active, while each feature is involved in

representing many concepts.

9. Sparse representations: distributed representations where only a few

of the elements can be varied at a time, i.e., k<N.

10. As discussed in [11], things are only slightly better when allowing

continuous-valued membership values, for example, in ordinary mixture

models (with separate parameters for each mixture component), but the

difference in representational power is still exponential [149]. The situation

may also seem better with a decision tree, where each given input is

associated with a one-hot code over the tree leaves, which deterministically

selects associated ancestors (the path from root to node). Unfortunately, the

number of different regions represented (equal to the number of leaves of

the tree) still only grows linearly with the number of parameters used to

specify it [15].

我的内容管理

展开

我的内容管理

展开

我的资源

快来上传第一个资源

我的资源

快来上传第一个资源

我的收益 登录查看自己的收益

我的收益 登录查看自己的收益 我的积分

登录查看自己的积分

我的积分

登录查看自己的积分

我的C币

登录后查看C币余额

我的C币

登录后查看C币余额

我的收藏

我的收藏  我的下载

我的下载  下载帮助

下载帮助

前往需求广场,查看用户热搜

前往需求广场,查看用户热搜 信息提交成功

信息提交成功