IEEE TRANSACTIONS ON CIRCUITS AND SYSTEMS FOR VIDEO TECHNOLOGY, VOL. 20, NO. 3, MARCH 2010 351

Automatic Detection and Analysis of Player Action in Moving Background Sports Video Sequences

Haojie Li, Jinhui Tang, Member, IEEE, Si Wu, Yongdong Zhang, and Shouxun Lin, Member, IEEE

Abstract—This paper presents a system for automatically detecting and analyzing complex player actions in moving background sports video sequences, aiming at action-based sports video indexing and providing kinematic measurements for coach assistance and performance improvement. The system works in a coarse-to-fine fashion. For an input video, at the coarse granularity level, we automatically segment the highlights, that is, the video clips containing the desired action, as summaries for general user viewing purposes; at the middle granularity level, we recognize the action types to support action-based video indexing and retrieval; and finally, at the fine granularity level, the critical kinematic parameters of player action are obtained for sports professionals' training purposes. However, the complex and dynamic background of sports videos and the complexity of player actions bring considerable difficulty to the automatic analysis. To fulfill such a challenging task, robust algorithms including global motion estimation with adaptive outlier filtering, object segmentation based on adaptive background construction, and automatic human body tracking are proposed in this paper. Two visual analysis tools, motion panorama and overlay composition, are also introduced. Real diving and jump game videos are used to test the proposed system and algorithms, and the extensive and encouraging experimental results show their effectiveness.

Index Terms—Action recognition, human body tracking, sports

training, video analysis, video object segmentation.

I. Introduction

WITH THE EXPLOSIVE growth of digital videos in our daily life, automatic video content analysis has become a basic requirement for efficient indexing and retrieval of long video sequences. In recent years, the analysis of sports videos has attracted great attention due to its mass appeal and tremendous commercial potential. Many studies have been conducted, and technologies and systems have been developed, for automatically or semi-automatically parsing the structure of sports

Manuscript received October 9, 2008; revised May 10, 2009. First version

published November 3, 2009; current version published March 5, 2010. This

work was supported in part by the National Basic Research Program of China

(Grant No. 2007CB311100), and the Co-building Program of the Beijing

Municipal Education Commission. This paper was recommended by Associate

Editor T. Fujii.

H. Li is with the School of Software, Dalian University of Technology,

Dalian 116620, China.

J. Tang is with the School of Computing, National University of Singapore,

117590 Singapore (e-mail: tangjh@comp.nus.edu.sg).

S. Wu is with France Telecom Research and Development Beijing, Beijing

100080, China.

Y. Zhang and S. Lin are with the Institute of Computing Technology,

Chinese Academy of Sciences, Beijing 100080, China.

Color versions of one or more of the figures in this paper are available

online at http://ieeexplore.ieee.org.

Digital Object Identifier 10.1109/TCSVT.2009.2035833

video [1], [2], semantic event detection and video summarization [3], [4], enhanced sports TV broadcasting [5], and content insertion [6]. The difficulty of video analysis is the semantic

gap between the low-level audio-visual features and high-level

concepts. To bridge the gap, some mid-level representations

are constructed based on low-level features with clustering

or classification methods [7]. However, these representations

are extracted from frame-based approaches and have no direct

link to high-level semantics. Moreover, they can only deduce

coarse-level knowledge of the video contents for general user

viewing purposes. Object behavior is another, more effective type of mid-level representation for video content analysis [8], [9]. At the same time, sports professionals such as coaches and players want finer-granularity analysis that yields more detailed information from videos, such as action names, match tactics, and kinematic or biometric measurements, for coaching assistance and performance improvement. For example, by automatically recognizing the actions and obtaining player body joint angles in diving game videos, the coaches or players can easily retrieve and compare, qualitatively or quantitatively, the performed actions with the same ones performed by elite players in a video database, and then improve their

performance in later training or competition. To these ends,

this paper addresses the automatic detection, recognition, and

analysis of player actions from broadcast sports game videos

or videos recorded during daily training. More specifically,

we focus on one sports genre where the player performs

his or her action in a large arena and the camera needs to

be operated with pan/tilt/zoom to capture the player in the

middle of the image. Usually, one or more cameras are placed

in the side-view to record the entire detailed action. This

includes a broad category of individual sports videos, such

as diving, jumps, gymnastics videos, and so on (see Fig. 1).

For this kind of action-critical sports video, general users

would like to rapidly locate and watch the highlights, namely,

the video clips containing the desired action, while sports

professionals will be more interested in the performances of

the players. Manual analysis of sports videos to achieve such

aims is labor-intensive and time-consuming. Therefore, systems and techniques that can automatically parse long videos into browsable actions and further provide kinematic measurements for performance analysis are in demand.

In this paper, we present an integrated coarse-to-fine sports video analysis system and various robust algorithms. Diving videos are used as a case study to demonstrate the effectiveness of the system and algorithms for the following reasons:

1051-8215/$26.00 © 2010 IEEE


Fig. 1. Some individual sports actions captured with a side-view camera.

From left to right: diving, long jump, and tumbling.

1) diving is one of the most popular spectator sports; 2) to make the player actions clearly visible to the referees and the audience, there are always side-view shots in diving videos; and 3) the complex and moving background of diving videos and the complexity of diving actions make the automatic analysis a rather challenging task. To show the generality of the proposed algorithms, we also test some key algorithms on jump videos.

A. Related Work

Human action detection and recognition in videos is a hot topic in the computer vision research community, and various approaches have been proposed [10], [11]. In the field of sports video, however, due to the dynamic background and the complexity of sports actions, few works exist, and most of them focus either on action recognition against a simple background, e.g., tennis or soccer videos, or on relatively simple action recognition. These works either made little effort to segment the player's body or did not need accurate tracking and segmentation of the players. One of the earlier works on sports

action recognition was provided by Yamato et al. in [12].

They proposed using discrete hidden Markov models (HMMs)

to recognize image sequences of six different tennis strokes

among three subjects. Their system worked in a constrained

testing environment where the mesh features they used were

extracted from binarized human images which were obtained

from a pre-known background. Sullivan et al. [13] presented

a method for detecting tennis players’ strokes based on sim-

ilarity computed by point-to-point correspondence between

shapes. Although impressive results in terms of posture matching were given, the edge extraction needed a clean background. Miyamori et al. [8] analyzed tennis player behaviors based on silhouette transitions. They extracted the player silhouette images by frame differencing, so a static background was necessary. Efros et al. [14] developed a generic

approach to recognizing action in “medium field” sports video

by introducing a novel motion descriptor based on noisy op-

tical flow measurements in a spatio-temporal volume for each

stabilized human figure. Similarly, Zhu et al. [9] proposed slice

based optical flow histograms as motion descriptor to classify

player’s basic actions such as left-swing/right-swing in low

resolution tennis video sequence. Lu et al. [15] used the grids

of histograms of oriented gradient as representation of players

to track and recognize player actions like skate left/skate right

and running/walking in hockey and soccer sequences. The

limitation of the above three works [9], [14], [15] is that they

only presented results with relatively simple actions and simple

background such as soccer field, tennis court, or hockey field.

Compared with these previous works, the task in this paper,

to automatically detect and classify more complex actions in

video captured with moving camera, is more challenging.

Recent advances in video technology and computing power

have motivated the research of using computer vision and

image processing techniques to analyze sports game video

for tactics statistics, computer-aided coaching, or performance

improvements. Compared with previous methods that require retro-reflective markers or magnetic sensors to be placed on the player's body, the video-based approach has several advantages: it costs much less, does not interfere with the player's performance, and can analyze the rich archives of video clips. Among such

works, Pascual et al. [16] developed a method for soccer player

position tracking aiming at the kinematical motion analysis

through a graph representation with four static cameras. Aided by manual tracking in some cases, their method could collect statistical measurements of each player in the game.

Wang et al. [17] classified tennis games into 58 winning

tactics patterns for archiving video clips and training purposes

using the ball trajectory and Bayesian network. To recover the

trajectory and ball landing position they turned to a wide-view

calibrated camera. The widely-studied human body model

based tracking approach has also been suggested for sports

biometric analysis. As shown in [18], a 42-dimensional body

model was used to track the golfer’s postural information and

then the obtained information was analyzed with respect to

a learned ideal motion. Nevertheless, their system ran so slowly that each frame took about 25 min to process, and the initial parameters of the body model needed to be set up manually before tracking. Raquel et al. [19] incorporated strong dynamic models into the human body tracking process to recover the 3-D postural parameters of the golfer from monocular golf videos. One similar work to our player action

analysis was provided by Ryan et al. [20]. In their system,

the authors analyzed the acrobatic gestures of several sports

through modeling and characterizing acrobatic movements and

image processing techniques. However, their work was less challenging than ours since the player movements were captured with a static camera and they only recognized simple acrobatic gestures by analyzing global measurements.

B. Contributions of This Paper

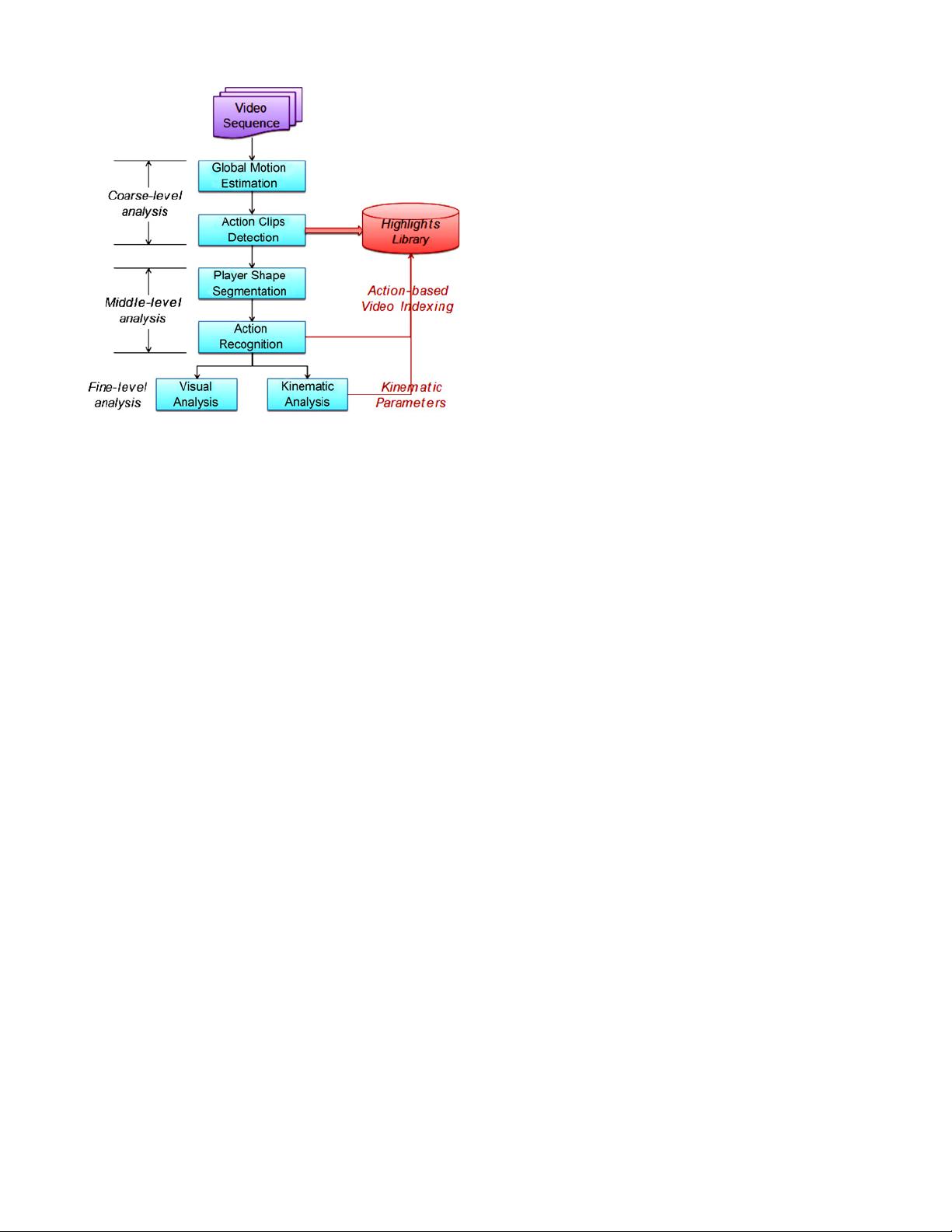

In this paper, we develop robust algorithms and a system

for fully automatic analysis of complex actions in challeng-

ing dynamic background videos, aiming at high-level sports

video indexing/retrieval and training purposes. The schematic

diagram of the proposed system is illustrated in Fig. 2. For an input video, global motion parameters between adjacent frames are first estimated and used as the motion feature for detecting the highlights, that is, the action clips. The detected highlights are stored in a library as video summaries for quick browsing by users. Then the player body shapes in action

clips are segmented and fed into hidden Markov models to

recognize the action type, which is used to index the highlight

library. With the segmented shape and recognized action type,

some critical kinematic parameters of action are automatically

obtained through the kinematic analysis component, and visual

analysis is conducted.


Fig. 2. Block diagram of the proposed system.

The contributions of our paper consist of the following

points.

1) An integrated framework for automatic analysis of sports video in a coarse-to-fine fashion is presented, which attempts to extract semantic information at different granularities to satisfy the retrieval requirements of users ranging from general viewers to sports professionals.

2) To robustly estimate the global motion between adjacent video frames, we propose an adaptive outlier filtering strategy using Fisher linear discriminant analysis.

3) An object segmentation algorithm based on adaptive dynamic background construction is proposed, which is robust to complex and dynamic scenes.

4) The automatic kinematic analysis of player body movements is achieved by model fitting. By transferring the initial model parameters from the recognized action templates, our method avoids the manual setup of the human body model.

5) With the enabling techniques above, we present two visual tools for individual sports game training, motion panorama and overlay composition, which support intuitive visual analysis and comparison of player performance.

II. Global Motion Estimation

Global motion (GM) refers to the motion of the background in a video sequence caused by camera motion. Global motion estimation (GME) is the process of estimating the rotation, scaling, and translation parameters of the camera motion by comparing two different frames of a video; it is the basic technique underlying the subsequent algorithms of this paper. Methods for GME fall into two categories: differential methods [21], [22] and feature-matching-based methods [23]. For methods in both categories, accuracy is affected by so-called outliers, that is, measurements whose motion is not consistent with the global motion, mainly caused by local object (such as player) motion. Some robust estimation methods have been developed to handle outliers [21]–[23]. However, these methods use either experimentally determined or manually specified thresholds to remove outliers, and thus do not adapt to other data. In this paper, we propose an adaptive outlier filtering strategy using Fisher linear discriminant analysis [24] to improve the robustness of GME.

In this paper, we adopt the 6-parameter affine model [25] to describe the camera motion, since it is computationally efficient yet powerful enough to model the translation, rotation, and scaling operations of the camera when the relative depth of the object is not large, a condition that holds for most videos, including our case.

Let $u_i = (x_i, y_i)^T$ be the position of a point $p$ in the current frame $I_k$, and $u'_i = (x'_i, y'_i)^T$ be the position of $p$ in frame $I_{k-1}$, then we can build the relation between $u_i$ and $u'_i$ using the affine model as follows:

$$u'_i = H_i A \tag{1}$$

where $A = (a, b, c, d, e, f)^T$ is the global motion parameter and $H_i$ is a $2 \times 6$ matrix

$$H_i = \begin{pmatrix} x_i & y_i & 1 & 0 & 0 & 0 \\ 0 & 0 & 0 & x_i & y_i & 1 \end{pmatrix}. \tag{2}$$

Assume we have $N$ feature point pairs $(u_i, u'_i)$ $(i = 1, \ldots, N;\ N \ge 3)$ from frames $I_k$ and $I_{k-1}$, then we have

$$U = HA \tag{3}$$

where $H = (H_1^T, H_2^T, \ldots, H_N^T)^T$ and $U = ({u'_1}^T, {u'_2}^T, \ldots, {u'_N}^T)^T$. Thus $A$ can be obtained by solving (3) using the least squares method.
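The least-squares step in (1)–(3) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names `build_design_matrix` and `estimate_affine` are hypothetical, and the points are assumed to be given as arrays of shape (N, 2) holding (x, y) coordinates.

```python
import numpy as np

def build_design_matrix(points):
    """Stack the 2x6 blocks H_i of Eq. (2) into a (2N, 6) matrix H."""
    pts = np.asarray(points, dtype=float)
    n = pts.shape[0]
    H = np.zeros((2 * n, 6))
    H[0::2, 0:2] = pts   # even rows: (x_i, y_i, 1, 0, 0, 0)
    H[0::2, 2] = 1.0
    H[1::2, 3:5] = pts   # odd rows:  (0, 0, 0, x_i, y_i, 1)
    H[1::2, 5] = 1.0
    return H

def estimate_affine(points_k, points_k_minus_1):
    """Least-squares solution A = (a, b, c, d, e, f) of U = H A, Eq. (3).

    points_k holds the positions u_i in frame I_k, points_k_minus_1 the
    matching positions in frame I_{k-1}. At least 3 pairs are needed.
    """
    H = build_design_matrix(points_k)
    # Flatten to (x'_1, y'_1, x'_2, y'_2, ...) to match the row order of H.
    U = np.asarray(points_k_minus_1, dtype=float).reshape(-1)
    A, *_ = np.linalg.lstsq(H, U, rcond=None)
    return A
```

With noise-free pairs generated from a known parameter vector, `estimate_affine` should recover that vector up to floating-point error; with real matches, the estimate is only as good as the proportion of inliers, which is what motivates the outlier filtering discussed next.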

The feature point pairs are constructed as follows. We first calculate the global standard deviation, gstd, of pixel values in frame $I_k$. Then we scan frame $I_k$ and check each $n \times n$ block. If the standard deviation, std, of a block is large enough, that is, larger than $\alpha \cdot$ gstd, the upper left corner of the block is selected as $u_i$. The corresponding $u'_i$ is obtained by searching nearby blocks in frame $I_{k-1}$. In this way, we collect all the point pairs between $I_k$ and $I_{k-1}$.
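The block selection and matching just described might look like the following sketch. Several details are assumptions rather than facts from the text: the blocks are scanned on a non-overlapping grid, the match is found by minimizing the sum of absolute differences (the matching criterion is not stated here), and the names `select_feature_blocks`, `match_block`, and the `radius` parameter are hypothetical. Frames are assumed to be 2-D grayscale arrays.

```python
import numpy as np

def select_feature_blocks(frame, n=8, alpha=1.0):
    """Return (x, y) upper-left corners of n x n blocks with std > alpha * gstd."""
    frame = np.asarray(frame, dtype=float)
    gstd = frame.std()  # global standard deviation of pixel values
    h, w = frame.shape
    corners = []
    for y in range(0, h - n + 1, n):      # assumed: non-overlapping grid
        for x in range(0, w - n + 1, n):
            if frame[y:y + n, x:x + n].std() > alpha * gstd:
                corners.append((x, y))
    return corners

def match_block(cur, prev, corner, n=8, radius=4):
    """Search prev within +/- radius pixels for the block most similar to
    the block of cur at `corner` (assumed criterion: sum of absolute
    differences). Returns the (x, y) corner of the best match."""
    cur = np.asarray(cur, dtype=float)
    prev = np.asarray(prev, dtype=float)
    x0, y0 = corner
    ref = cur[y0:y0 + n, x0:x0 + n]
    h, w = prev.shape
    best, best_cost = corner, np.inf
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            x, y = x0 + dx, y0 + dy
            if 0 <= x <= w - n and 0 <= y <= h - n:
                cost = np.abs(prev[y:y + n, x:x + n] - ref).sum()
                if cost < best_cost:
                    best, best_cost = (x, y), cost
    return best
```

Selecting only high-variance blocks keeps textured regions, where a local search has a well-defined minimum, and skips flat regions such as water or sky, where any nearby block would match equally well.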

Given an initial solution $A^*$ for the global motion, we calculate the residual error for each pair $(u_i, u'_i)$ as

$$r_i = u'_i - H_i A^*. \tag{4}$$

Since $A^*$ is the approximate solution to GM and the motion of outliers is not consistent with GM, the residual errors of outliers will be larger than those of inliers. Therefore, we can separate the point pairs into inliers and outliers according to their residual errors and then use the inliers to refine $A$. Let $R = \{|r_1|, \ldots, |r_N|\}$ be the residual error set for the point pair set $\{(u_1, u'_1), \ldots, (u_N, u'_N)\}$, and let $R_{in}$ and $R_{out}$ be the residual error sets for inliers and outliers. The key problem is to find an appropriate threshold $T$ such that

$$R_{in} = \{|r_i| : |r_i| < T\}, \quad R_{out} = \{|r_i| : |r_i| \ge T\}$$

$$\text{subject to } R_{in} \cup R_{out} = R \ \text{and}\ R_{in} \cap R_{out} = \emptyset. \tag{5}$$
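The residual computation in (4) and the partition in (5) can be illustrated as follows. This sketch takes the threshold $T$ as a given input; it does not reproduce the adaptive, Fisher-discriminant-based choice of $T$ that the paper proposes. It also assumes that $|r_i|$ is the Euclidean norm of the 2-D residual, which the text does not state explicitly. The function names are hypothetical.

```python
import numpy as np

def residual_magnitudes(points_k, points_k_minus_1, A):
    """|r_i| for r_i = u'_i - H_i A, Eq. (4), with A = (a, b, c, d, e, f).

    Assumes |r_i| is the Euclidean norm of the 2-D residual vector.
    """
    p = np.asarray(points_k, dtype=float)
    q = np.asarray(points_k_minus_1, dtype=float)
    a, b, c, d, e, f = A
    # H_i A written out: (a*x + b*y + c, d*x + e*y + f)
    predicted = np.column_stack((a * p[:, 0] + b * p[:, 1] + c,
                                 d * p[:, 0] + e * p[:, 1] + f))
    return np.linalg.norm(q - predicted, axis=1)

def split_by_threshold(residuals, T):
    """Boolean masks for R_in (|r_i| < T) and R_out (|r_i| >= T), Eq. (5)."""
    residuals = np.asarray(residuals, dtype=float)
    inliers = residuals < T
    return inliers, ~inliers
```

Because the two masks are complements of each other, the union and disjointness conditions in (5) hold by construction; the point pairs selected by the inlier mask can then be passed to a second least-squares fit to refine $A$.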

Assume that the mean for $R_{in}$ is $\mu_{in}$, probability is $p_{in} = |R_{in}|/N$; the mean for $R_{out}$ is $\mu_{out}$, and probability is $p_{out} =$
