
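The capacity of a neural network to absorb information is limited by its number of parameters. In this paper, we propose a new type of layer, the Sparsely-Gated Mixture-of-Experts (MoE) layer, which can effectively increase model capacity with only a small increase in computation. The layer contains up to tens of thousands of feed-forward sub-networks (called experts), with up to tens of billions of parameters in total. A trainable gating network determines a sparse combination of these experts to use for each example. We apply the MoE to language modeling, a task in which model capacity is critical for absorbing the vast amount of world knowledge available in the training corpus. We present new language model architectures that inject MoE layers into a stacked LSTM, yielding models with orders of magnitude more usable parameters than other models. On language modeling and machine translation benchmarks, we match or exceed the current state of the art at lower cost. This includes a perplexity of 29.9 on the 1 Billion Word Language Modeling Benchmark, and BLEU scores of 40.56 and 26.03 on WMT'14 En to Fr (English-French) and En to De (English-German) translation, respectively.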


Under review as a conference paper at ICLR 2017

OUTRAGEOUSLY LARGE NEURAL NETWORKS: THE SPARSELY-GATED MIXTURE-OF-EXPERTS LAYER

Noam Shazeer¹, Azalia Mirhoseini∗†¹, Krzysztof Maziarz∗², Andy Davis¹, Quoc Le¹, Geoffrey Hinton¹ and Jeff Dean¹

¹Google Brain, {noam,azalia,andydavis,qvl,geoffhinton,jeff}@google.com

²Jagiellonian University, Cracow, krzysztof.maziarz@student.uj.edu.pl

ABSTRACT

The capacity of a neural network to absorb information is limited by its number of parameters. Conditional computation, where parts of the network are active on a per-example basis, has been proposed in theory as a way of dramatically increasing model capacity without a proportional increase in computation. In practice, however, there are significant algorithmic and performance challenges. In this work, we address these challenges and finally realize the promise of conditional computation, achieving greater than 1000x improvements in model capacity with only minor losses in computational efficiency on modern GPU clusters. We introduce a Sparsely-Gated Mixture-of-Experts layer (MoE), consisting of up to thousands of feed-forward sub-networks. A trainable gating network determines a sparse combination of these experts to use for each example. We apply the MoE to the tasks of language modeling and machine translation, where model capacity is critical for absorbing the vast quantities of knowledge available in the training corpora. We present model architectures in which a MoE with up to 137 billion parameters is applied convolutionally between stacked LSTM layers. On large language modeling and machine translation benchmarks, these models achieve significantly better results than state-of-the-art at lower computational cost.

1 INTRODUCTION AND RELATED WORK

1.1 CONDITIONAL COMPUTATION

Exploiting scale in both training data and model size has been central to the success of deep learning. When datasets are sufficiently large, increasing the capacity (number of parameters) of neural networks can give much better prediction accuracy. This has been shown in domains such as text (Sutskever et al., 2014; Bahdanau et al., 2014; Jozefowicz et al., 2016; Wu et al., 2016), images (Krizhevsky et al., 2012; Le et al., 2012), and audio (Hinton et al., 2012; Amodei et al., 2015). For typical deep learning models, where the entire model is activated for every example, this leads to a roughly quadratic blow-up in training costs, as both the model size and the number of training examples increase. Unfortunately, the advances in computing power and distributed computation fall short of meeting such demand.

Various forms of conditional computation have been proposed as a way to increase model capacity without a proportional increase in computational costs (Davis & Arel, 2013; Bengio et al., 2013; Eigen et al., 2013; Ludovic Denoyer, 2014; Cho & Bengio, 2014; Bengio et al., 2015; Almahairi et al., 2015). In these schemes, large parts of a network are active or inactive on a per-example basis. The gating decisions may be binary or sparse and continuous, stochastic or deterministic. Various forms of reinforcement learning and back-propagation have been proposed for training the gating decisions.

∗Equally major contributors

†Work done as a member of the Google Brain Residency program (g.co/brainresidency)


arXiv:1701.06538v1 [cs.LG] 23 Jan 2017


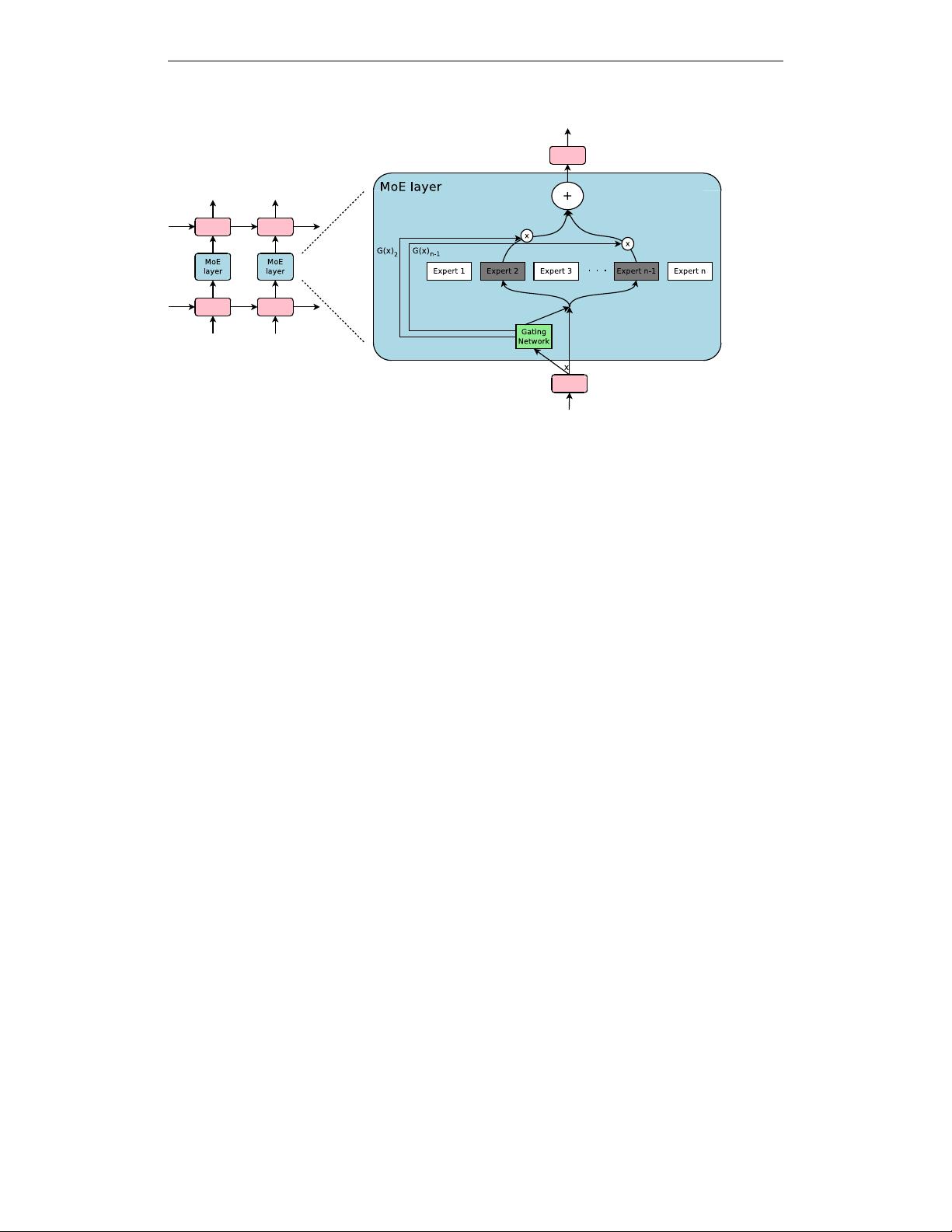

Figure 1: A Mixture of Experts (MoE) layer embedded within a recurrent language model. In this case, the sparse gating function selects two experts to perform computations. Their outputs are modulated by the outputs of the gating network.

While these ideas are promising in theory, no work to date has yet demonstrated massive improvements in model capacity, training time, or model quality. We blame this on a combination of the following challenges:

• Modern computing devices, especially GPUs, are much faster at arithmetic than at branching. Most of the works above recognize this and propose turning on/off large chunks of the network with each gating decision.

• Large batch sizes are critical for performance, as they amortize the costs of parameter transfers and updates. Conditional computation reduces the batch sizes for the conditionally active chunks of the network.

• Network bandwidth can be a bottleneck. A cluster of GPUs may have computational power thousands of times greater than the aggregate inter-device network bandwidth. To be computationally efficient, the relative computational versus network demands of an algorithm must exceed this ratio. Embedding layers, which can be seen as a form of conditional computation, are handicapped by this very problem. Since the embeddings generally need to be sent across the network, the number of (example, parameter) interactions is limited by network bandwidth instead of computational capacity.

• Depending on the scheme, loss terms may be necessary to achieve the desired level of sparsity per-chunk and/or per example. Bengio et al. (2015) use three such terms. These issues can affect both model quality and load-balancing.

• Model capacity is most critical for very large data sets. The existing literature on conditional computation deals with relatively small image recognition data sets consisting of up to 600,000 images. It is hard to imagine that the labels of these images provide a sufficient signal to adequately train a model with millions, let alone billions of parameters.

In this work, we address all of the above challenges for the first time and finally realize the promise of conditional computation. We obtain greater than 1000x improvements in model capacity with only minor losses in computational efficiency and significantly advance the state-of-the-art results on public language modeling and translation data sets.

1.2 OUR APPROACH: THE SPARSELY-GATED MIXTURE-OF-EXPERTS LAYER

Our approach to conditional computation is to introduce a new type of general purpose neural network component: a Sparsely-Gated Mixture-of-Experts Layer (MoE). The MoE consists of a number of experts, each a simple feed-forward neural network, and a trainable gating network which selects a sparse combination of the experts to process each input (see Figure 1). All parts of the network are trained jointly by back-propagation.


While the introduced technique is generic, in this paper we focus on language modeling and machine translation tasks, which are known to benefit from very large models. In particular, we apply a MoE convolutionally between stacked LSTM layers (Hochreiter & Schmidhuber, 1997), as in Figure 1. The MoE is called once for each position in the text, selecting a potentially different combination of experts at each position. The different experts tend to become highly specialized based on syntax and semantics (see Appendix E Table 9). On both language modeling and machine translation benchmarks, we improve on best published results at a fraction of the computational cost.

1.3 RELATED WORK ON MIXTURES OF EXPERTS

Since its introduction more than two decades ago (Jacobs et al., 1991; Jordan & Jacobs, 1994), the mixture-of-experts approach has been the subject of much research. Different types of expert architectures have been proposed such as SVMs (Collobert et al., 2002), Gaussian Processes (Tresp, 2001; Theis & Bethge, 2015; Deisenroth & Ng, 2015), Dirichlet Processes (Shahbaba & Neal, 2009), and deep networks. Other work has focused on different expert configurations such as a hierarchical structure (Yao et al., 2009), infinite numbers of experts (Rasmussen & Ghahramani, 2002), and adding experts sequentially (Aljundi et al., 2016). Garmash & Monz (2016) suggest an ensemble model in the format of mixture of experts for machine translation. The gating network is trained on a pre-trained ensemble NMT model.

The works above concern top-level mixtures of experts: the mixture of experts is the whole model. Eigen et al. (2013) introduce the idea of using multiple MoEs with their own gating networks as parts of a deep model. It is intuitive that the latter approach is more powerful, since complex problems may contain many sub-problems each requiring different experts. They also allude in their conclusion to the potential to introduce sparsity, turning MoEs into a vehicle for conditional computation.

Our work builds on this use of MoEs as a general purpose neural network component. While Eigen et al. (2013) use two stacked MoEs allowing for two sets of gating decisions, our convolutional application of the MoE allows for different gating decisions at each position in the text. We also realize sparse gating and demonstrate its use as a practical way to massively increase model capacity.

2 THE STRUCTURE OF THE MIXTURE-OF-EXPERTS LAYER

The Mixture-of-Experts (MoE) layer consists of a set of $n$ “expert networks” $E_1, \cdots, E_n$, and a “gating network” $G$ whose output is a sparse $n$-dimensional vector. Figure 1 shows an overview of the MoE module. The experts are themselves neural networks, each with their own parameters. Although in principle we only require that the experts accept the same sized inputs and produce the same-sized outputs, in our initial investigations in this paper, we restrict ourselves to the case where the models are feed-forward networks with identical architectures, but with separate parameters.

Let us denote by $G(x)$ and $E_i(x)$ the output of the gating network and the output of the $i$-th expert network for a given input $x$. The output $y$ of the MoE module can be written as follows:

$$y = \sum_{i=1}^{n} G(x)_i E_i(x) \tag{1}$$

We save computation based on the sparsity of the output of $G(x)$. Wherever $G(x)_i = 0$, we need not compute $E_i(x)$. In our experiments, we have up to thousands of experts, but only need to evaluate a handful of them for every example. If the number of experts is very large, we can reduce the branching factor by using a two-level hierarchical MoE. In a hierarchical MoE, a primary gating network chooses a sparse weighted combination of “experts”, each of which is itself a secondary mixture-of-experts with its own gating network. In the following we focus on ordinary MoEs. We provide more details on hierarchical MoEs in Appendix B.
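Equation (1) and the sparsity argument above can be illustrated with a minimal Python sketch. It assumes the gate vector $G(x)$ has already been produced by some gating network. For brevity it uses toy experts that are single weight matrices rather than the feed-forward networks used in the paper. The helper names `moe_output` and `make_linear_expert` are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Sequence[float]], Vector]


def make_linear_expert(weights: Sequence[Sequence[float]]) -> Expert:
    """Hypothetical toy expert: one weight matrix applied to x (rows = output units).
    The paper's experts are feed-forward networks; a single matrix keeps this short."""
    def expert(x: Sequence[float]) -> Vector:
        return [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    return expert


def moe_output(x: Sequence[float], gates: Sequence[float],
               experts: Sequence[Expert], out_dim: int) -> Tuple[Vector, int]:
    """Eq. (1): y = sum_i G(x)_i * E_i(x), computing E_i(x) only where G(x)_i != 0.
    Returns the output vector and the number of experts actually evaluated."""
    if len(gates) != len(experts):
        raise ValueError("need exactly one gate value per expert")
    y = [0.0] * out_dim
    evaluated = 0
    for g, expert in zip(gates, experts):
        if g == 0.0:
            continue  # sparse gate: this expert is never run
        evaluated += 1
        for j, v in enumerate(expert(x)):
            y[j] += g * v
    return y, evaluated


if __name__ == "__main__":
    # Four hypothetical experts; expert i simply scales its input by (i + 1).
    experts = [make_linear_expert([[i + 1.0, 0.0], [0.0, i + 1.0]]) for i in range(4)]
    print(moe_output([1.0, 2.0], [0.0, 0.75, 0.0, 0.25], experts, out_dim=2))
    # → ([2.5, 5.0], 2): only the two experts with nonzero gates were evaluated
```

Here the cost scales with the number of nonzero gates rather than with $n$, which is the saving the paragraph describes.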

Our implementation is related to other models of conditional computation. A MoE whose experts are simple weight matrices is similar to the parameterized weight matrix proposed in (Cho & Bengio, 2014). A MoE whose experts have one hidden layer is similar to the block-wise dropout described in (Bengio et al., 2015), where the dropped-out layer is sandwiched between fully-activated layers.


2.1 GATING NETWORK

Softmax Gating: A simple choice of non-sparse gating function (Jordan & Jacobs, 1994) is to multiply the input by a trainable weight matrix $W_g$ and then apply the $\mathrm{Softmax}$ function.

$$G_\sigma(x) = \mathrm{Softmax}(x \cdot W_g) \tag{2}$$
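As an illustration only, Equation (2) might be sketched in plain Python as follows. The sketch treats $x$ as a row vector and $W_g$ as a nested list of shape (input size) × $n$. The function names are hypothetical. Subtracting the maximum logit inside `softmax` is a standard numerical-stability step and does not change the result.

```python
import math
from typing import List, Sequence


def matvec(x: Sequence[float], W: Sequence[Sequence[float]]) -> List[float]:
    """Row vector times matrix: (x · W)_j = sum_i x_i * W[i][j]."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max logit to avoid overflow in exp
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def softmax_gating(x: Sequence[float], W_g: Sequence[Sequence[float]]) -> List[float]:
    """Eq. (2): G_sigma(x) = Softmax(x · W_g); every expert gets a nonzero weight."""
    return softmax(matvec(x, W_g))


if __name__ == "__main__":
    # Hypothetical 2-dimensional input and n = 3 experts.
    W_g = [[0.3, -1.2, 0.8], [2.0, 0.1, -0.4]]
    print(softmax_gating([1.0, -0.5], W_g))
```

Every gate value is strictly positive, so all $n$ experts would still have to be evaluated. That is why the paper adds sparsity in its noisy top-k variant.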

Noisy Top-K Gating: We add two components to the Softmax gating network: sparsity and noise. Before taking the softmax function, we add tunable Gaussian noise, then keep only the top $k$ values, setting the rest to $-\infty$ (which causes the corresponding gate values to equal 0). The sparsity serves to save computation, as described above. While this form of sparsity creates some theoretically scary discontinuities in the output of gating function, we have not yet observed this to be a problem in practice. The noise term helps with load balancing, as will be discussed in Appendix A. The amount of noise per component is controlled by a second trainable weight matrix $W_{noise}$.

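$$G(x) = \mathrm{Softmax}(\mathrm{KeepTopK}(H(x), k)) \tag{3}$$

$$H(x)_i = (x \cdot W_g)_i + \mathrm{StandardNormal}() \cdot \mathrm{Softplus}((x \cdot W_{noise})_i) \tag{4}$$

$$\mathrm{KeepTopK}(v, k)_i = \begin{cases} v_i & \text{if } v_i \text{ is in the top } k \text{ elements of } v. \\ -\infty & \text{otherwise.} \end{cases} \tag{5}$$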
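Equations (3) to (5) can be sketched roughly as below. The sketch rests on several assumptions of ours:

- Plain Python lists stand in for tensors.
- Ties in KeepTopK are broken by index order.
- `random.Random.gauss` supplies the StandardNormal() samples.
- Passing `rng=None` drops the noise term so the output is deterministic. This switch is our addition and is not part of Equation (4).

All function names are hypothetical.

```python
import math
import random
from typing import List, Optional, Sequence


def matvec(x: Sequence[float], W: Sequence[Sequence[float]]) -> List[float]:
    """Row vector times matrix: (x · W)_j = sum_i x_i * W[i][j]."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def keep_top_k(v: Sequence[float], k: int) -> List[float]:
    """Eq. (5): keep the k largest entries of v and set the rest to -inf."""
    top = set(sorted(range(len(v)), key=lambda i: v[i], reverse=True)[:k])
    return [v[i] if i in top else -math.inf for i in range(len(v))]


def softmax(logits: Sequence[float]) -> List[float]:
    """Softmax; -inf entries become exactly 0.0 because math.exp(-inf) == 0.0."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def noisy_top_k_gating(x: Sequence[float], W_g: Sequence[Sequence[float]],
                       W_noise: Sequence[Sequence[float]], k: int,
                       rng: Optional[random.Random]) -> List[float]:
    """Eqs. (3)-(4). rng=None omits the noise term (assumption, for deterministic checks)."""
    clean = matvec(x, W_g)
    if rng is None:
        h = clean
    else:
        noise_std = [softplus(z) for z in matvec(x, W_noise)]
        h = [c + rng.gauss(0.0, 1.0) * s for c, s in zip(clean, noise_std)]
    return softmax(keep_top_k(h, k))


if __name__ == "__main__":
    # Hypothetical weights: 2-dimensional input, n = 4 experts, k = 2.
    W_g = [[2.0, 0.0, 1.0, -1.0], [0.0, 0.0, 0.0, 0.0]]
    W_noise = [[0.0] * 4, [0.0] * 4]
    print(noisy_top_k_gating([1.0, 0.0], W_g, W_noise, k=2, rng=random.Random(0)))
```

Exactly $k$ gates come out nonzero, and only the corresponding experts need to run in Equation (1).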

Training the Gating Network: We train the gating network by simple back-propagation, along with the rest of the model. If we choose $k > 1$, the gate values for the top $k$ experts have nonzero derivatives with respect to the weights of the gating network. This type of occasionally-sensitive behavior is described in (Bengio et al., 2013) with respect to noisy rectifiers. Gradients also back-propagate through the gating network to its inputs. Our method differs here from (Bengio et al., 2015) who use boolean gates and a REINFORCE-style approach to train the gating network.
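The claim that the surviving gate values have nonzero derivatives only when $k > 1$ can be checked numerically. The sketch below is our illustration, not the paper's training code. It assumes noise-free logits $(x \cdot W_g)$ and takes a central finite difference with respect to those logits rather than the weights. By the chain rule, the derivative with respect to $W_g$ is this quantity scaled by the input. The function names are hypothetical.

- With $k = 1$, the single surviving gate is always exactly 1, so its derivative vanishes.
- With $k = 2$, the two surviving gates form a two-way softmax whose derivative is $G_0(1 - G_0)$.

```python
import math
from typing import List, Sequence


def top_k_softmax(logits: Sequence[float], k: int) -> List[float]:
    """Noise-free version of Eqs. (3) and (5): softmax over the k largest logits."""
    top = set(sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k])
    kept = [v if i in top else -math.inf for i, v in enumerate(logits)]
    m = max(kept)
    exps = [math.exp(v - m) for v in kept]  # exp(-inf) == 0.0 for dropped experts
    total = sum(exps)
    return [e / total for e in exps]


def gate_derivative(logits: Sequence[float], k: int, i: int, j: int,
                    eps: float = 1e-6) -> float:
    """Central finite-difference estimate of dG_i / d logit_j."""
    up = list(logits)
    up[j] += eps
    down = list(logits)
    down[j] -= eps
    return (top_k_softmax(up, k)[i] - top_k_softmax(down, k)[i]) / (2.0 * eps)


if __name__ == "__main__":
    logits = [2.0, 1.0, -0.5, 0.3]  # hypothetical gating logits for n = 4 experts
    print(gate_derivative(logits, k=1, i=0, j=0))  # 0.0: the lone top-1 gate is always 1
    print(gate_derivative(logits, k=2, i=0, j=0))  # about 0.197 = G_0 * (1 - G_0)
```

Experts outside the top $k$ still receive a zero derivative in this sketch, which matches the "occasionally-sensitive" behavior described above.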

3 ADDRESSING PERFORMANCE CHALLENGES

3.1 THE SHRINKING BATCH PROBLEM

On modern CPUs and GPUs, large batch sizes are necessary for computational efficiency, so as to amortize the overhead of parameter loads and updates. If the gating network chooses $k$ out of $n$ experts for each example, then for a batch of $b$ examples, each expert receives a much smaller batch of approximately $\frac{kb}{n} \ll b$ examples. This causes a naive MoE implementation to become very inefficient as the number of experts increases. The solution to this shrinking batch problem is to make the original batch size as large as possible. However, batch size tends to be limited by the memory necessary to store activations between the forwards and backwards passes. We propose the following techniques for increasing the batch size:

Mixing Data Parallelism and Model Parallelism: In a conventional distributed training setting, multiple copies of the model on different devices asynchronously process distinct batches of data, and parameters are synchronized through a set of parameter servers. In our technique, these different batches run synchronously so that they can be combined for the MoE layer. We distribute the standard layers of the model and the gating network according to conventional data-parallel schemes, but keep only one shared copy of each expert. Each expert in the MoE layer receives a combined batch consisting of the relevant examples from all of the data-parallel input batches. The same set of devices function as data-parallel replicas (for the standard layers and the gating networks) and as model-parallel shards (each hosting a subset of the experts). If the model is distributed over $d$ devices, and each device processes a batch of size $b$, each expert receives a batch of approximately $\frac{kbd}{n}$ examples. Thus, we achieve a factor of $d$ improvement in expert batch size.
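The batch-size arithmetic above can be sketched as below. Each expert receives $\frac{kb}{n}$ examples from a single batch and $\frac{kbd}{n}$ after $d$ synchronous batches are combined. The values $k = 4$, $b = 1024$, $n = 256$, $d = 16$ in the usage lines are hypothetical, chosen only to make the numbers easy to follow. `simulate_routing` replaces the learned gating network with uniformly random top-k choices; this is an assumption for illustration, not how the paper routes examples. Both function names are hypothetical.

```python
import random
from collections import Counter
from typing import List


def expected_expert_batch(k: int, b: int, n: int, d: int = 1) -> float:
    """Approximate examples per expert: k*b/n for one batch of size b, or
    k*b*d/n when d synchronous data-parallel batches are combined at the MoE layer."""
    return k * b * d / n


def simulate_routing(k: int, b: int, n: int, d: int, seed: int = 0) -> List[int]:
    """Count how many of the d*b combined examples reach each expert when every
    example picks k distinct experts uniformly at random (stand-in for learned gating)."""
    rng = random.Random(seed)
    counts: Counter = Counter()
    for _ in range(d * b):
        counts.update(rng.sample(range(n), k))
    return [counts[e] for e in range(n)]


if __name__ == "__main__":
    k, b, n, d = 4, 1024, 256, 16  # hypothetical sizes
    print(expected_expert_batch(k, b, n))     # 16.0 examples per expert on one device
    print(expected_expert_batch(k, b, n, d))  # 256.0 after combining 16 device batches
    loads = simulate_routing(k, b, n, d)
    print(sum(loads) / n)                     # 256.0: every example adds exactly k assignments
    print(min(loads), max(loads))             # spread around the mean under random routing
```

The mean load is exactly $\frac{kbd}{n}$ by construction. The spread between the least and most loaded experts hints at why load balancing, discussed in Appendix A, also matters.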

In the case of a hierarchical MoE (Appendix B), the primary gating network employs data parallelism, and the secondary MoEs employ model parallelism. Each secondary MoE resides on one device.
