
Understanding Disk Failure Rates: What Does an MTTF of 1,000,000 Hours Mean to You?

BIANCA SCHROEDER and GARTH A. GIBSON

Carnegie Mellon University

Component failure in large-scale IT installations is becoming an ever-larger problem as the number

of components in a single cluster approaches a million.

This article is an extension of our previous study on disk failures [Schroeder and Gibson 2007] and presents and analyzes field-gathered disk replacement data from a number of large production systems, including high-performance computing sites and internet services sites. More than 110,000 disks are covered by this data, some for an entire lifetime of five years. The data includes drives with SCSI and FC, as well as SATA interfaces. The mean time-to-failure (MTTF) of those drives, as specified in their datasheets, ranges from 1,000,000 to 1,500,000 hours, suggesting a nominal annual failure rate of at most 0.88%.

We find that in the field, annual disk replacement rates typically exceed 1%, with 2–4% common

and up to 13% observed on some systems. This suggests that field replacement is a fairly different

process than one might predict based on datasheet MTTF.

We also find evidence, based on records of disk replacements in the field, that failure rate is not constant with age, and that rather than a significant infant mortality effect, we see a significant early onset of wear-out degradation. In other words, the replacement rates in our data grew constantly with age, an effect often assumed not to set in until after a nominal lifetime of 5 years.

Interestingly, we observe little difference in replacement rates between SCSI, FC, and SATA drives, potentially an indication that disk-independent factors such as operating conditions affect replacement rates more than component-specific ones. On the other hand, we see only one instance of a customer rejecting an entire population of disks as a bad batch, in this case because of media error rates, and this instance involved SATA disks.

Time between replacement, a proxy for time between failure, is not well modeled by an exponential distribution and exhibits significant levels of correlation, including autocorrelation and long-range dependence.

Categories and Subject Descriptors: B.8.0 [Performance and Reliability]: General; C.4 [Computer Systems Organization]: Performance of Systems; D.4.5 [Operating Systems]: Reliability

General Terms: Measurement, Reliability

Additional Key Words and Phrases: Hard drive replacements, hard drive failure, storage reliability, MTTF, annual failure rates, annual replacement rates, time between failure, wear-out, infant mortality, failure correlation, datasheet MTTF

ACM Reference Format:

Schroeder, B. and Gibson, G. A. 2007. Understanding disk failure rates: What does an MTTF of 1,000,000 hours mean to you? ACM Trans. Storage 3, 3, Article 8 (October 2007), 31 pages. DOI = 10.1145/1288783.1288785 http://doi.acm.org/10.1145/1288783.1288785

The MPP2 data was collected and made available using the Molecular Science Computing Facility (MSCF) in the William R. Wiley Environmental Molecular Sciences Laboratory, a national scientific user facility sponsored by the U.S. Department of Energy’s Office of Biological and Environmental Research. This material is based upon work supported by the Department of Energy under Award no. DE-FC02-06ER25767 and on research sponsored in part by the Army Research Office under agreement no. DAAD19-02-1-0389. This report was prepared as an account of work sponsored by an agency of the United States Government. Neither the United States Government nor any agency thereof, nor any of their employees, makes any warranty, express or implied, or assumes any legal liability or responsibility for the accuracy, completeness, or usefulness of any information, apparatus, product, or process disclosed, or represents that its use would not infringe privately owned rights. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise does not necessarily constitute or imply its endorsement, recommendation, or favoring by the United States Government or any agency thereof. The views and opinions of authors expressed herein do not necessarily state or reflect those of the United States Government or any agency thereof.

Authors’ addresses: B. Schroeder (corresponding author), G. A. Gibson, Computer Science Department, Carnegie Mellon University, 5000 Forbes Ave., Pittsburgh, PA 15213; email: {bianca,garth}@cs.cmu.edu.

Permission to make digital or hard copies of part or all of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or direct commercial advantage and that copies show this notice on the first page or initial screen of a display along with the full citation. Copyrights for components of this work owned by others than ACM must be honored. Abstracting with credit is permitted. To copy otherwise, to republish, to post on servers, to redistribute to lists, or to use any component of this work in other works requires prior specific permission and/or a fee. Permissions may be requested from Publications Dept., ACM, Inc., 2 Penn Plaza, Suite 701, New York, NY 10121-0701 USA, fax +1 (212) 869-0481, or permissions@acm.org.

© 2007 ACM 1553-3077/2007/10-ART8 $5.00 DOI = 10.1145/1288783.1288785 http://doi.acm.org/10.1145/1288783.1288785

ACM Transactions on Storage, Vol. 3, No. 3, Article 8, Publication date: October 2007.

1. MOTIVATION

Despite major efforts both in industry and in academia, high reliability remains a major challenge in running large-scale IT systems, and disaster prevention and cost of actual disasters make up a large fraction of the total cost of ownership. With ever-larger server clusters, maintaining high levels of reliability and availability is a growing problem for many sites, including high-performance computing systems and Internet service providers. A particularly big concern is the reliability of storage systems, for several reasons. First, failure of storage can not only cause temporary data unavailability, but in the worst case can lead to permanent data loss. Second, technology trends and market forces may combine to make storage system failures occur more frequently in the future [Prabhakaran et al. 2005]. Finally, the size of storage systems in modern, large-scale IT installations has grown to an unprecedented scale with thousands of storage devices, making component failures the norm rather than the exception [Ghemawat et al. 2003].

Large-scale IT systems therefore need better system design and management to cope with more frequent failures. One might expect increasing levels of redundancy designed for specific failure modes [Corbett et al. 2004; Ghemawat et al. 2003], for example. Such designs and management systems are based on very simple models of component failure and repair processes [Patterson et al. 1988]. Better knowledge about the statistical properties of storage failure processes, such as the distribution of time between failures, may empower researchers and designers to develop new, more reliable, and available storage systems.


Unfortunately, many aspects of disk failures in real systems are not well understood, probably because the owners of such systems are reluctant to release failure data or do not gather such data. As a result, practitioners usually rely on vendor-specified parameters such as mean time-to-failure (MTTF) to model failure processes, although many are skeptical of the accuracy of those models [Elerath 2000a, 2000b]. Too much academic and corporate research is based on anecdotes and back-of-the-envelope calculations, rather than empirical data [Schwarz et al. 2006].

The work in this article is part of a broader research agenda with the long-term goal of providing a better understanding of failures in IT systems by collecting, analyzing, and making publicly available a diverse set of real failure histories from large-scale production systems. In our pursuit, we have spoken to a number of large production sites and were able to convince several of them to provide failure data from some of their systems.

In this work, we provide an extension of our study in Schroeder and Gibson [2007]. We present an analysis of nine datasets we have collected, with a focus on storage-related failures. The datasets come from a number of large-scale production systems, including high-performance computing sites and large Internet services sites, and consist primarily of hardware replacement logs. The datasets vary in duration from one month to five years and cover in total a population of more than 100,000 drives from at least four different vendors. Disks covered by this data include drives with SCSI and FC interfaces, commonly represented as the most reliable types of disk drives, as well as drives with SATA interfaces, common in desktop and nearline systems. Although 100,000 drives is a very large sample relative to previously published studies, it is small compared to the estimated 35 million enterprise drives, and 300 million total drives built in 2006 [Drummer et al. 2006]. Phenomena such as bad batches caused by fabrication line changes may require much larger datasets to fully characterize.

We analyze three different aspects of the data. We begin in Section 3 by asking how disk replacement frequencies compare to replacement frequencies of other hardware components. In Section 4, we provide a quantitative analysis of disk replacement rates observed in the field and compare our observations with common predictors and models used by vendors. In Section 5, we analyze the statistical properties of disk replacement rates. We study correlations between disk replacements, identify the key properties of the empirical distribution of time between replacements, and compare our results to common models and assumptions. Section 7 provides an overview of related work and Section 8 concludes.
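To make the kind of statistical check mentioned above concrete, the following is a minimal sketch, not the analysis code used in this study. It relies on two standard facts: an exponential distribution has a squared coefficient of variation of exactly 1, and independent samples show a lag-1 autocorrelation near 0. The function names and the replacement times are hypothetical and chosen only for illustration.

```python
from statistics import mean, pvariance


def squared_cv(gaps):
    """Squared coefficient of variation: variance / mean^2.

    Equals 1 for an exponential distribution; larger values indicate
    more variability than an exponential model predicts.
    """
    m = mean(gaps)
    return pvariance(gaps, mu=m) / (m * m)


def lag1_autocorrelation(gaps):
    """Sample autocorrelation between each gap and the next one."""
    m = mean(gaps)
    numerator = sum((a - m) * (b - m) for a, b in zip(gaps, gaps[1:]))
    denominator = sum((g - m) ** 2 for g in gaps)
    return numerator / denominator


# Hypothetical replacement times in days since deployment (made-up data).
replacement_days = [3, 4, 6, 40, 41, 43, 44, 120, 122, 123]

# Time between consecutive replacements.
gaps = [later - earlier
        for earlier, later in zip(replacement_days, replacement_days[1:])]

print("squared CV:", squared_cv(gaps))
print("lag-1 autocorrelation:", lag1_autocorrelation(gaps))
```

A squared coefficient of variation well above 1, or an autocorrelation clearly different from 0, would be a first hint that an exponential model with independent inter-replacement times is a poor fit; a real analysis would of course need far more data and formal tests.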

2. METHODOLOGY

2.1 What is a Disk Failure?

While it is often assumed that disk failures follow a simple fail-stop model (where disks either work perfectly or fail absolutely and in an easily detectable manner [Patterson et al. 1988; Prabhakaran et al. 2005]), disk failures are much more complex in reality. For example, disk drives can experience latent sector faults or transient performance problems. Often it is hard to correctly attribute the root cause of a problem to a particular hardware component.

Our work is based on hardware replacement records and logs, that is, we focus on the disk conditions that lead a drive customer to treat a disk as permanently failed and to replace it. We analyze records from a number of large production systems which contain a record for every disk that was replaced in the system during the time of the data collection. To interpret the results of our work correctly, it is crucial to understand the process of how this data was created. After a disk drive is identified as the likely culprit in a problem, the operations staff (or the computer system itself) perform(s) a series of tests on the drive to assess its behavior. If the behavior qualifies as faulty according to the customer’s definition, the disk is replaced and a corresponding entry is made in the hardware replacement log.

The important thing to note is that there is not one unique definition for when a drive is faulty. In particular, customers and vendors might use different definitions. For example, a common way for a customer to test a drive is to read all of its sectors to see if any reads experience problems, deciding that it is faulty if any one operation takes longer than a certain threshold. The outcome of such a test will depend on how the thresholds are chosen. Many sites follow a “better safe than sorry” mentality, and use even more rigorous testing. As a result, it cannot be ruled out that a customer may declare a disk faulty, while its manufacturer sees it as healthy. This also means that the definition of “faulty” that a drive customer uses does not necessarily fit the definition that a drive manufacturer uses to make drive reliability projections. In fact, a disk vendor has reported that for 43% of all disks returned by customers they find no problem with the disk [Drummer et al. 2006].
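The dependence of such a test on the chosen threshold can be illustrated with a minimal sketch. The function name, the latency values, and both thresholds below are hypothetical; they do not describe the procedure of any particular site or vendor.

```python
from typing import Iterable


def is_faulty(read_latencies_ms: Iterable[float], threshold_ms: float) -> bool:
    """Declare a drive faulty if any single read exceeds the threshold."""
    return any(latency > threshold_ms for latency in read_latencies_ms)


# Made-up per-sector read latencies (milliseconds) for one drive.
latencies_ms = [4.1, 5.0, 4.7, 38.0, 4.9]

# A cautious customer and a more lenient tester reach different verdicts
# on exactly the same measurements.
print("strict threshold (25 ms):  ", is_faulty(latencies_ms, threshold_ms=25.0))
print("lenient threshold (100 ms):", is_faulty(latencies_ms, threshold_ms=100.0))
```

The same drive is replaced under the stricter threshold and kept under the more lenient one, which is one way a customer's replacement log can diverge from a manufacturer's view of the drive.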

It is also important to note that the failure behavior of a drive depends on

the operating conditions, and not only on component-level factors. For example,

failure rates are affected by environmental factors such as temperature and

humidity, data center handling procedures, workloads, and “duty cycles” or

powered-on hours patterns.

We would also like to point out that the failure behavior of disk drives, even if they are of the same model, can differ, since disks are manufactured using processes and parts that may change. These changes, such as a change in a drive’s firmware or a hardware component, or even the assembly line on which a drive was manufactured, can change the failure behavior of a drive. This effect is often called the effect of batches or vintage. A bad batch can lead to unusually high drive failure rates or anomalously high rates of media errors. For example, in the HPC3 dataset (see Table I) the customer had 11,000 SATA drives replaced in October 2006 after observing a high frequency of media errors during writes. Although it took a year to resolve, the customer and vendor agreed that these drives did not meet warranty conditions. The cause was attributed to the breakdown of a lubricant, leading to unacceptably high head flying heights. In the data, the replacements of these drives are not recorded as failures.

In our analysis we do not further study the effect of batches. We report on the

field experience, in terms of disk replacement rates, of a set of drive customers.


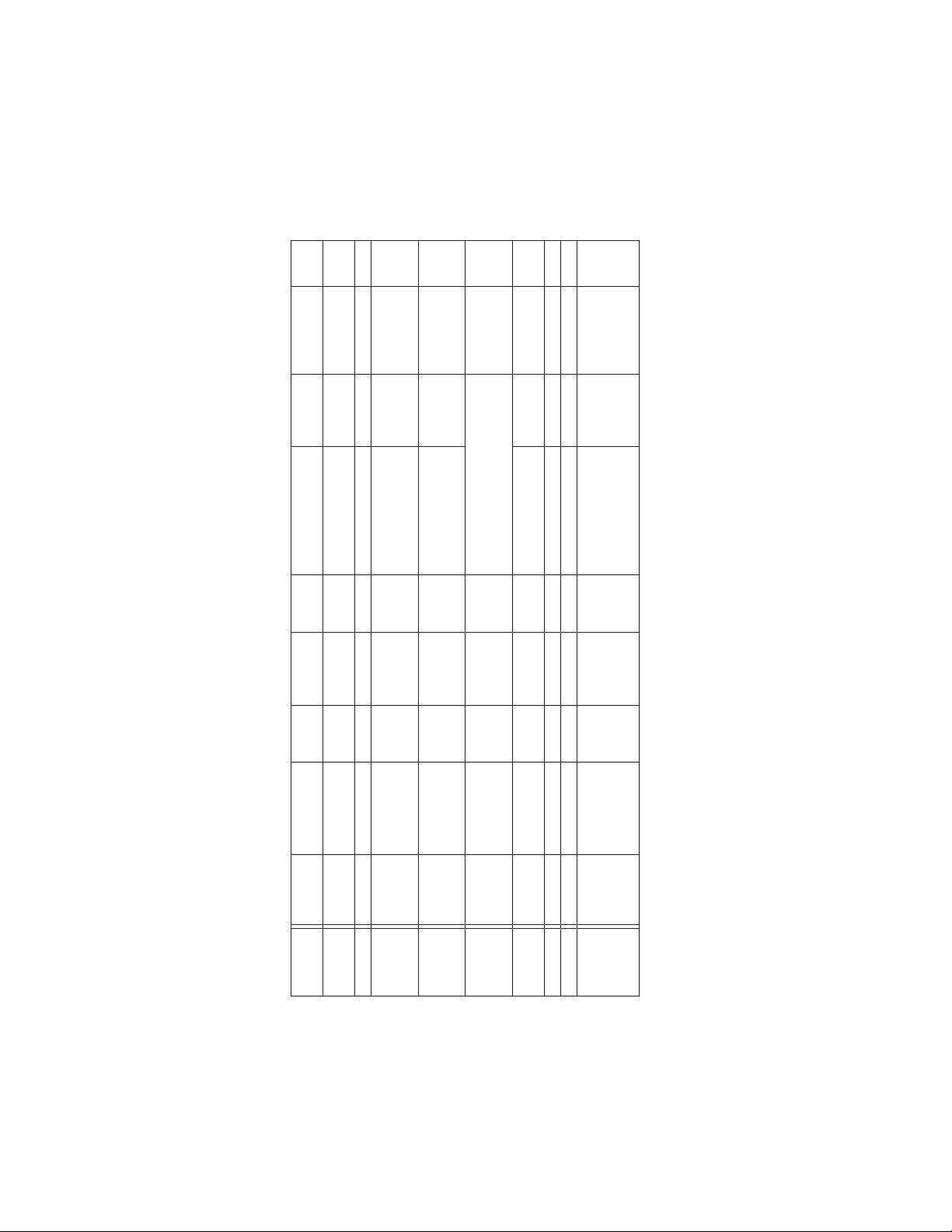

Table I. Overview of the Nine Failure Datasets

Type of #Disk Disk Disk MTTF Date of first ARR

Data set cluster Duration events # Servers Count Parameters (Mhours) Deploym. (%)

HPC1 HPC 08/01 - 05/06 474 765 2,318 18GB 10K SCSI 1.2 08/01 4.0

124 64 1,088 36GB 10K SCSI 1.2 2.2

HPC2 HPC 01/04 - 07/06 14 256 520 36GB 10K SCSI 1.2 12/01 1.1

HPC3 HPC 12/05 - 11/06 103 1,532 3,064 146GB 15K SCSI 1.5 08/05 3.7

HPC 12/05 - 11/06 4 N/A 144 73GB 15K SCSI 1.5 3.0

HPC 12/05 - 08/06 253 N/A 11,000 250GB 7.2K SATA 1.0 3.3

HPC4 Various 09/03 - 08/06 269 N/A 8,430 250GB SATA 1.0 09/03 2.2

HPC 11/05 - 08/06 7 N/A 2,030 500GB SATA 1.0 11/05 0.5

clusters 09/05 - 08/06 9 N/A 3,158 400GB SATA 1.0 09/05 0.8

HPC5 HPC 01/01 - 12/06 134 380 4,280 Mixed drive populations. 01/01 1.2

HPC 11/05 - 12/06 14 111 1,252 See description of HPC5 in 11/05 1.1

HPC 01/05 - 12/06 47 356 759 Section 2.3 for details. 01/05 3.1

HPC6 HPC 12/03 - 02/07 183 366 732 36GB 15K SCSI 1.2 12/02 6.7

HPC 12/03 - 02/07 1,642 574 5,166 36/73GB 15K SCSI 1.2 12/02 8.5

COM1 Int. serv. May 2006 84 N/A 26,734 10K SCSI 1.0 2001 2.8

COM2 Int. serv. 09/04 - 04/06 506 9,232 39,039 15K SCSI 1.2 2004 3.1

COM3 Int. serv. 01/05 - 12/05 2 N/A 56 10K FC 1.2 N/A 3.6

132 N/A 2,450 10K FC 1.2 N/A 5.4

108 N/A 796 10K FC 1.2 N/A 13.6

104 N/A 432 10K FC 1.2 1998 24.1

Note that the disk count given in the table is the number of drives in the system at the end of the data collection period. For some systems the number of

drives changed during the data collection period, and we account for that in our analysis. The disk parameters 10K and 15K refer to the rotation speed

in revolutions per minute; drives not labeled 10K or 15K probably have a rotation speed of 7200 rpm.
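As a rough illustration of how the MTTF and ARR columns relate, the sketch below converts a datasheet MTTF into a nominal annual failure rate by dividing the hours in a year by the MTTF, which is the arithmetic behind the "at most 0.88%" figure for a 1,000,000-hour MTTF. It also estimates an annual replacement rate as replacements per disk-year. The function names are illustrative, and the constant-population assumption is a simplification: the analysis in this article accounts for changes in the number of drives, so this estimate will not reproduce every ARR value in Table I.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; assumes drives are powered on all year


def datasheet_afr_percent(mttf_hours: float) -> float:
    """Nominal annual failure rate (%) implied by a datasheet MTTF."""
    return 100.0 * HOURS_PER_YEAR / mttf_hours


def arr_percent(replacements: int, disk_count: int, years: float) -> float:
    """Replacements per disk-year, in percent.

    Simplifying assumption (not the method used in this article): the
    disk population stays at disk_count for the whole observation period.
    """
    return 100.0 * replacements / (disk_count * years)


# HPC2 row of Table I: 14 disk events, 520 disks, 01/04 - 07/06
# (taken here as roughly 2.5 years), datasheet MTTF of 1.2 Mhours.
nominal = datasheet_afr_percent(1.2e6)
observed = arr_percent(replacements=14, disk_count=520, years=2.5)
print(f"datasheet AFR: {nominal:.2f}%   estimated ARR: {observed:.2f}%")
```

For this row the estimate lands close to the tabulated ARR, while the nominal rate implied by the datasheet MTTF is noticeably lower.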
